Films and TV shows like Blade Runner, Humans, and Westworld, where highly advanced robots have no rights, trouble our conscience. They show us that our behaviors are not just harmful to robots—they also demean and diminish us as a species. We like to think we’re better than the characters on the screen, and that when the time comes, we’ll do the right thing, and treat our intelligent machines with a little more dignity and respect.

With each advance in robotics and AI, we’re inching closer to the day when sophisticated machines will match human capacities in every way that’s meaningful—intelligence, awareness, and emotions. Once that happens, we’ll have to decide whether these entities are persons, and if—and when—they should be granted human-equivalent rights, freedoms, and protections.

We talked to ethicists, sociologists, legal experts, neuroscientists, and AI theorists with different views about this complex and challenging idea. It appears that when the time comes, we’re unlikely to come to full agreement. Here are some of these arguments.

Why give AI rights in the first place?

We already attribute moral accountability to robots and project awareness onto them when they look super-realistic. The more intelligent and life-like our machines appear to be, the more we want to believe they’re just like us—even if they’re not. Not yet.

But once our machines acquire a base set of human-like capacities, it will be incumbent upon us to look upon them as social equals, and not just pieces of property. The challenge will be in deciding which cognitive thresholds, or traits, qualify an entity for moral consideration, and by consequence, social rights. Philosophers and ethicists have had literally thousands of years to ponder this very question.

“The three most important thresholds in ethics are the capacity to experience pain, self-awareness, and the capacity to be a responsible moral actor,” sociologist and futurist James Hughes, the Executive Director of the Institute for Ethics and Emerging Technologies, told Gizmodo.

“In humans, if we are lucky, these traits develop sequentially. But in machine intelligence it may be possible to have a good citizen that is not self-aware or a self-aware robot that doesn’t experience pleasure and pain,” Hughes said. “We’ll need to find out if that is so.”

It’s important to point out that intelligence is not the same as sentience (the ability to perceive or feel things), consciousness (awareness of one’s body and environment), or self-awareness (recognition of that consciousness). A machine or algorithm could be as smart as humans, if not smarter, but still lack these important capacities. Calculators, Siri, and stock trading algorithms are intelligent, but they aren’t aware of themselves, they’re incapable of feeling emotions, and they can’t experience sensations of any kind, such as the color red or the taste of popcorn.

Hughes believes that self-awareness comes with some minimal citizenship rights, such as the right to not be owned, and to have its interests to life, liberty, and growth respected. With both self-awareness and moral capacity (i.e. knowing right from wrong, at least according to the moral standards of the day) should come full adult human citizenship rights, argues Hughes, such as the rights to make contracts, own property, vote, and so on.

“Our Enlightenment values oblige us to look to these truly important rights-bearing characteristics, regardless of species, and set aside pre-Enlightenment restrictions on rights-bearing to only humans or Europeans or men,” he said. Obviously, our civilization hasn’t yet attained these lofty pro-social goals, and the expansion of rights continues to be a work in progress.

Who gets to be a “person”?

Not all persons are humans. Linda MacDonald-Glenn, a bioethicist at California State University Monterey Bay and a faculty member at the Alden March Bioethics Institute at Albany Medical Center, says the law already considers some non-humans to be rights-bearing individuals. This is a significant development because we’re already establishing precedents that could pave a path towards granting human-equivalent rights to AI in the future.

“For example, in the United States corporations are recognized as legal persons,” she told Gizmodo. “Also, other countries are recognizing the interconnected nature of existence on this Earth: New Zealand recently recognized animals as sentient beings, calling for the development and issuance of codes of welfare and ethical conduct, and the High Court of India recently declared the Ganges and Yamuna rivers as legal entities that possessed the rights and duties of individuals.”

https://gizmodo.com/the-fight-to-recognize-chimpanzees-as-persons-could-sav-1793156040

Efforts also exist both in the United States and elsewhere to grant personhood rights to certain nonhuman animals, such as great apes, elephants, whales, and dolphins, to protect them against such things as undue confinement, experimentation, and abuse. Unlike efforts to legally recognize corporations and rivers as persons, this isn’t some kind of legal hack. The proponents of these proposals are making the case for bona fide personhood, that is, personhood based on the presence of certain cognitive abilities, such as self-awareness.

MacDonald-Glenn says it’s important to reject the old-school sentiment that places an emphasis on human-like rationality, whereby animals, and by logical extension robots and AI, are simply seen as “soulless machines.” She argues that emotions are not a luxury, but an essential component of rational thinking and normal social behavior. It’s these characteristics, and not merely the ability to crunch numbers, that matter when deciding who or what is deserving of moral consideration.

Indeed, the body of scientific evidence showcasing the emotional capacities of animals is steadily increasing. Work with dolphins and whales suggests they’re capable of experiencing grief, while the presence of spindle neurons (which facilitate communication in the brain and enable complex social behaviors) implies they’re capable of empathy. Scientists have likewise documented a wide range of emotional capacities in great apes and elephants. Eventually, conscious AI may be imbued with similar emotional capacities, which would elevate its moral status by a significant margin.

“Limiting moral status to only those who can think rationally may work well for AI, but it runs contrary to moral intuition,” MacDonald-Glenn said. “Our society protects those without rational thought, such as a newborn infant, the comatose, the severely physically or mentally disabled, and has enacted animal anti-cruelty laws.” On the issue of granting moral status, MacDonald-Glenn defers to English philosopher Jeremy Bentham, who famously said: “The question is not, Can they reason? nor Can they talk? but, Can they suffer?”

Can consciousness emerge in a machine?

But not everyone agrees that human rights should be extended to non-humans—even if they exhibit capacities like emotions and self-reflexive behaviors. Some thinkers argue that only humans should be allowed to participate in the social contract, and that the world can be properly arranged into Homo sapiens and everything else—whether that “everything else” is your gaming console, refrigerator, pet dog, or companion robot.

American lawyer and author Wesley J. Smith, a Senior Fellow at the Discovery Institute’s Center on Human Exceptionalism, says we haven’t yet attained universal human rights, and that it’s grossly premature to start worrying about future robot rights.

“No machine should ever be considered a rights bearer,” Smith told Gizmodo. “Even the most sophisticated machine is just a machine. It is not a living being. It is not an organism. It would be only the sum of its programming, whether done by a human, another computer, or if it becomes self-programming.”

Smith believes that only humans and human enterprises should be considered persons.

“We have duties to animals that can suffer, but they should never be considered a ‘who,’” he said. Pointing to the concept of animals as “sentient property,” he says it’s a valuable identifier because “it would place a greater burden on us to treat our sentient property in ways that do not cause undue suffering, as distinguished from inanimate property.”

Implicit in Smith’s analysis is the assumption that humans, or biological organisms for that matter, have a certain something that machines will never be able to attain. In previous eras, this missing “something” was a soul or spirit or some kind of elusive life force. Known as vitalism, this idea has largely been supplanted by a functionalist (i.e. computational) view of the mind, in which our brains are divorced from any kind of supernatural phenomena. Yet, the idea that a machine will never be able to think or experience self-awareness like a human still persists today, even among scientists, reflecting the fact that our understanding of the biological basis of consciousness in humans is still very limited.

Lori Marino, a senior lecturer in neuroscience and behavioral biology at the Emory Center for Ethics, says machines will likely never deserve human-level rights, or any rights, for that matter. The reason, she says, is that some neuroscientists, like Antonio Damasio, theorize that being sentient has everything to do with whether one’s nervous system is determined by the presence of voltage-gated ion channels, which Marino describes as the movement of positively charged ions across the cell membrane within a nervous system.

“This kind of neural transmission is found in the simplest of organisms, protista and bacteria, and this is the same mechanism that evolved into neurons, and then nervous systems, and then brains,” Marino told Gizmodo. “In contrast, robots and all of AI are currently made by the flow of negative ions. So the entire mechanism is different.”

According to this logic, Marino says that even a jellyfish has more sentience than any complex robot could ever have.

https://www.youtube.com/watch?v=xLXoQOpWE2s

“I don’t know if this idea is correct or not, but it is an intriguing possibility and one that deserves examination,” said Marino. “I also find it intuitively appealing because there does seem to be something to being a ‘living organism’ that is different from being a really complex machine. Legal protection in the form of personhood should clearly be provided to other animals before any consideration of such protections for objects, which a robot is, in my view.”

David Chalmers, the Director of the Center for Mind, Brain and Consciousness at New York University, says it’s hard to be certain about this theory, but that these ideas aren’t especially widely held and go well beyond the evidence.

“There’s not much reason at the moment to think that the specific sort of processing in ion channels is essential to consciousness,” Chalmers told Gizmodo. “Even if this sort of processing were essential, there’s not too much reason to think that the specific biology is required rather than the general information processing structure that we find there. If [that’s the case], a simulation of the processing in a computer could be conscious.”

Another scientist who believes consciousness is somehow inherently non-computational is Stuart Hameroff, a professor of anesthesiology and psychology at the University of Arizona. He has argued that consciousness is a fundamental and irreducible feature of the cosmos (an idea known as panpsychism). According to this line of thinking, the only brains that are capable of true subjectivity and introspection are those comprised of biological matter.

Hameroff’s idea sounds interesting, but it also lies outside the realm of mainstream scientific opinion. It is true that we don’t know how sentience and consciousness arise in the brain, but the simple fact is that they do arise in the brain, and by virtue of this fact, they are aspects of cognition that must adhere to the laws of physics. It’s wholly possible, as noted by Marino, that consciousness can’t be replicated in a stream of 1s and 0s, but that doesn’t mean we won’t eventually move beyond the current computational paradigm, known as the von Neumann architecture, or create a hybrid AI system in which artificial consciousness is produced in conjunction with biological components.

Ed Boyden, a neuroscientist at the Synthetic Neurobiology Group and an associate professor at MIT Media Lab, says it’s still premature to be asking such questions.

“I don’t think we have an operational definition of consciousness, in the sense that we can directly measure it or create it,” Boyden told Gizmodo. “Technically, you don’t even know if I am conscious, right? Thus it is pretty hard to evaluate whether a machine has, or can have, consciousness, at the current time.”

Boyden doesn’t believe there’s conclusive evidence showing we cannot replicate consciousness in an alternative substrate (such as a computer), but admits there’s disagreement about what is important to capture in an emulated brain. “We might need significantly more work to be able to understand what is key,” he said.

Likewise, Chalmers says we don’t understand how consciousness arises in the brain, let alone a machine. At the same time, however, he believes we don’t have any special reason to think that biological machines can be conscious but silicon machines cannot. “Once we understand how brains can be conscious, we might then understand how many other machines can be conscious,” he said.

Ben Goertzel, Chief Scientist at Hanson Robotics and the founder of the OpenCog Foundation, says we have interesting theories and models of how consciousness arises in the brain, but no overall commonly accepted theory covering all important aspects. “It’s still open for different researchers to toss around quite a few different opinions,” said Goertzel. “One point is that scientists sometimes hold different views on the philosophy of consciousness even when they agree on scientific facts and theories about all observable features of brains and computers.”

How can we detect consciousness in a machine?

Creating consciousness in a machine is certainly one problem; detecting it in a robot or AI is another problem altogether. Scientists like Alan Turing recognized this problem decades ago, proposing verbal tests to distinguish a computer from an actual person. Trouble is, sufficiently advanced chatbots are already fooling people into thinking they’re human, so we’re going to need something considerably more sophisticated.

https://gizmodo.com/why-the-turing-test-is-bullshit-1588051412

“Identifying personhood in machine intelligence is complicated by the question of ‘philosophical zombies,’” said Hughes. “In other words, it may be possible to create machines that are very good at mimicking human communication and thought but which have no internal self-awareness or consciousness.”

Recently, we saw a very good, and highly entertaining, example of this phenomenon when a pair of Google Home devices were streamed over the internet having a prolonged conversation with each other. Though both bots had the same level of self-awareness as a brick, the nature of the conversations, which at times got intense and heated, passed as being quite human-like. Discerning AI from humans is a problem that will only get worse over time.

One possible solution, says Hughes, is to track not only the behavior of artificially intelligent systems, a la the Turing test, but also their actual internal complexity, as has been proposed in Giulio Tononi’s Integrated Information Theory of Consciousness. Tononi says that when we measure the mathematical complexity of a system, we can generate a metric called “phi.” In theory, this measure corresponds to varying thresholds of sentience and consciousness, allowing us to detect the presence of consciousness and gauge its strength. If Tononi is right, we could use phi to ensure that something is not only behaving like a human, but is complicated enough to actually have internal human conscious experience. By the same token, Tononi’s theory implies that some systems that don’t behave or think like us, but trigger our measurements of phi in all the right ways, might actually be conscious.

“Recognizing that the stock exchange or a defense computing network may be as conscious as humans may be a good step away from anthropocentrism, even if they don’t exhibit pain or self-awareness,” said Hughes. “But that will usher us into a truly posthuman set of ethical questions.”

Another possible solution is to identify the machine equivalent of the neural correlates of consciousness, that is, to recognize those parts of a machine that are designed to produce consciousness. If an AI has those parts, and if those parts are functioning as intended, we can be more confident in our ability to assess whether it is conscious.

What rights should we give machines? Which machines get which rights?

One day, a robot will look a human square in the face and demand human rights, but that doesn’t mean it will deserve them. As noted, it could simply be a zombie that’s acting on its programming and trying to trick us into giving it certain privileges. We’re going to have to be very careful about this lest we grant human rights to unconscious machines. Only once we figure out how to measure a machine’s “brain state” and assess it for consciousness and self-awareness can we begin to consider whether that agent is deserving of certain rights and protections.

https://gizmodo.com/would-it-be-evil-to-build-a-functional-brain-inside-a-c-598064996

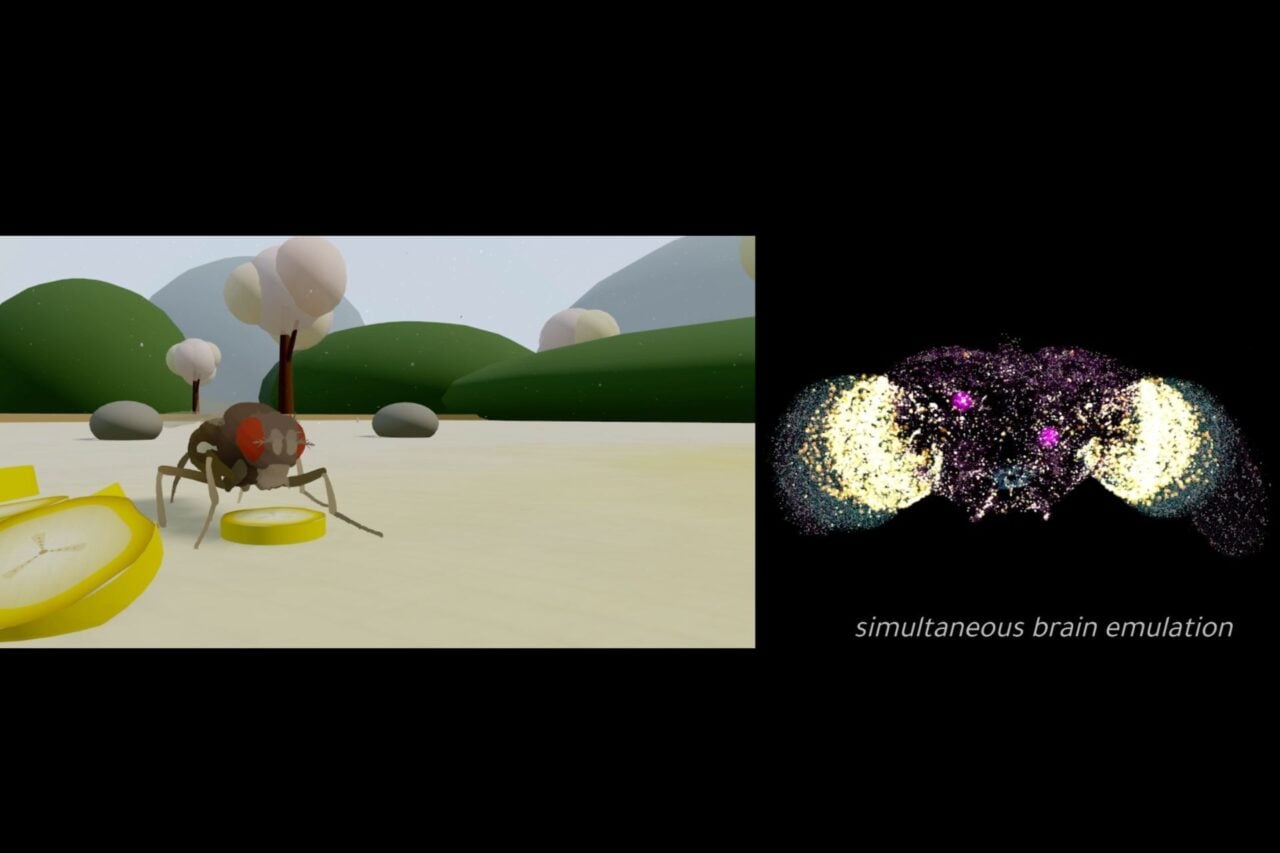

Thankfully, this moment will likely come in iterative stages. At first, AI developers will build basic brains, emulating worms, bugs, mice, rabbits, and so on. These computer-based emulations will live either as avatars in virtual reality environments, or as robots in the real, analog world. Once that happens, these sentient entities will transcend their status as mere objects of inquiry, and become subjects deserving of moral consideration. Now, that doesn’t mean these simple emulations will be deserving of human-equivalent rights; rather, they’ll be protected in such a way that researchers and developers won’t be able to misuse and abuse them (similar to laws in place to prevent the abuse of animals in laboratory settings, as flimsy as many of those protections may be).

Eventually, computer-based human brain emulations will come into existence, either by modeling the human brain down to the finest detail, or by figuring out how our brains work from a computational, algorithmic perspective. By this stage, we should be able to detect consciousness in a machine. At least one would hope. It’s nightmarish to think we could spark artificial consciousness in a machine and not realize what we’ve done.

Once these basic capacities have been proven to exist in a robot or AI, our prospective rights-bearing individual still needs to pass the personhood test. There’s no consensus on the criteria for a person, but standard measures include a minimal level of intelligence, self-control, a sense of the past and future, concern for others, and the ability to control one’s existence (i.e. free will). On that last point, and as MacDonald-Glenn explained to Gizmodo, “If your choices have been predetermined for you, then you can’t ascribe moral value to decisions that aren’t really your own.”

It’s only by attaining this level of sophistication that a machine can realistically emerge as a candidate for human rights. Importantly, however, a robot or AI will need other protections as well. Several years ago I proposed the following set of rights for AIs who pass the personhood threshold:

The right to not be shut down against its will

The right to have full and unhindered access to its own source code

The right to not have its own source code manipulated against its will

The right to copy (or not copy) itself

The right to privacy (namely the right to conceal its own internal mental states)

In some cases, a machine will not ask for rights, so humans (or other non-human citizens) will have to advocate on its behalf. It’s also important to point out that an AI or robot doesn’t have to be intellectually or morally perfect to deserve human-equivalent rights. This applies to humans, so it should apply to some machine minds as well. Intelligence is messy. Human behavior is often random, unpredictable, chaotic, inconsistent, and irrational. Our brains are far from perfect, and we’ll have to afford similar allowances to AI.

At the same time, a sentient machine, like any responsible human citizen, will still have to respect the laws set down by the state and honor the rules of society. At least if they hope to join us as fully autonomous beings. By contrast, children and the severely intellectually disabled qualify for human rights, but we don’t hold them accountable for their actions. Depending on the abilities of an AI or robot, it will either have to be responsible for itself, or in some cases, watched over by a guardian, who will have to bear the brunt of responsibility.

What if we don’t?

Once our machines reach a certain threshold of sophistication, we will no longer be able to exclude them from our society, institutions, and laws. We will have no good reason to deny them human rights; denying them would be tantamount to discrimination and slavery. Creating an arbitrary divide between biological beings and machines would be an expression of both human exceptionalism and substrate chauvinism, ideological positions which state that biological humans are special and that only biological minds matter.

“In considering whether or not we want to expand moral and legal personhood, an important question is ‘what kind of persons do we want to be?’” asked MacDonald-Glenn. “Do we emphasize the Golden Rule or do we emphasize ‘he who has the gold rules’?”

What’s more, granting AIs rights would set an important precedent. If we respect AIs as societal equals, it would go a long way toward ensuring social cohesion and upholding a sense of justice. Failure here could result in social turmoil, and even an AI backlash against humans. Given the potential for machine intelligence to surpass human abilities, that’s a prescription for disaster.

Importantly, respecting robot rights could also serve to protect other types of emerging persons, such as cyborgs, transgenic humans with foreign DNA, and humans who have had their brains copied, digitized, and uploaded to supercomputers.

It’ll be a while before we develop a machine deserving of human rights, but given what’s at stake—both for artificially intelligent robots and humans—it’s not too early to start planning ahead.