This article is part of our new women in gaming series Makers of Now.

For those who play enough games, a voice actor’s every sound and inflection is like hearing the banter of a forgotten friend, sending off firecrackers of recognition in the brain. In video games, despite the recent obsession with graphical fidelity down to each and every facial pore, players get to know their favorite characters through their intonations, their sighs, their joy: their voices. Voiceover work has become an intrinsic part of gaming, yet for an industry that already fails to credit the actors who light the fire behind these animated characters’ eyes, things may be getting even worse, thanks to the proliferation of generative AI systems.

Jennifer Hale is one of the gaming industry’s most prolific actors. She’s a multi-decade veteran in video games and beyond, and while she’s stood out in roles like Ashe from Overwatch 2 and Kronika in Mortal Kombat 11, it’s the Mass Effect series that cemented her in the minds of millions of English-speaking players worldwide. In a phone call with Gizmodo, Hale channeled her years as Commander Shepard when she said voiceover actors need to stand together against the threat of artificial intelligence.

“We’re all on the chopping block, and we have to get up, come together, and fight back, or we’re going down,” she said. “As actors, when we are hired, we have a certain shelf life in any given year, in any given decade. And when we are exposed, when our voice is out there and on the market without our consent, they’re taking a piece of our shelf life without compensating us. That’s stealing. That is theft.”

Some industry insiders are considering ways to modify voice actors’ tones and use AI voice synthesizers. Amateur modders have tested these kinds of on-the-fly AI systems in games like The Elder Scrolls V: Skyrim as a kind of proof of concept. Internal emails seen by The New York Times indicate Activision Blizzard, one of the biggest game publishers in the world, is working on tools for AI-assisted “voice cloning.” During a wave of layoffs throughout the video game industry and the tech sphere, voice actors are concerned this push for AI is a means of cutting back on their labor as well. Actors have found their syllables drifting through the internet on YouTube, Reddit, and elsewhere. Most of the time, these are fans replicating voices on a lark, but there have been much more nefarious use-cases. Tools like ElevenLabs have allowed malicious actors to deepfake celebrity voices saying racist and obscene things, while others have used voice actors’ own characters to attack and threaten them online.

Video game voice actor Cissy Jones has been in leading roles for high-grossing games like Destiny 2 as well as indie hits like Firewatch, where she played the central role as over-the-radio deuteragonist Delilah. It’s not as if synthetic, generative voice technology is entirely new, but for folks who regularly work in the video game industry, the most concerning thing has been companies’ willingness to immediately start talking about replacing them with synthetic voices.

“It takes less and less source material to create a very believable, synthetic version of any person’s voice,” Jones said.

What are voice actors’ biggest considerations with AI?

Carin Gilfry has done work in games like Warframe, the recent Saints Row reboot, and voiced the gamemaster narrator in indie RPG/deckbuilder Voice of Cards: The Beasts of Burden. Both Jones and Gilfry are officers at the National Association of Voice Actors, and they said their fellow voice actors started meeting regularly about two years ago to force their way into the conversation. Since then, they’ve talked with hundreds of actors across the world who all share similar fears. For years, people have uploaded clips of characters’ voices to sites used to recreate voices, but the voiceover actors rarely, if ever, give their consent for their voices to be used in this way. They understand that, currently, it’s mostly fans making fan content. Still, synthetically generating their voices cheapens their work. When the models get better at replicating voices, the possibilities for abuse grow.

“I’m not being paid, and voiceover is how I feed my family,” Gilfry said.

Even the most prominent voice actors in the field share little of the same limelight that major actors receive, and even less so in the video game industry. Some voiceover actors prefer it that way. They’re not necessarily looking for fame, just credit for their work. Even having voiced major characters in video games that made billions of dollars in sales, many of those lending their voices to characters still go out to casting calls and still take small roles in independent titles. Credit is how they create buzz, leading to more gigs.

In effect, their voices are the only things giving them any sense of job security. Stephanie Sheh, an established voiceover actor with credits in games like WarioWare and Devil May Cry 5, said the biggest problem with the voice industry is simply that it has yet to catch up to where the technology currently stands, let alone where things could be headed. She has also worked as a casting director on several anime dubs and TV series, so she has seen both sides of voiceover work and knows how hard it is for actors to find work. Even Hale, with her veteran status, said she still has to go out and hit the pavement for the vast majority of jobs she gets.

“I know that it may seem that [voiceover actors] are some form of celebrity, but we’re working for every job. Many of us are still auditioning for every job—it’s gig by gig,” Sheh told Gizmodo in a phone interview. “The longer it doesn’t get regulated, the longer that companies think that what they’re doing is okay.”

Are there legal protections for actors to keep their voices from getting cloned by AI?

Every kind of voiceover job is different. Some contracts involve the actors union SAG-AFTRA, some don’t, and some actors dip their toes into each. Things get even more complicated from there. Voiceover work in a commercial might pay an actor to license their voice as the spokesperson for a product in a certain timeframe. Meanwhile, video games pay on a studio hour basis or a day rate. There is usually a cap on the total hours in a studio, and union contracts mandate a four-hour session. Voiceover actors in the video game space don’t make residuals on sales. Their take-home pay could be as low as $1,000 for their time and work, whether a game makes $50,000 or $50 million.

There is no apparatus or standard for what a contract could pay for a voice that’s been synthesized from a few simple voice lines via AI. “Now when we’re dealing with AI and synthetic voices, you don’t need a session at all,” Jones said. “So you’re not even paid a day rate. You’re not paid an hourly rate. You would have to be paid in a different way. And so far, there is no structure set up for that right now.”

Often, a potentially harmful contract could use verbiage such as a “digital double” or a “synthetic version” of the actor’s voice, but most contracts don’t point out plans to use AI-synthesized voices at all. The National Association of Voice Actors has created its own riders for voiceover contracts expressly mandating that actors’ recordings aren’t used to copy their voices or likenesses. Union contracts are mandated to include no language about replicating voices, but these riders could be especially important for any non-union contract where, as Gilfry said, “there are no guardrails.”

“In part, it’s being aware and looking out for changing language because as we become hip to what the current language does, it changes,” the actor said.

Actors see AI voice clones as evidence studios privilege profits over people

For how necessary their voices have been for the rise in video game production values, little attention gets paid to the actors who drive those characters. Voiceover artists are now expected more and more to create their own followings on social media to get those higher-paying and higher-profile jobs, according to actors who spoke to Gizmodo. Sheh said she worries about what would happen if somebody decided to use a deepfake of her voice to say something offensive, leading to online blowback.

Sheh has done a lot of work in anime, describing her voice as slightly younger sounding, and her deeper range is somewhere around mid-range for female voiceover. She does many background non-playable characters (NPCs), and she and others like her make a living from consistent, minor roles, the kind that are most susceptible to AI replacement. But more than that, she’s concerned for the artists who are just getting started, actors who will struggle for any new gig when they’re all taken up by easy AI generation.

Though synthetic voices might make voicing legions of NPCs free, AI voices might cheapen the quality of games as well, actors warned. A game like Red Dead Redemption 2 required hundreds of voice actors doing over 500,000 lines of dialogue, and that background chatter was an integral part of making players feel like they inhabit the world.

“[Publishers] are making hundreds of millions of dollars in profits,” Jones said. “So is that really a place where they want to cut corners?”

Voiceover actors aren’t expecting to keep AI at bay for long

“This is not a genie that we can stuff back into the bottle,” Gilfry said. Actors are concerned the video game industry will try to cut them out of the conversation and further limit how much they can make from their craft. Speech-to-speech AI models may create opportunities for voiceover actors to play with characters and ranges they normally can’t. A double could even be licensed for otherwise impossible tasks, like reading the weather forecast continuously 24 hours a day.

AI could also automate some of the worst drudgery work, like voiceover lines for customer service calls or sexual harassment training videos. Still, those jobs are often how new actors cut their teeth and break into the industry. Actors willing to license their voice could end up making it that much harder for people to get started.

For Hale, those conversations can’t be had until the video game industry as a whole comes to terms with how it will support voiceover actors in the age of AI. Without those guardrails, “it’s too ripe for abuse,” she said. Taking that stand is even more important for those non-union actors just getting started.

“We have to step in together and it’s all hands on deck,” Hale said. “It’s our job as people who’ve been doing this a long time to reach out to the newer people and support them, empower them, and encourage them to stand together.”

Actors are already finding their voices unethically replicated online

Several big name actors have already complained their voices have been replicated using AI. Gizmodo previously talked to the voice of Doctor Eggman, Mike Pollock, who takes time out of his own schedule to beg YouTubers to remove AI-created renditions of his famed character from the Sonic series.

Beyond harassment, though, many of these fakes are made by fans who either don’t know better or don’t care. Apart from a few naive folks on YouTube using their voices, there have been a few cases of voiceover actors who found companies replicating their voices without their say-so. Voice actor Bev Standing sued TikTok in 2021, before the big AI craze, for using a digitized version of her voice across the platform without her permission. Standing eventually settled the case for an undisclosed amount, according to court filings.

Voice actor Remie Michelle Clarke said she had learned from a friend that the text-to-speech service Revoicer was promoting a synthesized version of her voice without her knowledge. Other services, including the increasingly AI-icized Microsoft Bing in Ireland, were also using an AI-generated version of her vocals. She eventually learned that, due to a contract she signed with Microsoft several years ago, her voice had been cloned, altered, and spread through Instagram ads and sites like Revoicer. Clarke said she has not received payment for any of those ads using her voice due to the contract.

Over Twitter, Clarke told Gizmodo that she got the job in 2019 from an open call on major casting site Voices.com. Only after she got the gig did they reveal the call was for Microsoft and send her the contract to sign. She admitted she signed “without fully understanding the ramifications.” While Revoicer removed her voice, renamed “Olivia,” from the site, Microsoft has not yet reached out to her despite the media attention. In response to an inquiry from Gizmodo, Microsoft declined to comment. In a series of guidelines posted last year, the company said it contractually requires customers to obtain explicit written permission from voice talent to create a synthetic voice.

Connecticut-based attorney Robert Sciglimpaglia represented Standing in her lawsuit and has since taken on multiple clients in the voiceover industry concerned about their work and AI. In Clarke’s case, he said she had signed her Microsoft contract during a time when many of the big tech companies, from Google to Amazon to Apple, were creating large voice banks, and the documents specifically mention voice cloning and then selling that voice commercially. But this was years before most professionals understood an iota of the work being done on generative AI, and a voice actor, especially one who’s desperate for gigs, wouldn’t necessarily notice fine print that allowed perpetual use.

“There’s going to be a lot of surprises for voice actors coming up,” Sciglimpaglia told Gizmodo over the phone, noting how many actors have likely signed these kinds of contracts. The attorney himself has done some voiceover work on a few short films and TV series, and he’s seen cases where “unscrupulous players” are using provisions for “automated dialogue replacement” in union contracts to argue they can clone people’s voices.

As far as he’s seen, though, the major players (talent agencies, casting sites, or studios themselves) are willing to play ball and keep AI out of their contracts. What’s going to be more concerning for people, especially those just dipping their toes into voice work, is the bevy of unscrupulous companies across the world who could be more willing to outright lie about how they intend to use actors’ voices. With the proliferation of AI overseas, it’s going to get even harder for actors to make claims against foreign companies over stealing their voices.

“With Bev Standing, they said that she was going to be doing a job for a Chinese institute, which was based in the Netherlands, for translation,” the attorney said. “The only companies making money are the ones selling voices to third parties. You can tell right away which ones are the unscrupulous ones and which ones are the legitimate ones just by the language that they use in the contract, just based on if they’re willing to negotiate.”

What are the unions and actors’ groups doing to fight against AI?

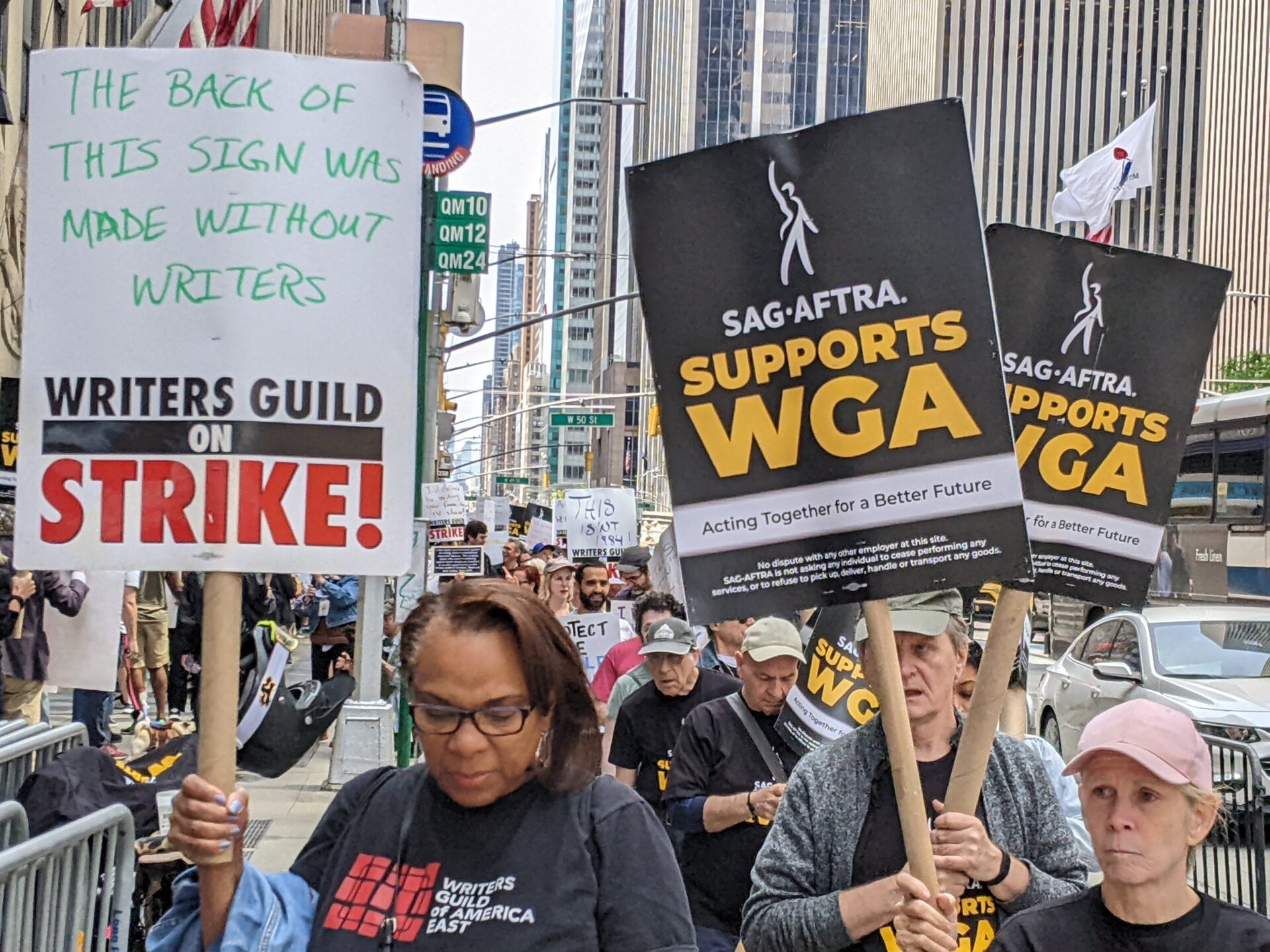

AI has put the fear of a new, digital God in the hearts of folks in many creative industries, including artists, coders, and writers. Thousands of members of the Writers Guild of America (WGA) initiated a strike earlier this month centered on pay disputes, but also tangentially related to writers’ concerns about generative AI and being replaced. The WGA is seeking to carve out new contracts that make sure AI isn’t used as a way to write or rewrite anybody’s actual work.

Want to understand why we need a strike authorization on the TV/Theatrical Contract (CBA)? These are the main issues that we’re asking for. I encourage all to read the full document. It’s not long & explains what we’re fighting for & how negotiations work https://t.co/rp9IDEHw0B pic.twitter.com/RrunHJObuA

— Linsay Rousseau (@LinsayRousseau) May 20, 2023

The actors’ union is gearing up for a fight on the same line of thinking that the WGA has presented. Early in May, SAG-AFTRA joined the WGA on the picket line. The actors union then sent a strike authorization vote to members, making room for another high-profile fight in the entertainment industry. Gizmodo reached out to the union for comment about its specific demands for companies regarding AI but did not hear back by press time. Still, the union has made its stance pretty clear, with statements saying the rights to digitally copy an actor or create a new performance with AI are “mandatory subjects of bargaining.”

That still leaves out the many voice actors who are either not signed up with a union, or who still take non-union gigs. Some groups like NAVA have taken up the torch by promoting contract language to give actors rights to the AI-generated content trained on their voices. Earlier this month, the organization revealed it had created a “fAIr Voices Coalition” that included several online voiceover casting companies including Voices.com and Voice123. The CEOs have pledged to work out mutually agreed, fair rates for any company that wants to use voice files for AI. Likewise, the Vocal Variants group spinning out of NAVA says it’s devising new contracts as well as lobbying for federal regulations that might protect voiceover actors.

NAVA is pleased to announce the formation of the fAIr Voices Coalition. Over the past two weeks president, Tim Friedlander, and Vice-President, Carin Gilfry, have talked with the CEOS of the 5 major voiceover online casting companies about AI and synthetic voice production. pic.twitter.com/ovaXg2mbUt

— NAVA (@NAVAVOICES) May 10, 2023

The AI-voiced future is just over the horizon. Voices.com caused a stir in the voiceover community when it changed its terms of service to imply it could upload auditions to train AI, though it quickly claimed that wasn’t the intent and again modified its TOS. The site bought Voices.ai in April this year, a site that sells enterprise-focused APIs for AI voices. Voices.com CEO David Ciccarelli said in a release “there are many applications that don’t require artistic interpretation.”

While it’s easy to see the company’s argument, these kinds of lines from major corporations don’t sit well with the actors whose jobs are on the line. And in the end, the industry moving in the direction of AI without first engaging with actors has caused a widening fracture within the voiceover industry.

“In the past, it was very logical,” Sheh said. “You could say ‘I’m giving out these rights because it’s just the nature of production. We’re all gonna assume that everyone is a good player, and no one’s trying to cheat us or try to take advantage of us.’ Now, I think it’s a very different situation.”

And even if, in some utopian future, AI becomes another tool in the chest for voiceover work, it cannot replace the real, human expression that voiceover acting is. The actors Gizmodo spoke to all recognized those moments of unscripted inspiration. AI, they said, can never truly replicate those.

“It’s a piece of that process that you can’t name,” Hale said. “You just know it when it happens. It leaves you speechless. It leaves you laughing uncontrollably. It surprises you. It actually pings the heart center of your body as a real human experience.”