Inside Silicon Valley, the consensus is that artificial intelligence will be the most important technology for the next decade. Okay, but what the hell does that mean for the rest of us?

In an attempt to build transparency and accountability into the next generation of world-changing technology, American lawmakers introduced a bill on Wednesday that would require large companies to audit machine learning systems for bias.

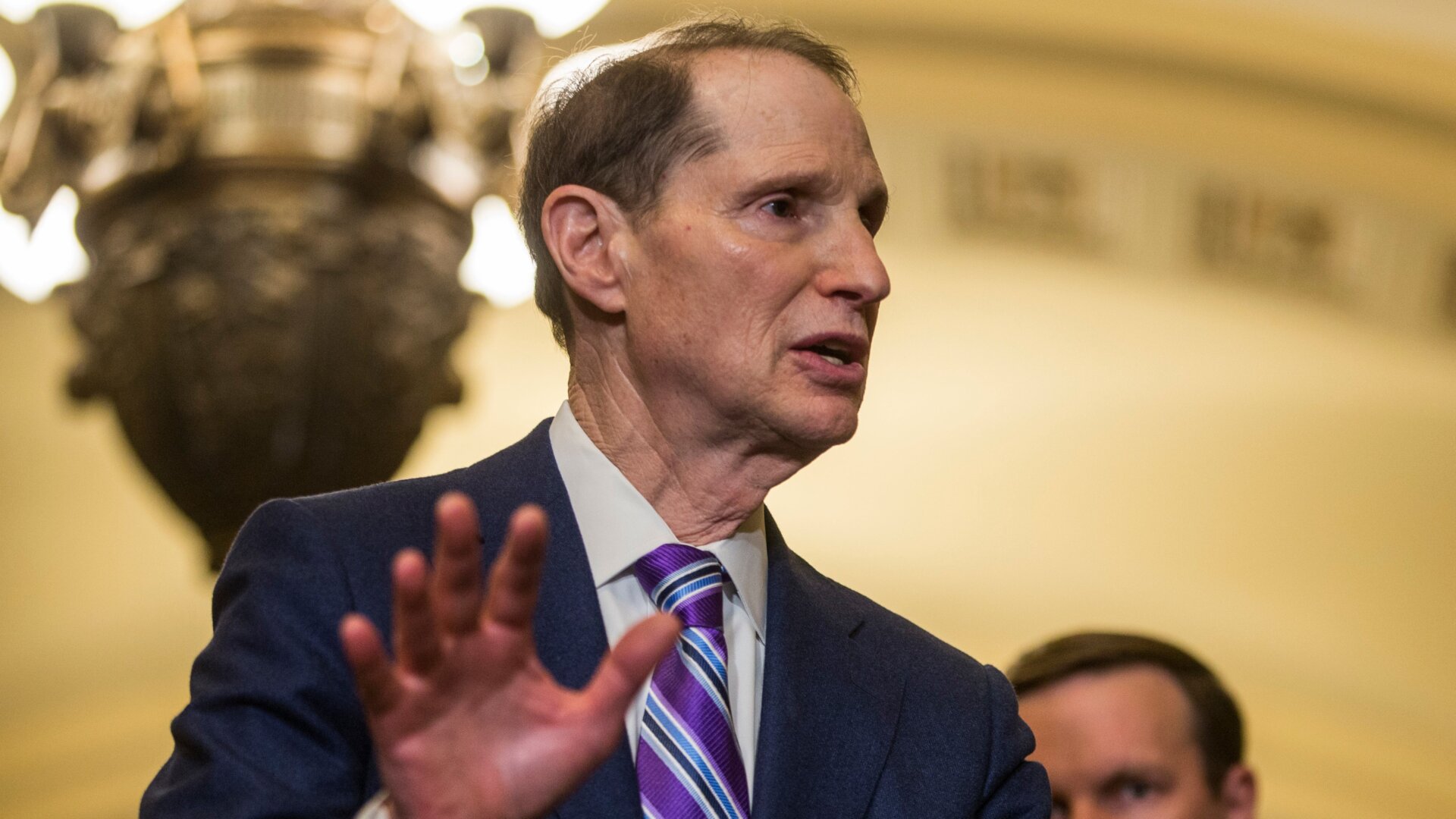

Democratic Senators Ron Wyden and Cory Booker introduced the Algorithmic Accountability Act on Wednesday. Democratic Congresswoman Yvette Clarke introduced an equivalent bill in the House of Representatives.

Machine learning and artificial intelligence already power a deceptively wide sweep of crucial processes and tools, including facial recognition, self-driving cars, ad targeting, customer service, content moderation, policing, hiring, and even war. It’s a huge list, and sometimes it’s fun to sit back and marvel at how different all those uses are.

Exactly how these systems make their decisions, and whether those decisions are fair, is often opaque or unknowable. That problem has led lawmakers to this attempt to pry open the “black box.”

The new bill would task the Federal Trade Commission with crafting regulations that require companies to conduct “impact assessments” of automated decision systems, evaluating both the systems and their training data “for impacts on accuracy, fairness, bias, discrimination, privacy and security.”

The bill would apply to companies making over $50 million per year or holding the data of over one million individuals.

“Computers are increasingly involved in so many of the key decisions Americans make with respect to their daily lives—whether somebody can buy a home, get a job or even go to jail,” Wyden said in an interview with The Associated Press.

This is not a hypothetical scenario. Consider what happened at Amazon in 2018. The American tech giant, a world leader in AI, used the technology to help decide which applicants it should interview for jobs. After two years of design, the company found that any female applicant was automatically blasted to the back of the list.

The AI Now Institute’s Kate Crawford discussed the incident in a recent interview with Kara Swisher.

“It tells us two things,” Crawford said. “One, it’s actually much harder to automate these tools than you might imagine, because Amazon’s got some pretty great engineers. It’s not like they don’t know what they’re doing. It also tells you something about the pile of résumés that they had. What were they training it on? What was the training data? Surprise, surprise: a lot of white dudes in basically their entire engineering pool.”

Within the last month, Facebook’s discriminatory ad practices came into the spotlight, and the company now also faces charges from the Department of Housing and Urban Development for discrimination under the Fair Housing Act.

“By requiring large companies to not turn a blind eye towards unintended impacts of their automated systems, the Algorithmic Accountability Act ensures 21st Century technologies are tools of empowerment, rather than marginalization, while also bolstering the security and privacy of all consumers,” Clarke said.