On Tuesday, the city of Cambridge, Massachusetts, moved one step closer to prohibiting its local government from using facial recognition. Three other cities in the country have already instituted such bans over concerns that the technology is biased and violates basic human rights.

In December of last year, the Cambridge City Council passed the Surveillance Technology Ordinance, which requires the council's approval before the city acquires or deploys certain surveillance technologies, including facial recognition software. On Tuesday, the council unanimously voted to consider a proposed amendment, which will next go before the Public Safety Committee, Mayor Marc McGovern and Councillor Sumbul Siddiqui both confirmed to Gizmodo in an email. The vote brings the city one step closer to banning its use of the tech altogether.

McGovern's office said that it's "unclear" when the next steps will take place and that Councillor Craig A. Kelley, a sponsor of the amendment, has to schedule the Public Safety Committee hearing. After that hearing, the amendment would go before an ordinance committee and then to the full City Council for adoption. McGovern couldn't offer a specific timeline but said that, at the latest, "it will be done by the end of the year."

The amendment, sponsored by Cambridge Mayor Marc McGovern and two city councilmembers, argues that, given recent reports, it's evident this tech can discriminate against women and people of color. It also argues that facial recognition technology violates a person's civil rights and civil liberties.

“The use of face recognition technology can have a chilling effect on the exercise of constitutionally protected free speech, with the technology being used in China to target ethnic minorities, and in the United States, it was used by police agencies in Baltimore, Maryland, to target activists in the aftermath of Freddie Gray’s death,” the amendment states.

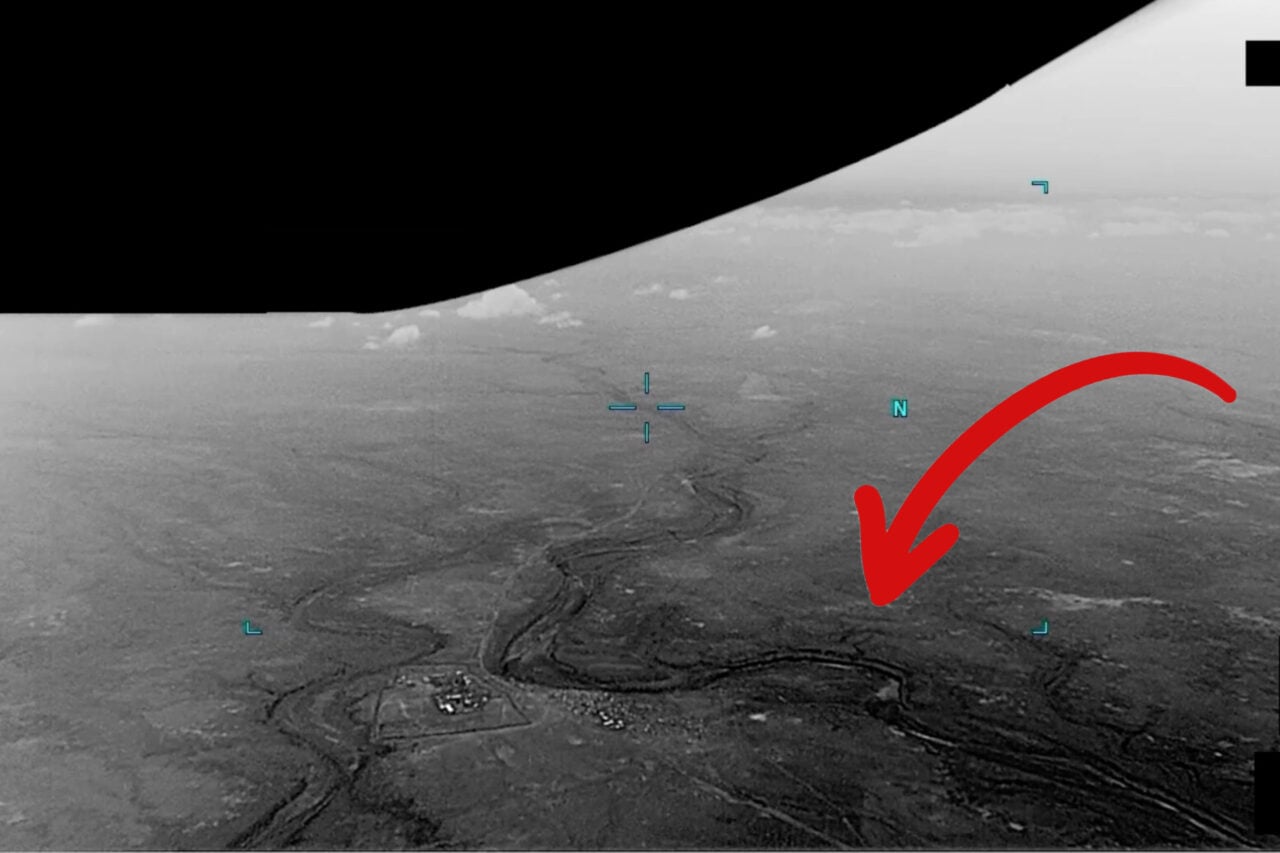

It's true that the Chinese government is using a massive facial recognition system to track Uighurs, a largely Muslim ethnic minority. According to documents and interviews obtained by the New York Times, government officials racially profiled members of this group by tracking them based on their physical appearance, with the system in one city screening residents 500,000 times in a single month to determine whether they were Uighur. According to the report, the technology has been deployed across the country, and interest in it has grown over the past two years.

It's also true that when activists took to the streets after Freddie Gray's death in April of 2015, cops used facial recognition tech to identify and arrest certain individuals participating in the protests. In both China and Baltimore, this technology wasn't merely used as a tool for surveillance, which is in its own right a violation of an individual's privacy. It was also used as a tool of oppression, targeting, tracking, and punishing populations that were vulnerable or were exercising their right to speak out against government misconduct.

Cambridge would join San Francisco, Oakland, and Somerville in banning this specific type of surveillance tech. As the flaws and biases of this tech are further exposed, and as digital rights and civil liberties advocates issue grave warnings about its widespread deployment, we're likely to see more lawmakers push to strip cities of their power to track their residents.

“We’re at a pivotal moment in human history,” Evan Greer, deputy director of Fight for the Future, told Gizmodo in an email. “Invasive surveillance technology like facial recognition is spreading extremely quickly. It’s being marketed as ‘convenient’ and for ‘public safety,’ but it’s putting us on a path to a totalitarian police state. Backlash to the spread of face surveillance is growing. But if we don’t act now, it will soon become ubiquitous, and then it could be too late.”

Correction: A previous version of this article incorrectly stated that Cambridge lawmakers had passed the proposal into law. The city council instead unanimously voted to consider the proposal, which will now go before the Public Safety Committee. We regret the error.