Devices like laser-guided bombs and nonlethal weapons have the potential to reduce civilian casualties and wanton suffering. But as these new technologies emerge, are humans actually becoming more ethical about waging war, or is killing just becoming easier?

The notion that technological advances can improve battlefield conditions may seem counterintuitive, considering that historically weapons have had the tendency to make wars more deadly for all involved. Since the time of Napoleon, our capacity to kill each other has increased by an order of magnitude thanks to the introduction of quick-firing guns, grenades, bomber aircraft, and chemical weapons, to name just a few.

Not to mention modern wars have become a scourge for civilian populations. Before the 20th century, noncombatants were able to steer clear of danger as the battlefields raged off in the distance. Since the First World War, however, serious conflicts (i.e. those involving roughly symmetric opposing forces) have produced civilian casualty levels that often match those incurred by the military.

An MQ-9 Reaper landing in Afghanistan (USAF/Public domain)

But with the advent of directed drone strikes, nonlethal weapons, precision bombing—and most recently the advancement of military robotics and autonomous killing machines—some argue that military forces have the potential to become more discriminate in their killing, not only minimizing noncombatant casualties but also increasingly putting soldiers out of harm’s way.

The potential may be there, but we haven’t seen a change yet. The 50/50 ratio of civilian and military casualties has largely remained intact over the past century—including the current conflict in Syria—despite the introduction of new military technologies like high-precision rifles, helicopters, and jet aircraft.

With these high-tech weapons comes the worry that it’s becoming too convenient to kill. A major criticism of drone strikes is that they kill at a distance without the public even knowing that innocent people are being murdered, or the soldiers issuing the kill commands ever seeing the outcome directly. If we humans can kill other humans with the push of a button hundreds of miles away, is that really making war more humane?

Some argue that there’s value in keeping conflicts mean and unsanitized. As U.S. Civil War General Robert E. Lee once said, “It is well that war is so terrible, otherwise we should grow too fond of it.” But that’s oversimplifying the issue. As we head deeper into the 21st Century, it’s painfully obvious that wars are going to remain a fixture of our civilization for some time to come. It’s incumbent upon us to make them less needlessly callous and sweeping, especially for those on the sidelines. The question is how, and whether our technologies can be of any help.

An Idea Rooted in History

The idea that we might be able to make war more humane at all seems oxymoronic; war is, by definition, inhumane. Yet it’s an effort that dates back centuries. The difference is that throughout history we’ve primarily addressed it by banning excessively brutal weapons and tactics. The idea of using new sophisticated technologies themselves to alleviate the horrors of war has only more recently emerged.

The Gatling Gun, a weapon so horrible that its inventor thought it might make wars more bearable. (Image: Pearson Scott Foresman/Public domain)

Well, that’s not entirely true. Back in 1857, the inventor of the first modern machine gun, Richard Jordan Gatling, naively thought that his weapon was so horrible that it might actually mitigate the damage of wars. Gatling wrote that:

It occurred to me that if I could invent a machine—a gun—which could by its rapidity of fire, enable one man to do as much battle duty as a hundred, that it would, to a large extent supersede the necessity of large armies, and consequently, exposure to battle and disease [would] be greatly diminished.

Gatling’s theory was shown to be catastrophically wrong during the First World War.

At the height of the Industrial Revolution, it never occurred to belligerents that weapons themselves could be made gentler and more humane. Instead, they looked to pieces of paper—in the form of treaties and protocols—to prohibit the use of the most “inhumane” devices and tactics. It was the Romantic Era, after all, a time when chivalry and gentlemanly conduct ruled on the battlefield.

Keeping war civilized: The Second Hague Conference in 1907 (Credit: Unknown/CC BY-SA 3.0)

In the latter half of the 19th Century, the Geneva Convention was set up to regulate the conduct of war and the treatment of those “not taking part in hostilities and those who are no longer doing so.” A more rigorous set of protocols, the Hague Conventions of 1899 and 1907, established humanitarian codes of conduct among the combatants themselves. Owing to the advancing sciences and the subsequent onset of new killing technologies, the attendees of the first Hague conference compiled a list of weapons and tactics deemed too awful to use during times of war, including the use of poison gas, killing enemy combatants who have surrendered, attacking or bombing undefended towns or habitations, releasing explosives from balloons or “by other new methods of a similar nature,” and the use of “soft nosed” and “cross-tipped” bullets which “expand or flatten easily in the human body.”

The Hague Conventions were a precursor to the Geneva Protocol, a treaty prohibiting the use of chemical and biological weapons in international armed conflicts. Since the treaty’s introduction in 1925, the protocol has been interpreted to cover the use of non-lethal agents such as tear gas and herbicides such as Agent Orange. Recently, a report funded by the Greenwall Foundation concluded that the deployment and use of biologically enhanced soldiers could be construed as a war crime under the bounds of the Geneva Protocol.

“In both love and war, there are rules,” noted military strategist Peter W. Singer to Gizmodo. “People don’t always respect them, but there are norms of conduct that the players are supposed to follow. They range from respecting White flags of surrender to torture. Again, they aren’t always respected, but the norms let us know when someone has crossed the line.”

The Rules of War

The aftermath of a barrel bomb attack in Aleppo, Syria, February 6, 2014 (Image credit: Freedom House/Flickr/CC BY 2.0)

One way to define “humane” in the context of war is to document the extent to which technological change helps responsible militaries reduce superfluous suffering associated with battle, says Michael C. Horowitz, an associate professor of political science at the University of Pennsylvania. He is the author of The Diffusion of Military Power: Causes and Consequences for International Politics, and an expert on military innovation, the future of war, and forecasting.

In the laws and codes of war, unnecessary suffering generally means two different things: one, killing or harming innocent civilians — intentionally or otherwise — and two, inflicting unnecessary or excessive injuries against the combatants themselves.

These aspects of war “are not objectively definable,” says Paul Scharre, a Senior Fellow and Director of the 20YY Warfare Initiative at the Center for a New American Security. “How many civilian casualties are too many? And why are some methods of killing worse than others? Throughout history, societies have struggled to define what humane—in the context of war—truly means.”

Nevertheless, new military technologies may afford us the ability to reduce innocent deaths and excessive violence. Scharre, who has led U.S. Department of Defense efforts to establish policies on intelligence, surveillance, and reconnaissance programs, says there are laws meant to protect civilian populations against wanton environmental destruction, such as salting the earth, poisoning wells, deforestation, and so on.

Protections against civilian deaths, whether from accidents or from deliberate killings (such as the use of barrel bombs in the Syrian Civil War), are more difficult to enforce, says Scharre.

As for military combatants, it’s obviously acceptable to kill or incapacitate them, but not in ways that are needlessly gruesome or painful. Scharre points to the contentious use of sawback bayonets (used in WWI), chemical weapons, poison gas, expanding bullets, and recently, blinding lasers. Now, we’re seeing increasingly effective non-lethal weapons.

Non-lethal Weapons

Non-lethal weapons have emerged over the years but they have yet to attract serious attention from the military, which is surprising given their potential to reduce suffering on a mass scale.

The Active Denial System 2 can project a man-sized beam of heat-emitting millimeter waves. (LCpl Alejandro Bedoya/DVIDS)

That said, the U.S. Department of Defense has a non-lethal weapons program, which has fostered the development of such things as acoustic hailing devices (which produce extremely loud and annoying warning tones), the M-84 Flash Bang Grenade (which does exactly what the name implies), and tasers. The DoD is also working on an Active Denial System, a pain gun it describes as a “non-lethal, counter-personnel capability that creates a heating sensation, quickly repelling potential adversaries with minimal risk of injury.”

Scharre says non-lethal weapons are appealing for a couple of reasons. First, soldiers often find themselves in situations where they have no choice but to point a gun at someone—but it doesn’t always have to be a deadly weapon. Second, there are often strategic reasons for wanting to incapacitate, rather than kill, an adversary.

The flip side of non-lethal weapons is the level of suffering they cause their targets.

For instance, blinding lasers (directed-energy weapons that blind and disorient the enemy with intense directed radiation) are prohibited. Technically speaking, they are considered non-lethal weapons in that they do not kill an adversary, but they do cause considerable harm in the form of blindness.

Blinding laser. Image: ArmorCorp

The International Committee of the Red Cross (ICRC) supports the prohibition on blinding lasers, stating that:

Blinding lasers would not actually save lives as they are intended to be used in addition to other weapons. They might even have the effect of increasing mortality rates as blinded opponents would not be able to defend themselves and thus be easily targeted by other weapons. As it is unlikely that an attacker would be able to assess at a distance whether an opponent has been rendered out of action by blinding, he would also use his other weapons. The result would therefore be just as many deaths and many more blind, thus increasing the suffering which results from battle.

Unlike other injuries, blinding results in very severe disability and near total dependence on others. Because sight provides us with some 80-90% of our sensory stimulation, blinding renders a person virtually unable to work or to function independently. This usually leads to a dramatic loss of self-esteem and severe psychological depression. Blinding is much more debilitating than most battlefield injuries.

But critics of the ban say it’s a misguided and even dangerous idea, worrying that the military is needlessly constraining its capabilities.

Scharre says that the current law against blinding lasers seems to imply that the deliberate blinding of a combatant is a superfluous act, and that the individual is harmed more than necessary to get the job done. “But is it actually less humane to kill people than blind them?” he asks. “And does this law essentially state that it’s better to be dead than blind?”

It’s a worthwhile question, says Scharre, to ask which option is more humane.

Tear gas being used at Ferguson (Credit: Lovesofbread/CC AS-4.0)

At the same time, it’s important to consider the ways in which non-lethal weapons might lower the barriers to using force. As more non-lethal weapons come into use, it’s conceivable that they’ll be considered for an ever-growing number of contexts.

Instead of being limited to enemy engagements and riots, these technologies could be used to suppress dissent on home soil, such as dispersing protests or other mass gatherings of individuals. Governments, and by proxy militaries and police forces, can thus afford to be more sweeping and indiscriminate in their crowd-control measures, which could undermine democratic principles such as the freedom of expression and freedom of assembly.

What’s more, these sorts of technologies are creating an asymmetry in the opportunity to use them; the people being attacked have no equivalent technology to defend themselves. The same can be said for the application of lethal weapons—even if they are more precise.

A More Accurate Way to Kill?

When it comes to assessing civilian casualty rates, there’s still considerable debate as to whether or not drone strikes are making war more humane. As time passes, however, it’s becoming increasingly obvious that the use of unmanned aerial vehicles (UAVs) produces serious downstream consequences.

Since 2008, the U.S. government has launched over 1,500 drone strikes. Proponents of UAVs, such as CIA Director John Brennan and Senator Dianne Feinstein, claim they result in fewer civilian casualties than other methods. But as Abigail Hall points out in the Independent, accurate numbers are hard to come by. Part of the problem is that definitions of “militant” and “civilian” are vague, contributing to inaccurate and biased casualty figures. It’s also unclear whether fighter pilots are more effective at reducing civilian casualties.

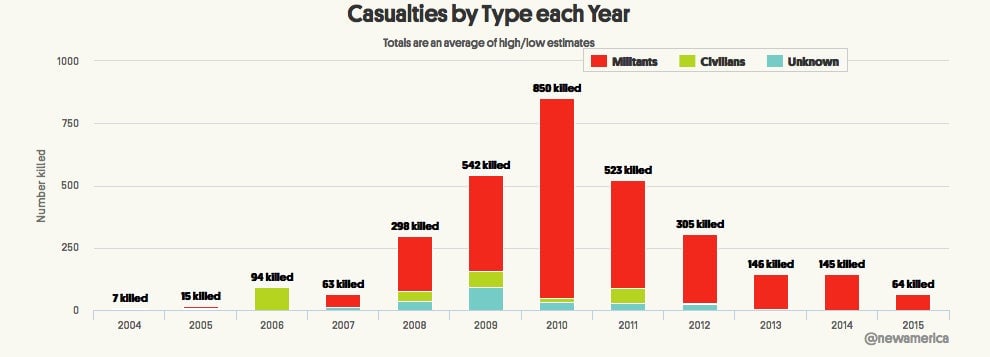

All Pakistan drone strikes (Credit: New America Foundation)

But according to Michael Horowitz, modern guided weapons have become significantly more precise over the past several decades, dramatically reducing civilian casualties when used correctly. Evidence collected by the New America Foundation, a non-partisan think tank, affirms this claim, particularly as it pertains to actions in Pakistan. During the course of the Obama Administration, somewhere between 129 and 161 civilians were killed in Pakistan, while 1,646 to 2,680 militants were killed. But since 2012, no civilians have been killed at all in Pakistan (according to NAF data).

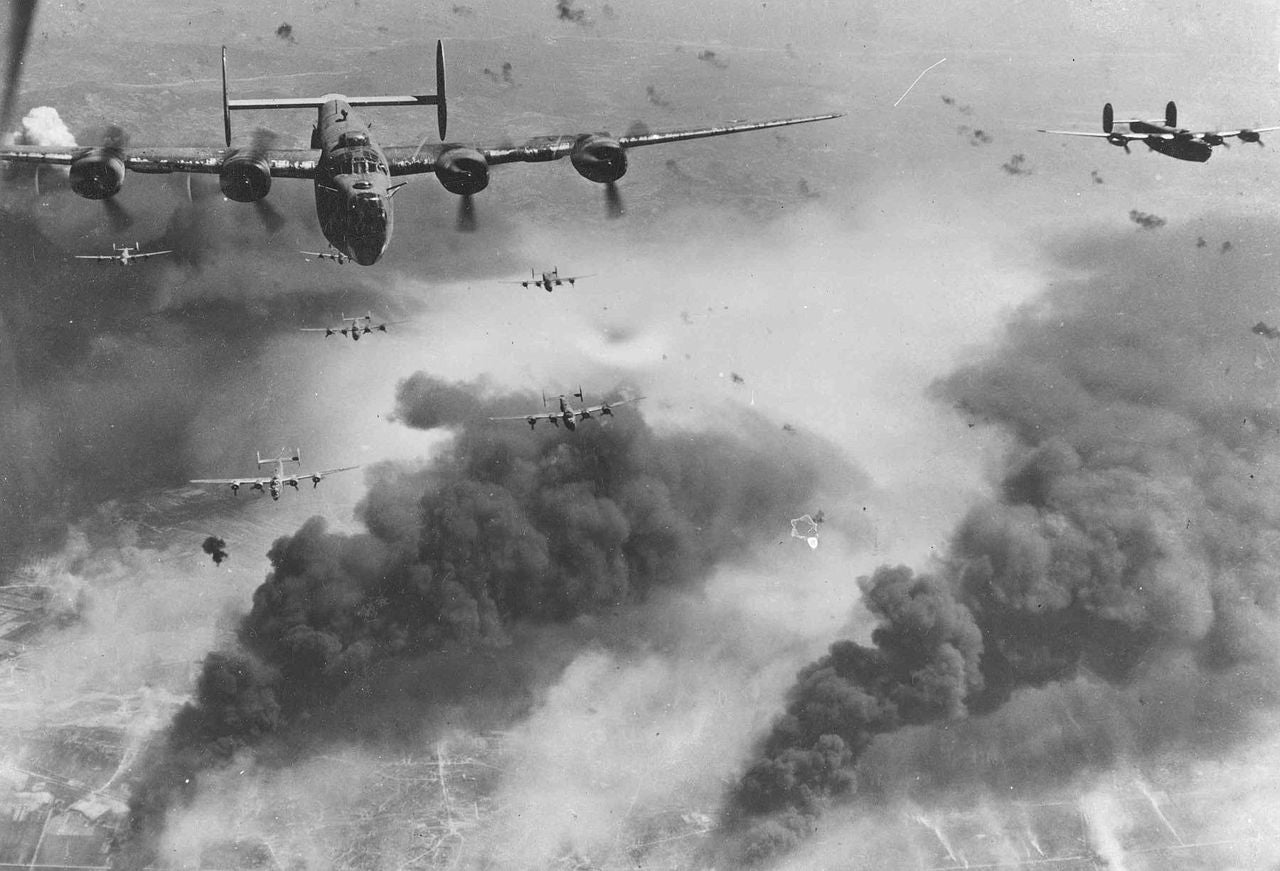

Seems Excessive: 15th Air Force B-24s leave Ploesti, Romania, after one of the long series of attacks against the number one oil target in Europe (Credit National Museum/Public domain).

Undoubtedly, precision bombing has come a long way in the past 75 years. Guidance systems were so primitive in the 1940s that it took around 9,000 bombs to destroy a single building. Entire communities were leveled in the effort to destroy military targets (though it’s important to remember that Allied forces also deliberately targeted civilians during WWII). Today we have laser- and GPS-guided missiles, but back during the Second World War, field tests demonstrated that less than 5% of rockets fired at a tank actually hit the target, and that was in a test where the tank was painted white and stationary.

“Some modern precision munitions are accurate to within five feet, meaning fewer bombs are dropped and fewer civilians die,” says Horowitz. “Most militaries actually prefer using precision munitions simply because [they] help militaries accomplish their missions with higher confidence and lower costs.”

Horowitz says that countries can use even the most precise weapons in ways that generate more suffering than necessary. The recent U.S. bombing of a hospital in Afghanistan certainly comes to mind—an act which the charity Doctors Without Borders says may be a violation of the Geneva Conventions. Regrettably, mistakes and recklessness are still part of the equation, as witnessed by the 2014 U.S. drone strike that killed 12 people on their way to a wedding.

“But in general, the more precise the weapon, the lower the risk of civilian casualties,” says Horowitz.

Singer claims that people often misconstrue the capabilities of new weapons in two ways when it comes to civilian casualties.

“First they expect perfection, as if there could be such a thing in war,” he says. “Mistakes happen all the time even with precise weapons, with the causes ranging from tech failure to bad information on the target’s identity, to maybe it wasn’t a mistake. And second, we’ve changed our level of our expectations. Actions like the firebombing of Tokyo were accepted back in 1940s, while single digit casualty counts are viewed by many as unacceptable today, rightly so according to most ethicists.”

When it comes to drone strikes, Scharre says that the objections are not about the technology of drones, but America’s counterterrorism policy.

“What some people are objecting to is not the drone, but the fact that the U.S. is killing terrorists on what doesn’t seem like a traditional battlefield, or in a war as we typically recognize it,” says Scharre. “There’s no question that drones are more precise than other alternative weapons, whether they are cruise missiles, aircraft, or artillery shells.”

Scharre says that high-precision weapons like drones allow the military to choose the time and manner of attack—typically when targets are alone and when there aren’t civilians around.

But herein lies another problem, and another argument against the ‘humaneness’ of UAVs: drone strikes can be incredibly alienating and psychologically torturous. Writing for the Globe and Mail, Taylor Owen of Columbia University’s Tow Center for Digital Journalism says that drones don’t just kill—their psychological effects are creating enemies:

Imagine that you are living somewhere in Pakistan, Yemen, or Gaza where the United States and its allies suspects a terrorist presence. Day and night, you hear a constant buzzing in the sky. Like a lawnmower. You know that this flying robot is watching everything you do. You can always hear it. Sometimes, it fires missiles into your village. You are told the robot is targeting extremists, but its missiles have killed family, friends, and neighbours. So, your behaviour changes: you stop going out, you stop congregating in public, and you likely start hating the country that controls the flying robot. And you probably start to sympathize a bit more with the people these robots, called drones, are monitoring.

Placing people in a position of perpetual terror has its consequences.

Out of harm’s way: UAV monitoring and control (Gerald Nino, CBP, U.S. Dept. of Homeland Security – CBP/Public domain)

Lastly, the advent of drone strikes and guided missiles is, like non-lethal weapons, setting the stage for increased asymmetry among combatants. A drone operator is in a much safer position than, say, a target who’s trying to plant a remote-controlled improvised explosive device (IED). This dramatically diminished exposure to risk is leading some to argue that the willingness to use such force is significantly heightened. It’s a concern that’s set to become even stormier with the all-but-inevitable introduction of fully autonomous weapons and the removal of humans from the killing loop.

Automating Death

This past July, an open letter was presented at an Artificial Intelligence conference in Argentina calling for a ban on autonomous weapons, namely weapons systems that, once activated, can select and engage targets on their own and without further intervention. The letter has been signed by nearly 14,000 prominent thinkers and leading robotics researchers.

Supporters of the ban argue that these weapons have the potential to lower the barrier for war, make mistakes, and result in less discriminate killing. There’s also concern about a catastrophic accident, the lack of human control, and a sudden escalation of violence, particularly if autonomous weapons are set against each other.

You have 30 seconds to comply: The Gladiator Tactical Unmanned Ground Vehicle (U.S. Marine Corps)

On the other side, critics of the open letter were quick to point out that these armed robots are likely to perform better than armed humans in combat, resulting in fewer casualties on both sides. Writing in IEEE Spectrum, Evan Ackerman says that what we really need is a way to make armed robots ethical, because their existence is all but guaranteed:

In fact, the most significant assumption that this letter makes is that armed autonomous robots are inherently more likely to cause unintended destruction and death than armed autonomous humans are. This may or may not be the case right now, and either way, I genuinely believe that it won’t be the case in the future, perhaps the very near future. I think that it will be possible for robots to be as good (or better) at identifying hostile enemy combatants as humans, since there are rules that can be followed (called Rules of Engagement, for an example see page 27 of this) to determine whether or not using force is justified. For example, does your target have a weapon? Is that weapon pointed at you? Has the weapon been fired? Have you been hit? These are all things that a robot can determine using any number of sensors that currently exist.

Scharre agrees that advanced autonomous weapons will eventually be sophisticated enough to distinguish a person holding a rifle from, say, a person holding a rake. “The challenge will be for these systems to distinguish a civilian from a combatant, which isn’t an immediately easy distinction to make,” he says. “Imagine a scenario in which ordinary folks are just defending their homes.”

Meanwhile, militaries have no desire to deploy weapon systems they cannot control — not just for humanitarian reasons, but because those systems are less likely to accomplish their missions, says Horowitz. He suspects that militaries—at least the responsible ones—will be extremely conservative about developing and deploying autonomous weapons precisely for that reason.

Another concern is that less responsible militaries or non-state militant groups might have incentives to develop less sophisticated and less reliable versions of dangerous autonomous weapons.

“One line of thought suggests that autonomous weapon systems, if programmed precisely, could reduce suffering in war in situations where human decision making, due to stress, fatigue, or other issues, can lead to suffering,” says Horowitz. “Autonomous weapon systems could also raise several issues of their own, however, from the risk of accidents to unpredictable behavior resulting from the interaction of complex systems, to misuse by irresponsible actors.”

That said, while very simple autonomous weapon systems are possible today (such as the Samsung SGR-1 robot sentries used by South Korea along its border with North Korea), more powerful autonomous weapon systems involving complex decision making and mobility are a long way off.

The Transparent Future

When considering the future of warfare, you have to take into account the impact of information technology and heightened surveillance. The unprecedented availability of information in the 21st century is making virtually all aspects of life more visible. Some people say this increased transparency has the potential to make us more accountable, and thus more humane, in the world.

You are being watched, soldier: 344th Psychological Operations Company soldier interacts with children in Kandahar province (Credit: U.S. Army photo by Cpl. Robert Thaler/Public domain)

Scharre points out that soldiers on the ground are already being affected by this trend. Through their use of social networking, or via surveillance, they’re becoming increasingly concerned about what their superiors are seeing. The scandal surrounding Abu Ghraib is a potent example. Increased transparency has also led to calls for personal cameras on police officers. Imagine the effect that these cameras, or other surveillance technologies, would have on soldiers in combat situations.

“In the past we didn’t have sensors everywhere, and this added level of transparency is bound to have an effect, one that will produce a tendency to make people do less horrible things, and make them more careful,” says Scharre. “What does covert action look like in the age of transparency? What does engagement with local populations look like? And what happens to soldier action when everything is being recorded on the ground? Personally, I don’t think our military is fully prepared for that.”

New technologies are affording militaries the opportunity to create a more humane battlefield and to exert less harm onto civilian populations. But all the potential consequences must be considered. History shows our potential to misuse the power of advanced technologies, even with laws in place to prevent, or at least mitigate, excessive abuse. It’s not a perfect system, but it’s the best we have.

Follow the author at @dvorsky.

Top illustration by Tara Jacoby