Someday, in the dim fart-filled chamber you will call home, you’ll be strapped into a helmet, suckling mush through a straw and consuming content while social media and marketing companies hoover up your thought data.

This is within the realm of near possibility, a paper from Imperial College London suggests. I exaggerate, but just a little.

In a forward-looking review of the state of brain-computer interface (BCI) technology, researchers warn of grim futures in which companies like Facebook and Google mine your emotions for marketing purposes, video games pipe into your mind, the machine assigns you a new identity, and widespread brain tech addiction rivals the opioid epidemic. Microsoft and Neuralink are already pushing it. (Facebook tried, but has put its efforts on hold.) It’s the stuff of William Gibson’s dreams, but significantly less cool and far more depressing.

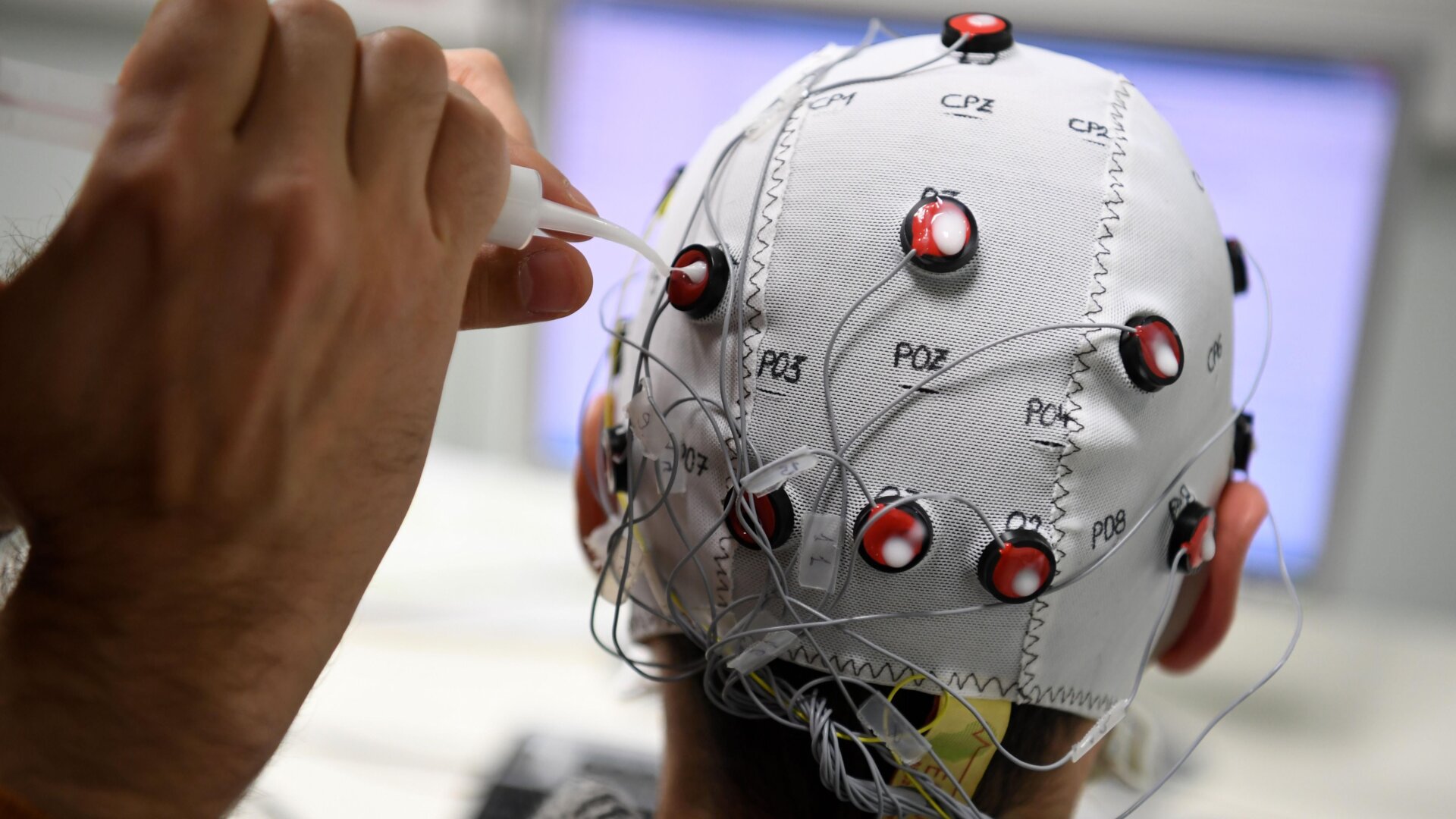

First, researchers list good reasons this technology should be more widely available. BCI enables people with limited motor function to control prosthetics and wheelchairs, select letters on a screen, and turn on the lights and AC through a smart home connection. They also suggest that it could detect warehouse worker fatigue in order to prevent injuries and monitor students for information overload in the classroom. (Side note: Amazon strapping nodes to workers’ heads and sending your kid to school in a mind-reading helmet sounds pretty unappealing.)

It’s probably best that we leave it there because holy shit, it can even take over your mind:

“In addition, owing to the lack of proprioception, the human brain is unable to acknowledge the influence of an external device on itself, which could potentially compromise autonomy and self-agency,” the review reads. “Because of this, users may be liable to mistakenly perceive ownership over behavioral outputs that are generated by the BCI, as well as incorrectly attribute causation to it.”

Such a machine parasite, they say, could change your “character traits” and mold a new “personal identity.”

Fox News has already been on that for decades, in its own way, so this could be kinda cool if it artificially gives you super-memory and enhanced intelligence (also possible). Researchers temper that, though, with the warning that access to superpowers could addict us on a level “similar to the opioid crisis” and compound inequality. Study co-author Rylie Green said in a statement (published in a press release titled “Bleak Cyborg Future from Brain-Computer Interfaces if We’re Not Careful”) that the tech can already consume users who rely on it for practical assistance. “For some of these patients, these devices become such an integrated part of themselves that they refuse to have them removed at the end of the clinical trial,” Green said.

The technology, they say, will need to be more cost- and energy-efficient before widespread commercial release, but it’s coming. We should soon expect “neuro-entertainment,” courtesy of several companies already exploring the idea of hooking gamers directly up to the game, something they note Valve co-founder Gabe Newell predicted would soon be a standard feature in an interview with PC Gamer. “I think that it’s an extinction-level event for every entertainment form that’s not thinking about this,” he said.

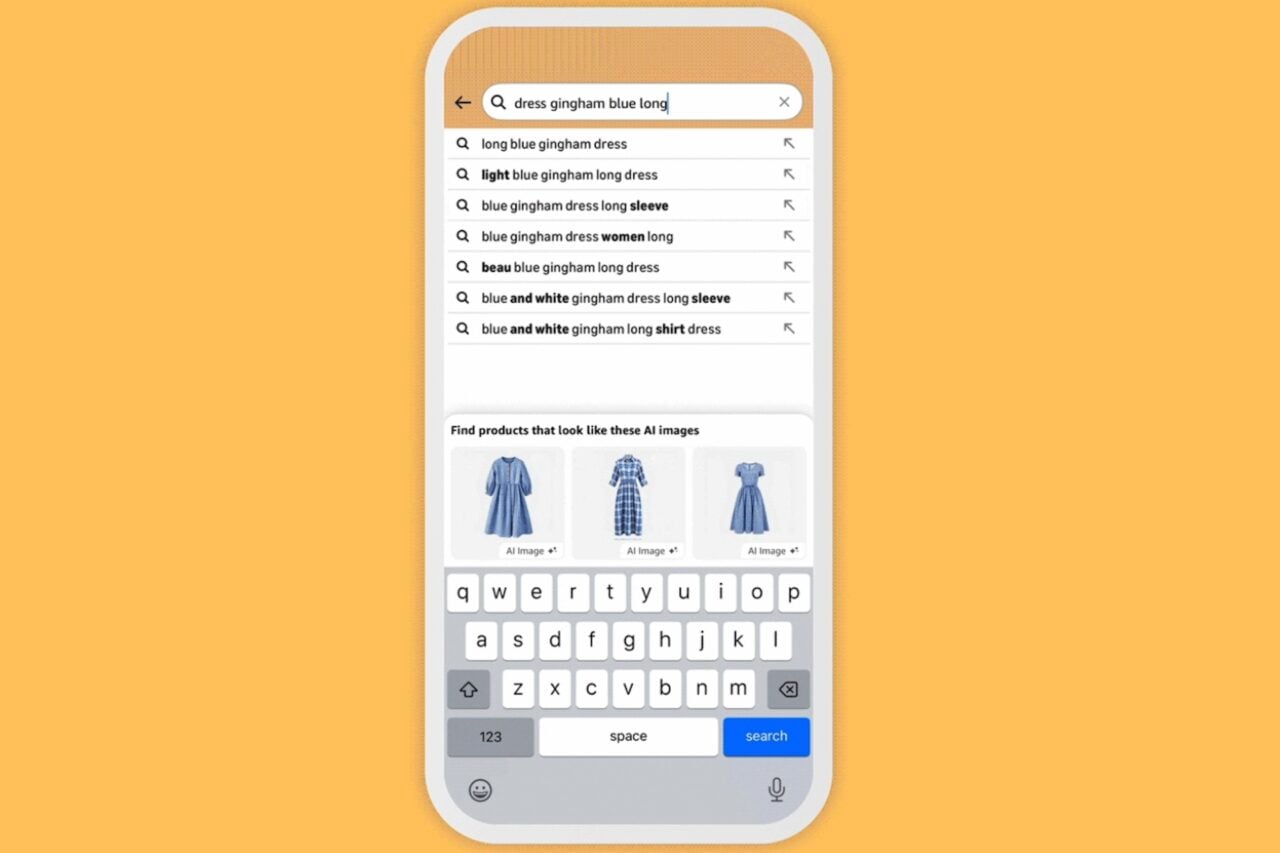

Needless to say, “neural-marketing” that culls emotional response and unconscious decision-making presents a great opportunity for corporate overlords to exploit mass biometric data harvesting.

Spooky shit! The time to legislate is now, the researchers write, noting that previous researchers have suggested legally classifying “personal neural data” like organs, so that it can’t be harvested and sold. Unfortunately, the law usually takes years or decades to catch up to technology, and Mark Zuckerberg is an ask-forgiveness-not-beg-permission guy. The experiment has left the lab.