Famed linguist and cognitive scientist Noam Chomsky has suddenly found himself embroiled in a debate about the ongoing quest to develop AI. It all started last year at MIT’s “Brains, Minds and Machines” symposium, where he criticized AI theorists for adopting an approach more akin to behaviorism. His talk was later countered by Google’s Peter Norvig, among others. Now, in a lengthy interview with The Atlantic, Chomsky has elaborated on his views.

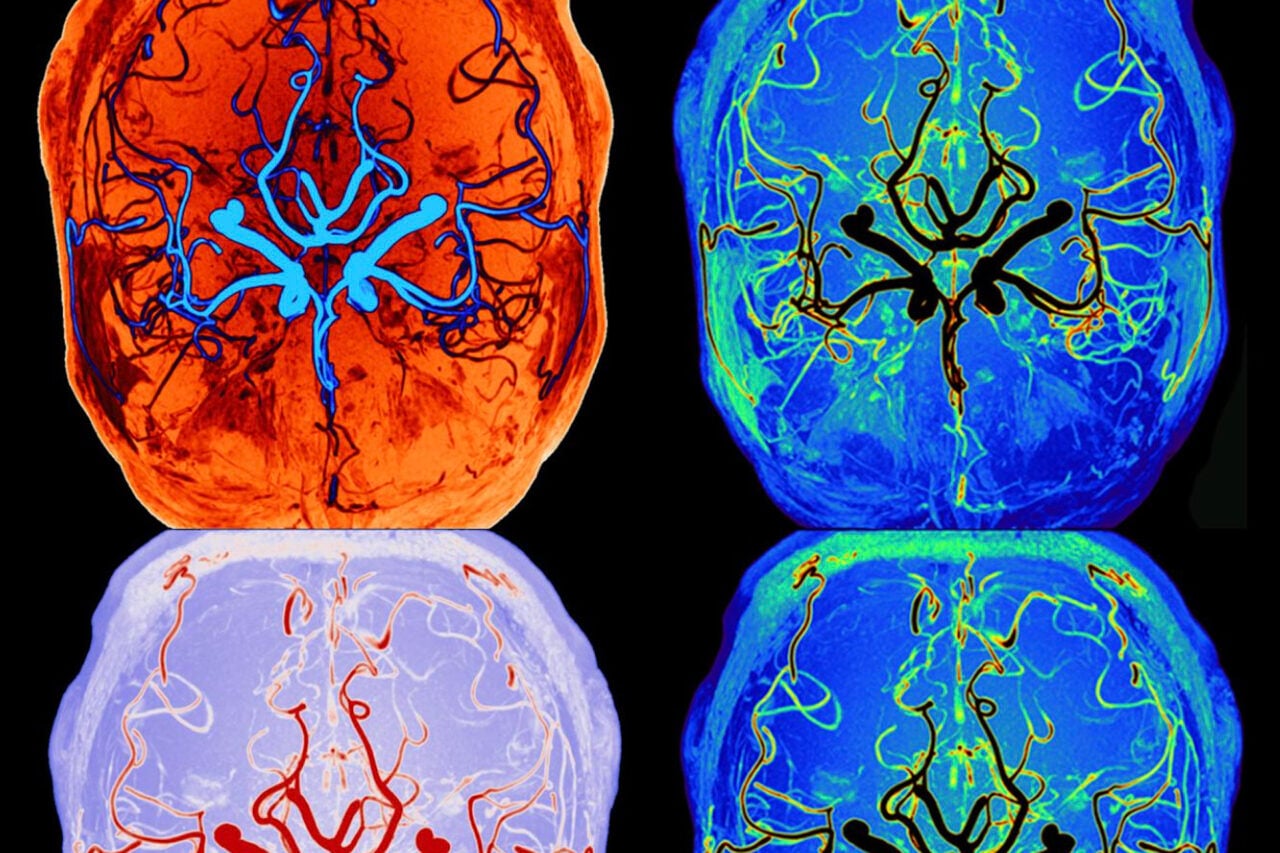

Essentially, Chomsky is worried that AI theorists have been focusing too much on what he calls associationist psychology. “It could be — and it has been argued, in my view rather plausibly, though neuroscientists don’t like it — that neuroscience for the last couple hundred years has been on the wrong track,” he says. Chomsky contends that many AI theorists have become bogged down in statistical models and fMRI scans, lines of inquiry that in his view have revealed very little about the inner workings of the brain or how those workings give rise to actual cognitive function.

Instead, says Chomsky, AI developers and neuroscientists need to sit down and describe the inputs and outputs of the problems they’re studying. When considering vision, for example, the first step is to ask what kind of computational task the visual system is carrying out. “And then you look for an algorithm that might carry out those computations and finally you search for mechanisms of the kind that would make the algorithm work,” he says. “Otherwise, you may never find anything.”

Chomsky continues:

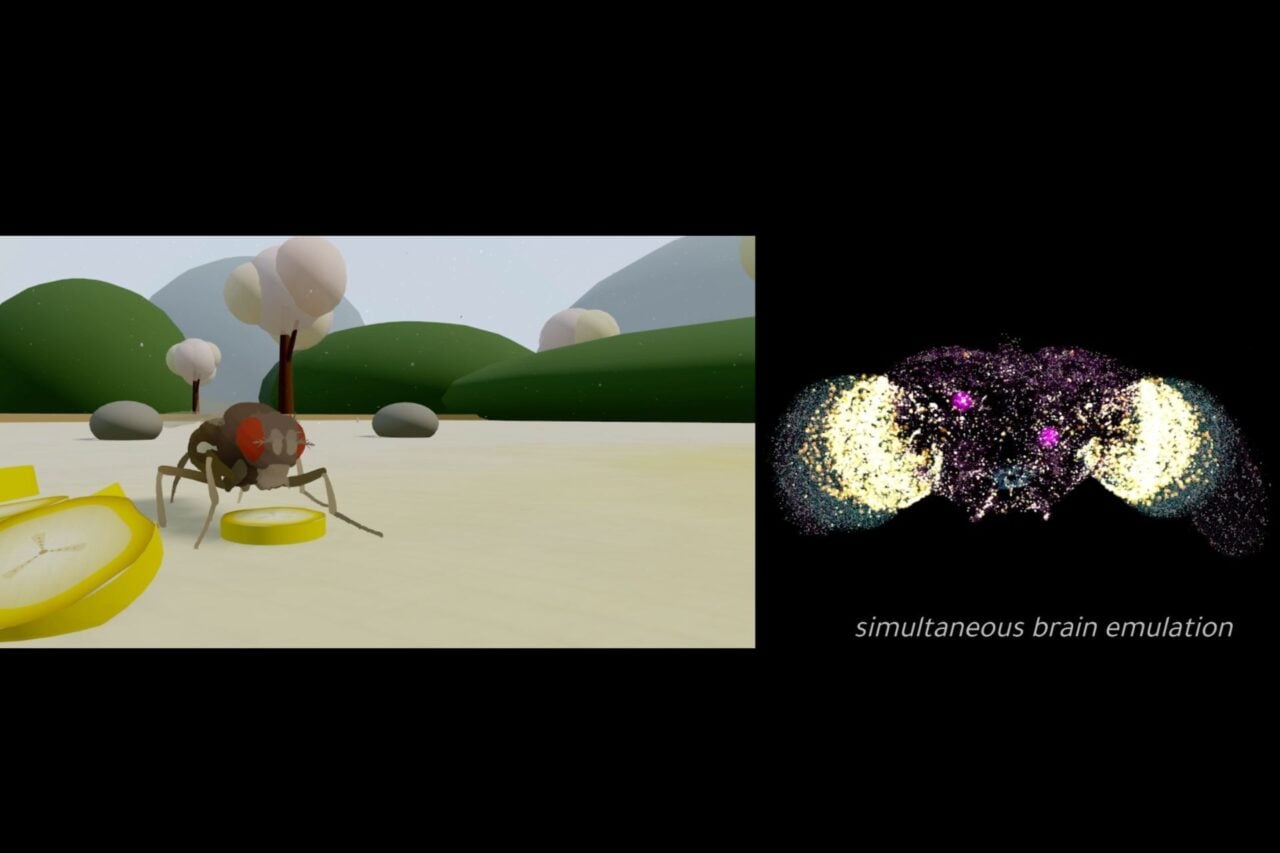

> [I]f you want to study, say, the neurology of an ant, you ask what does the ant do? It turns out the ants do pretty complicated things, like path integration, for example. If you look at bees, bee navigation involves quite complicated computations, involving position of the sun, and so on and so forth. But in general what he [Randy Gallistel] argues is that if you take a look at animal cognition, human too, it’s computational systems. Therefore, you want to look [at] the units of computation. Think about a Turing machine, say, which is the simplest form of computation, you have to find units that have properties like “read”, “write” and “address.” That’s the minimal computational unit, so you got to look in the brain for those. You’re never going to find them if you look for strengthening of synaptic connections or field properties, and so on. You’ve got to start by looking for what’s there and what’s working…

This is just a small excerpt from the full interview, which is well worth reading.
