For a decade, many other disabled people and I have watched smartphone ads promising to improve accessibility with slick, cutting-edge, life-changing tech. Let’s be honest: historically, tech companies have shown a lack of awareness toward people with disabilities. But in the past five years, there have been steady and significant improvements. (Perhaps because those companies want more money.) I was especially excited to try Google’s new Pixel 6 and Pixel 6 Pro, whose exclusive accessibility features build on the ones included in Android 12, which just rolled out to all Pixels.

I have a scratched cornea in one eye, a deaf left ear, weakened and slurred speech, and dexterity issues with my hands, so I wanted to test the new Pixels’ accessibility features to see if they actually worked. Spoiler alert: While the phone still doesn’t feel quite the same for me as it does for someone without disabilities, the gap is narrowing.

Faster, More Accurate Voice Recognition

The Pixel 6 and Pixel 6 Pro are built on Google’s in-house Tensor chip, which promises faster on-device machine learning and features powered by artificial intelligence. One feature that stands to benefit from Tensor is voice recognition. I have been trying to find a voice recognition program that works for me since 1997. A few years ago, I worked with Google’s Project Euphonia, and after I recorded thousands of repeated phrases, the team gave me an app with an algorithm tailored to my voice. The app augmented my phone’s dictation in everything from texts and comments to Google Docs and Google Assistant.

But the voice recognition that comes with most smartphones has not been a viable option for me and many other people with speech impairments. Our voices are not deciphered the way voices without speech impairments are, which leads to accidental commands like calling the wrong person at midnight, or to transcribing “I love new paintings” as “Hi love, you painting?” Because my words are frequently misheard, my favorite feature of the new dictation mode was the ability to say “clear” to delete the text instead of having to touch Gboard. When I used voice recognition with Google Assistant or spoke short, declarative sentences, it was flawless. That accuracy made Google’s Voice Access, which lets you control your phone hands-free, much easier to use. However, if I didn’t clear my throat often, or if I used voice recognition at night when my voice gets tired, it was less accurate.

Compared to past Pixels, the voice recognition is much more precise; it’s just not perfect. I tested the phones in Google Docs with words like vexatious and vicissitude, and with tricky names like Megan Thee Stallion. For me, the voice recognition got about two out of every four sentences right. I should note that I tested the Pixel 6 phones right out of the box, whereas I had spent two years with my Pixel 4a, with the Euphonia app and Google’s AI learning my voice and speech patterns. Google has said that voice recognition improves as you use it, so I expect these results to get better.

Android 12 Gains New Gesture Controls

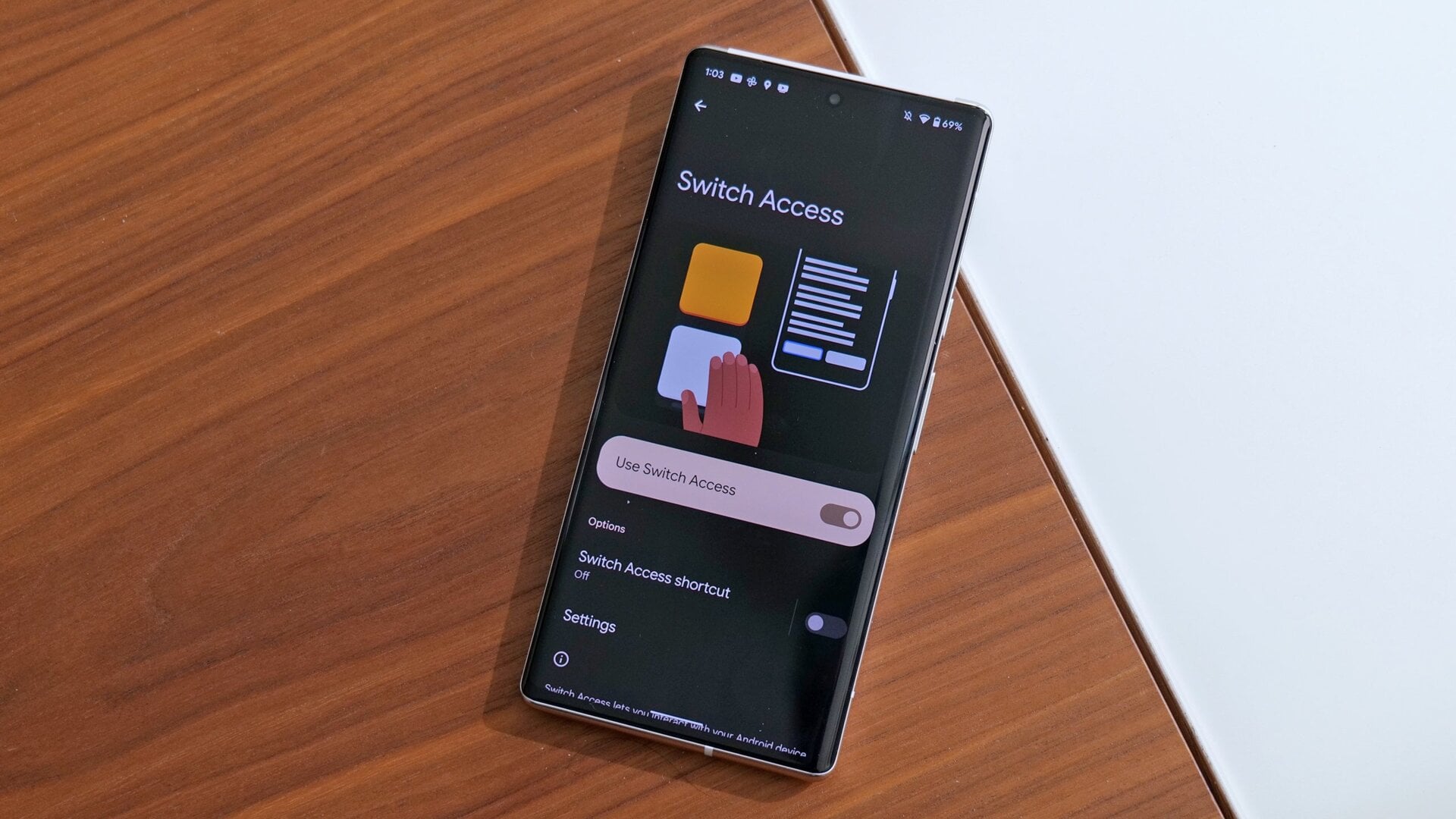

Android 12’s most impressive accessibility feature, at least on paper, is called Camera Switches. It offers a hands-free way to navigate an Android phone: You can scroll, select, go to the home screen, and more using gestures like looking in a certain direction, smiling, raising your eyebrows, or opening your mouth.

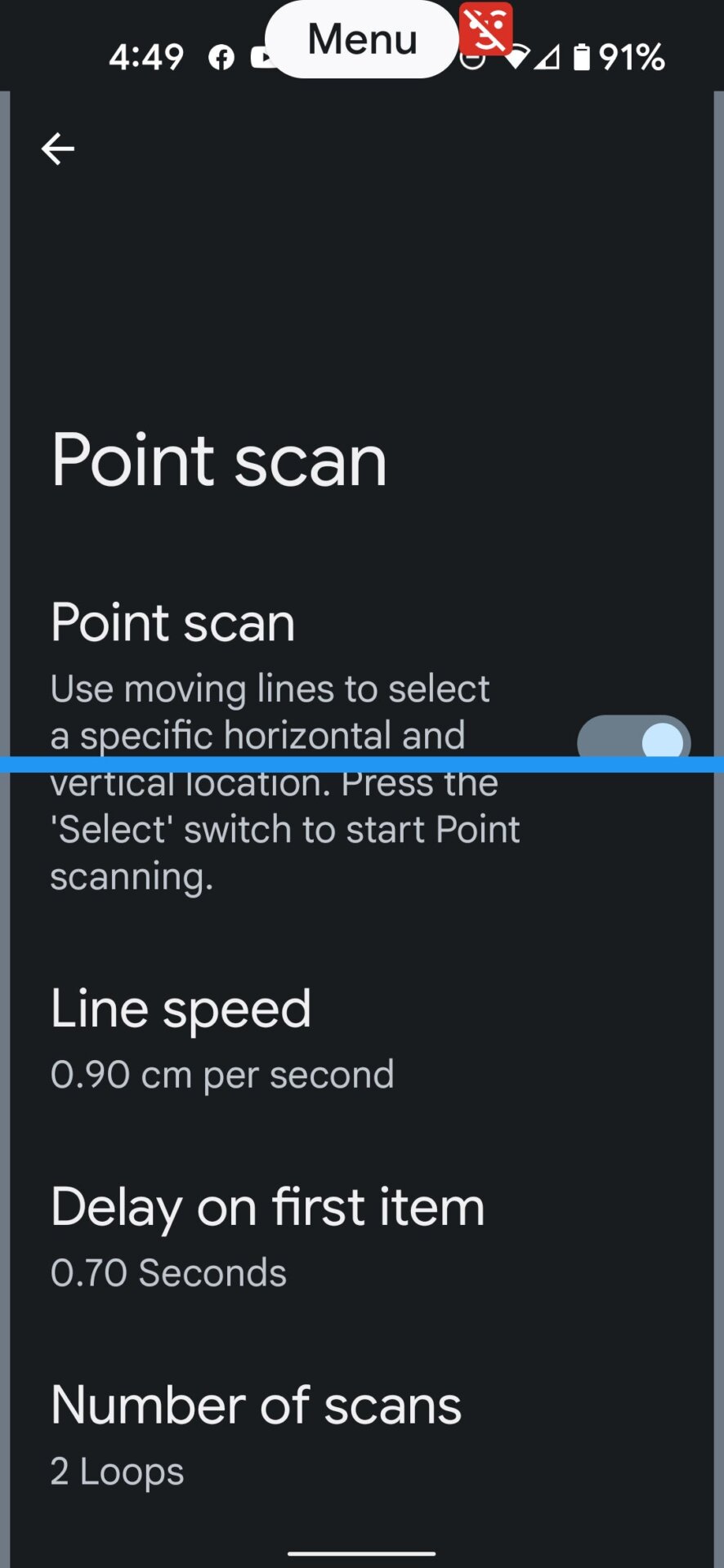

I’ll be honest: It’s incredibly complicated to set up. I won’t walk through each step here, but basically you can use a gesture to start one of two modes. Auto-scan steps through the elements on a page one by one until the phone highlights your choice. Point scan sends bars sweeping up and down and left and right across the screen until they pin down a point to select (you can change the bars’ speed if you want). If that sounds complicated, it’s because it is.

The bottom line is that it can take a while to reach the task you want, but once you’re on the right spot, the feature works like a charm, for the most part.

Camera Switches worked best when I stuck to facial expressions rather than eye movements. But because I’m limited to three switches and my left eye is 40% stitched shut, it’s still too time-consuming for me to adopt as my primary way of navigating my phone.

Another feature I was excited to try is Voice Access’s new Gaze Detection. With Gaze Detection enabled, Voice Access, which is always listening while it’s active, responds only when the camera detects that you’re looking at it. This helps you avoid accidental commands when you’re multitasking, out with friends, or don’t want to skip a favorite song. Gaze Detection is a great idea, but it was finicky for me. When the mode is on, a smiley face appears at the top of the screen. It turns blue when it recognizes that you’re looking at the camera and has a line through it when you aren’t. For me, that line constantly flickered on and off. However, Gaze Detection is in beta, my glasses may cause problems, and I’m sure the whole stitched-eye thing doesn’t help.

A More Accessible Android Interface

Reviews of Android 12 have glossed over what the new design means for the disabled, and there’s a lot to be excited about. The on-screen volume slider is thicker, which makes it easier to control with a finger, and the same applies to the big buttons in the notification shade and in your phone’s settings. Some may think these larger buttons are childish, but many people need them just to have an average smartphone experience.

The bigger visuals come at a price: a few extra seconds of scrolling or pulling down a window to see more options. That’s hardly a deal-breaker, but it’s a drawback worth noting. Essentially, Google has chosen a spread-out, ranch-style home instead of a stacked, multistory building, a layout that is particularly good for the disabled. This is where the Pixel 6 Pro’s bigger 6.7-inch screen makes a difference, since it fits more text on-screen. Also in the Pro’s favor: its haptics seem stronger than the standard Pixel 6’s.

With past versions of Android, you could enlarge app labels and magnify text in apps, but it’s nice to see Google making easier-to-read text the standard. Android 12’s much-talked-about Material You feature also aids the visually impaired. Material You takes colors from your lock or home screen and applies them as a theme throughout your phone, producing a natural look that complements Android’s contrast options. For example, I use my phone in dark mode, and the background photo on my lock screen is a Caribbean island, so my theme is turquoise and the text in my notification shade is gray. This contrast is easy on my eyes, and you can customize your Material You colors in the settings if you want.

Back in 2019, Google introduced a feature called Live Caption, which captions videos in real time. Live Caption is a boon for the d/Deaf and hard-of-hearing communities, but I think the general public is overlooking it. It can easily be toggled on and off in settings, and it lets you follow any video with the sound turned all the way down, which is useful when you’re in a cafe, out shopping, or trying to avoid being glared at on the bus. Google should consider two improvements here: make the caption box resizable, so users can make it as thick or as wide as they want, and let users choose any colors for the text and the box so they’re easy to see.

A Promising Work in Progress

Although neither the Pixel 6 nor the Pixel 6 Pro has the effortless, disability-friendly Face Unlock, and the Pixel 6 Pro’s tall body and curved edges might be unwieldy for some, the Pixel 6 phones are some of the most accessible Android phones today, and that’s entirely due to their software. Their bigger, more efficient batteries also last longer on a charge, which matters because AI features like Live Caption drain the battery faster when you use them constantly.

But batteries will get better, and other Android phones will eventually gain features similar to Camera Switches or Gaze Detection. What really sets Google’s phones apart is the voice recognition made possible by the Tensor chip. While it may not solve every accessibility issue, or be as instantaneous as I had hoped, it’s one of the most substantial accessibility upgrades a Pixel has received.