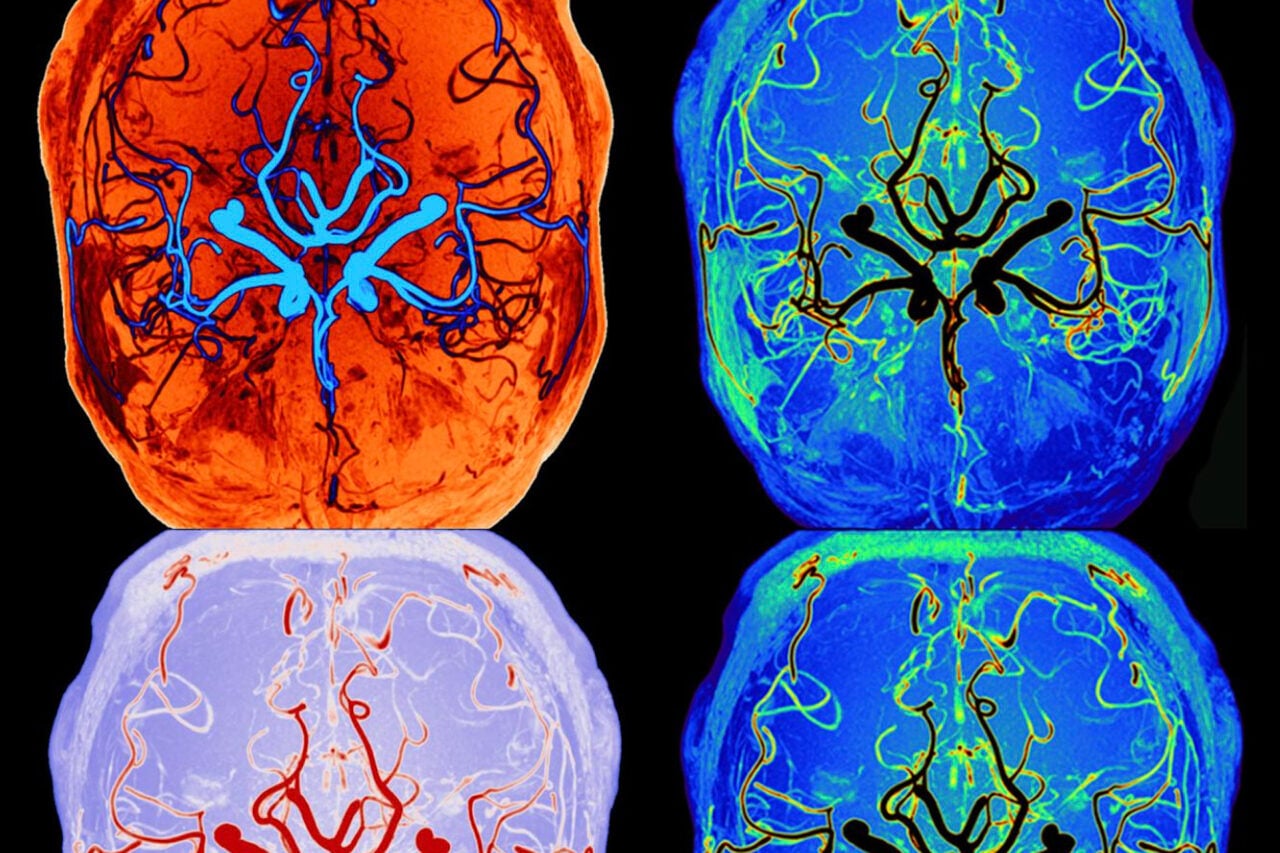

Ever since Google unveiled its Deep Dream algorithm, people have been having a blast feeding all manner of images into the program. It was only a matter of time before some enterprising neuroscientist thought to run a couple of brainy MRI images through the software.

Juan (“Zeno”) Sanchez-Ramos did just that (above). A neurologist by training, specializing in movement disorders, the Venezuelan-born physician’s son is also an artist in his spare time. “I’ve always been fascinated by how images occur in the brain, how light is transduced so the brain can create these images,” he said, citing an early 1968 painting depicting lines representing the visual pathways flowing into the cranium and blossoming into a flower. A more recent series of limited edition prints, called “Neon Neurons,” plays with the branching patterns of neurons and their dendrites.

Naturally, he was intrigued when he heard about the Deep Dream artificial neural network (ANN). He took a standard MRI image of the cerebral cortex, brain stem, and medulla, enhanced it slightly, and fed it into the program. “What it does to images you feed into the system is similar to what [happens] when you feed images into the eyes, or when you’re on LSD or mescaline,” he told Gizmodo.

He’s not the only one to note the similarity between Deep Dream imagery and the visual distortions and patterns people experience while tripping on acid. Per The Atlantic:

When people take drugs like LSD, they provoke a part of the brain’s cortex that “leads to the generability of these sorts of patterns,” [Lucas] Sjulson said. So it makes sense that asking a computer to obsess over one layer of imagery that it would normally perceive as multilayered would produce a similar visual effect. “I think that this is probably an example of some sort of similar phenomenon. If you look at what the brain does, the brain evolved over long periods of time to solve problems, and it does so in a highly optimized way. Things are learned with humans developmentally through evolution and then also through visual experience.”

That’s how people are training computers to see, too: through visual experience. How the neural network is seeing, then, may be more revealing than what it sees. Which is, of course, what Google engineers set out to explore in the first place.

In fact, it didn’t take long for someone to run footage from the film Fear and Loathing in Las Vegas through the program.

Deep Dream is modeled after the structure of a mammalian cerebral cortex, and may shed light on the mysterious workings of the human brain in turn — possibly even on the puzzle of human consciousness. “As we begin to investigate neural networks at multiple levels, we will eventually understand how consciousness is nothing but an emergent property of neural network functional activity,” Sanchez-Ramos said. And as ANNs continue to improve, this raises an intriguing question that has fueled science fiction for decades. “I think [AIs] can compute and based on the program it will give you product, but will there ever be an emergent consciousness in a computer? Could there be a self-consciousness?” he muses.

That day is likely far into the future. As impressive as the Deep Dream neural network is, Sanchez-Ramos notes that it is a pale reflection of the human brain. The human brain has billions of neurons, all interconnected, compared to hundreds, maybe thousands of artificial neurons in Deep Dream. Still — a neurologist can dream.

You can see more of Zeno’s art here.

Image credit: Juan “Zeno” Sanchez-Ramos.