By capturing brain signals associated with the mechanical aspects of speaking, such as movements of the jaw, lips, and tongue, researchers have created a virtual, computer-based vocal tract capable of intelligible speech. The system could eventually be used by people who have lost the capacity to speak.

Conventional speech-generating devices, like the one used by the late Stephen Hawking, typically use nonverbal movements, such as twitches of the eyes or head, to produce words. Users have to spell each word letter by letter, which takes time and effort. At best, these assistive devices produce six to 10 words per minute, a far cry from natural speech, which runs at around 100 to 150 words per minute.

For people who have lost the capacity to speak, whether from Parkinson’s, ALS, stroke, or other brain injury, conventional speech-generating devices help but remain slow and laborious. In an effort to create something more efficient, a research team led by Gopala Anumanchipalli from the University of California, San Francisco developed a system that simulates the mechanical aspects of speech by tapping directly into the brain.

The system collects and maps brain signals that trigger movements of the jaw, larynx, lips, and tongue. A computer then decodes these signals to produce clear sentences with a speech synthesizer. At a press conference held yesterday, the researchers described the new device as a “virtual vocal tract.” The details of this work were published today in Nature.

This latest speech-generating device is the second to appear this year that uses brain signals to produce speech. Back in January, a team led by neuroscientist Nima Mesgarani from Columbia University created a system that captures a person’s brain responses to heard speech, which were then decoded by machine learning to produce synthesized speech. The approach taken by the UC San Francisco researchers is a bit different. It also taps into brain signals, but instead of decoding responses to heard speech, it decodes the brain signals responsible for producing speech.

Importantly, neither system collects a person’s covert, or imagined, speech, meaning the words we say to ourselves inside our heads. Current science and technology are nowhere near that level of sophistication. Instead, these new approaches rely on neural activity in the sensory cortex (speech perception, as in the Mesgarani system) or in the motor cortex (speech production, as in the new device).

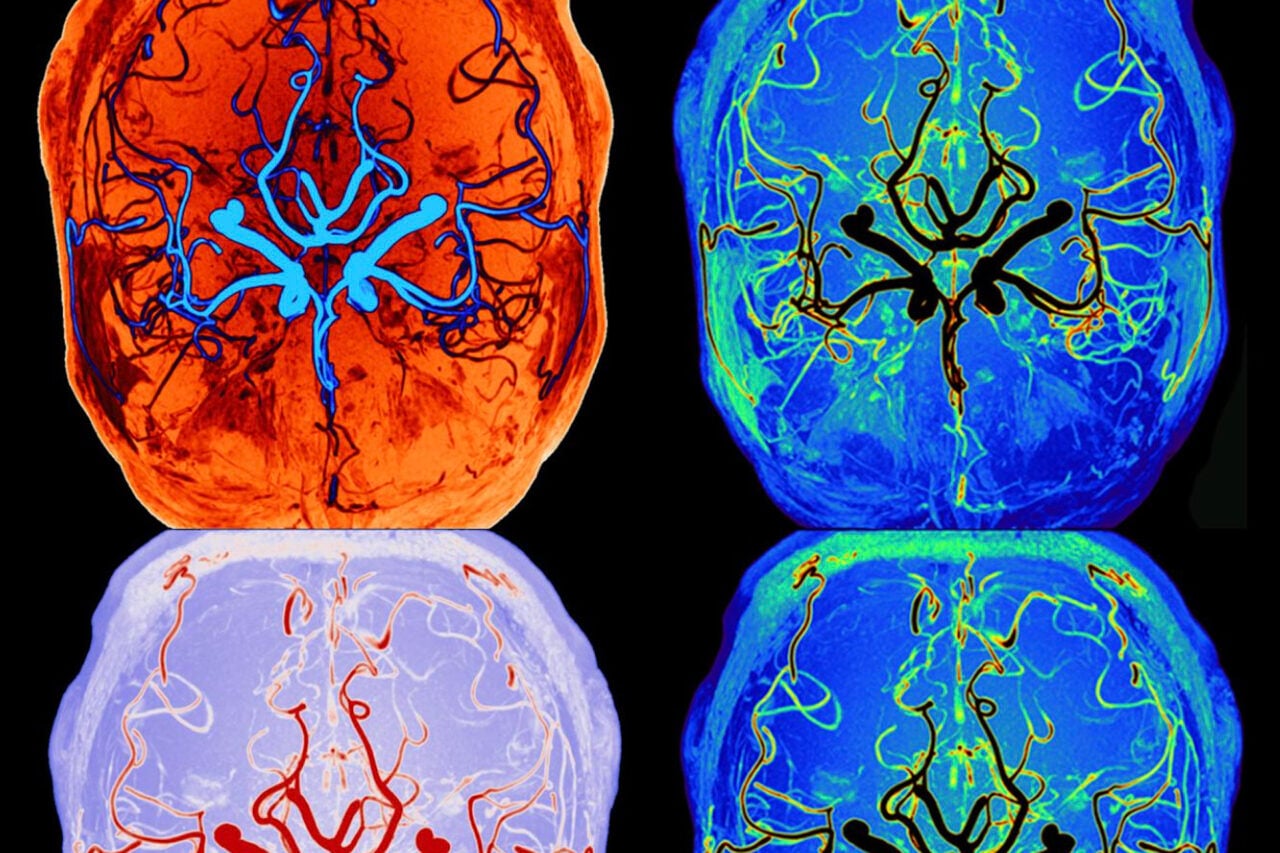

To create the virtual vocal tract, Anumanchipalli and his colleagues recruited five patients who were scheduled to undergo brain surgery to treat their epilepsy. None of the participants had issues with producing verbal speech, and all were native English speakers. Brain surgeons implanted electrode arrays directly onto their brains, specifically the areas associated with language production. The patients then spoke several hundred sentences aloud, while the researchers recorded the associated cortical activity.

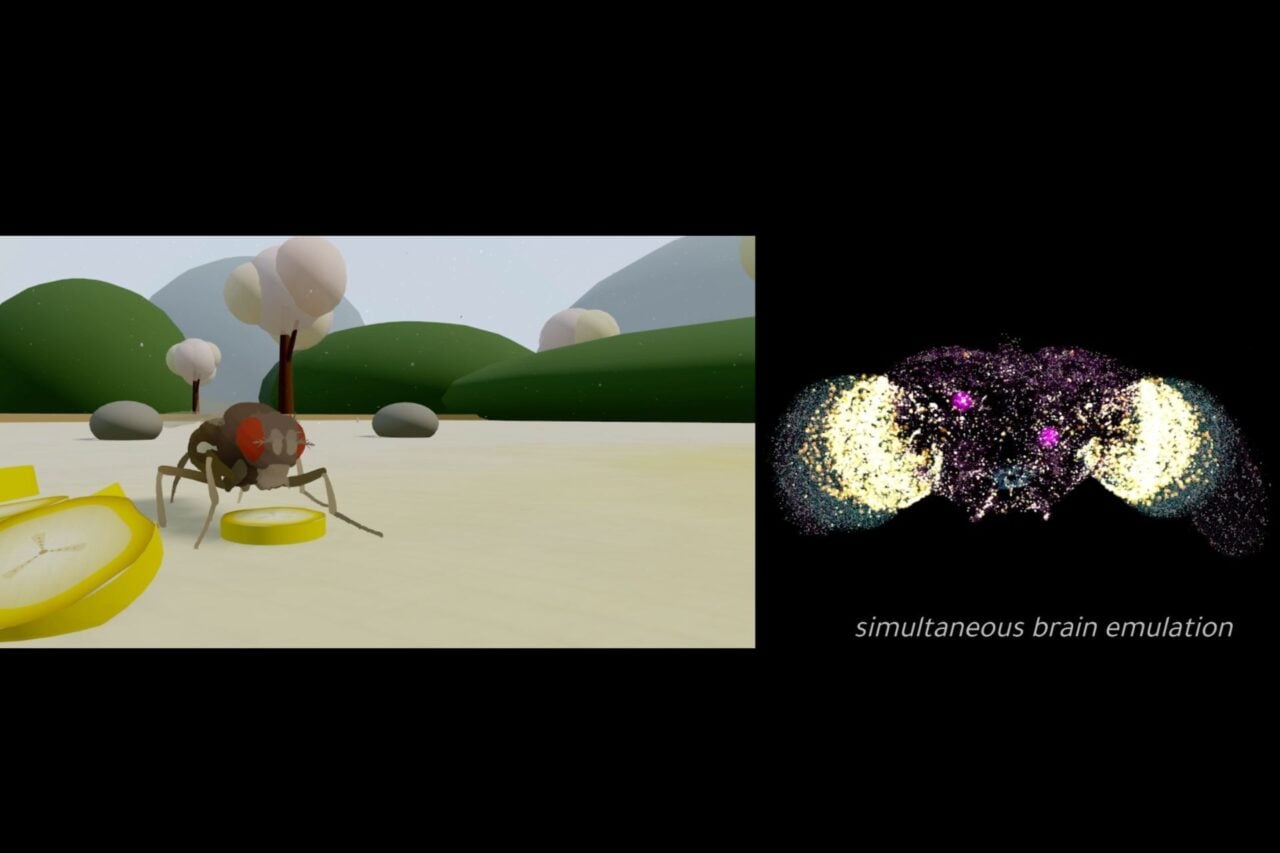

Over the months that followed, this data was decoded and linked to specific movements of the vocal tract. In a way, the researchers reverse-engineered the mechanics of verbal speech, mapping the various ways sounds are produced, for example, by the tongue on the roof of the mouth or the tightening of the vocal cords. A machine-learning algorithm decoded these signals, enabling an intelligent speech synthesizer to convert and express the signals as audible speech. The result was a computer-based, virtual vocal tract that—in theory—could be controlled by brain activity.
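The two-stage idea described above, brain signals to vocal tract movements and then movements to sound, can be sketched in a few lines of Python. This is only a toy illustration under loud assumptions: the function names, the tiny weight matrices, and the use of simple linear maps are all hypothetical stand-ins, not the machine-learning model the researchers actually used.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(weights: Matrix, x: Vector) -> Vector:
    """Multiply a weight matrix by a vector (one output value per matrix row)."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def decode_articulation(neural_frame: Vector, stage1: Matrix) -> Vector:
    """Stage 1 (hypothetical): cortical activity -> estimated vocal tract movements."""
    return matvec(stage1, neural_frame)


def synthesize_acoustics(articulation: Vector, stage2: Matrix) -> Vector:
    """Stage 2 (hypothetical): vocal tract movements -> acoustic features."""
    return matvec(stage2, articulation)


def virtual_vocal_tract(neural_frame: Vector, stage1: Matrix, stage2: Matrix) -> Vector:
    """Chain both stages: brain signals -> movements -> sound features."""
    return synthesize_acoustics(decode_articulation(neural_frame, stage1), stage2)


# Made-up toy weights: 3 electrode channels -> 2 articulators -> 2 acoustic features.
STAGE1 = [[1.0, 0.0, 0.5],
          [0.0, 2.0, 0.0]]
STAGE2 = [[1.0, 1.0],
          [0.5, 0.0]]

frame = [2.0, 1.0, 4.0]  # one made-up frame of recorded activity
acoustics = virtual_vocal_tract(frame, STAGE1, STAGE2)
print(acoustics)
```

The point of the intermediate step is that the decoder never maps brain activity straight to sound; it first estimates what the jaw, lips, tongue, and larynx would be doing.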

To turn theory into action, the researchers then tested the system on a volunteer fitted with intracranial electrodes. The person was instructed both to talk out loud and to mime, or mouth, speech without uttering a sound. The latter method, known as subvocal speech, was meant to simulate a person who has lost the capacity for speech but is still familiar with the mechanical aspects of talking. Fed with this data, the virtual vocal tract was able to produce speech with surprising clarity. Both methods resulted in intelligible speech, though speech spoken aloud performed a bit better than subvocal speech.

In follow-up tests, a panel of several hundred native English speakers was recruited to decipher the synthesized speech. The panelists were given a pool of words to choose from and told to select the best match. Around 70 percent of the words were correctly transcribed. Encouragingly, many of the missed words were close approximations, such as mistaking “rodent” for “rabbit.”
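For a sense of how a figure like "70 percent of words correctly transcribed" might be tallied, here is a minimal Python sketch. It assumes a simple position-by-position comparison between the intended sentence and a listener's transcript; the function name and the example sentences are hypothetical, and the study's actual scoring method is not described here.

```python
def word_accuracy(reference: str, transcript: str) -> float:
    """Fraction of reference words a listener got right, compared position by position.

    Assumes the transcript has one chosen word per reference word, as it would
    if listeners picked each word from a fixed pool.
    """
    ref_words = reference.lower().split()
    heard_words = transcript.lower().split()
    if not ref_words:
        raise ValueError("reference sentence must contain at least one word")
    matches = sum(r == h for r, h in zip(ref_words, heard_words))
    return matches / len(ref_words)


# Made-up example: one near miss out of four words.
score = word_accuracy("the rodent ran away", "the rabbit ran away")
print(f"{score:.0%} of words matched")
```

A stricter or looser metric (for example, one that gives partial credit for near misses) would yield a different number for the same transcripts.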

“We still have a ways to go to perfectly mimic spoken language,” Josh Chartier, a co-author of the new study, said in a statement. “We’re quite good at synthesizing slower speech sounds like ‘sh’ and ‘z’ as well as maintaining the rhythms and intonations of speech and the speaker’s gender and identity, but some of the more abrupt sounds like ‘b’s and ‘p’s get a bit fuzzy. Still, the levels of accuracy we produced here would be an amazing improvement in real-time communication compared to what’s currently available.”

As noted, the system is designed for patients who have lost the capacity for speech. At the press conference held yesterday, study co-author Edward Chang said it remains “an open question” as to whether the system could be used by people who have never been able to talk, such as people with cerebral palsy. It’s “something that will have to be studied in the future,” he said, “but we’re hopeful,” adding that, “speech would have to be learned from the bottom up.”

A major limitation of this virtual vocal tract is the need for brain surgery and cranial implants to customize the system for each person. For the foreseeable future, the approach will have to remain invasive, as no existing device can record brain signals at the required resolution from outside the brain.

“This study represents an important step toward the actualization of speech neuroprosthesis technologies,” Mesgarani, who wasn’t involved with the new research, told Gizmodo in an email. “One of the main barriers for such devices has been the low intelligibility of the synthesized sound. Using the latest advances in machine learning methods and speech synthesis technologies, this study and ours show a significant improvement in the intelligibility of the decoded speech. What approach will ultimately prove better for decoding the imagined speech condition remains to be seen, but it is likely that a hybrid of the two may be the best.”

Indeed, an exciting aspect of this field is the rapid rate of development and the application of different techniques. As Mesgarani correctly pointed out, it’s possible that multiple approaches could be combined into a single system, potentially leading to more accurate speech results.

As a final, speculative aside, these brain-computer interfaces could conceivably be used one day to produce a form of technologically enabled telepathy, or mind-to-mind communication. Imagine, for example, a device like the one developed by the UC San Francisco researchers, but with the speech synthesizer hooked directly to a receiving person’s auditory cortex, similar to a cochlear implant (the auditory cortex is associated with hearing). With the two elements linked wirelessly, two interconnected people could theoretically communicate just by silently miming (or imagining the movements of miming) speech: they would hear each other’s words, but no one else would.

But I’m getting ahead of this latest research. Most importantly, the new system could eventually be used to help patients with ALS, multiple sclerosis, stroke, and traumatic brain injury regain clearer speech. And, as the researchers suggested, it could potentially even give a voice to individuals who never had the capacity for speech.