Seemingly with each passing week, YouTube faces another content moderation problem. And despite the company’s own efforts at moderating its platform, it can’t seem to get its arms around the issue.

A profile of the company’s CEO Susan Wojcicki published Wednesday by the New York Times captures in clear terms the extent to which the company struggles with policing the abusive, exploitative, or even moronic videos uploaded to its platform. As one example, the Times detailed a policy meeting during which Wojcicki and her YouTube employees debated whether the so-called Condom Challenge promoted a potentially dangerous or life-threatening scenario. After all, they worried, someone putting a condom over their head might suffocate and die. Per the Times:

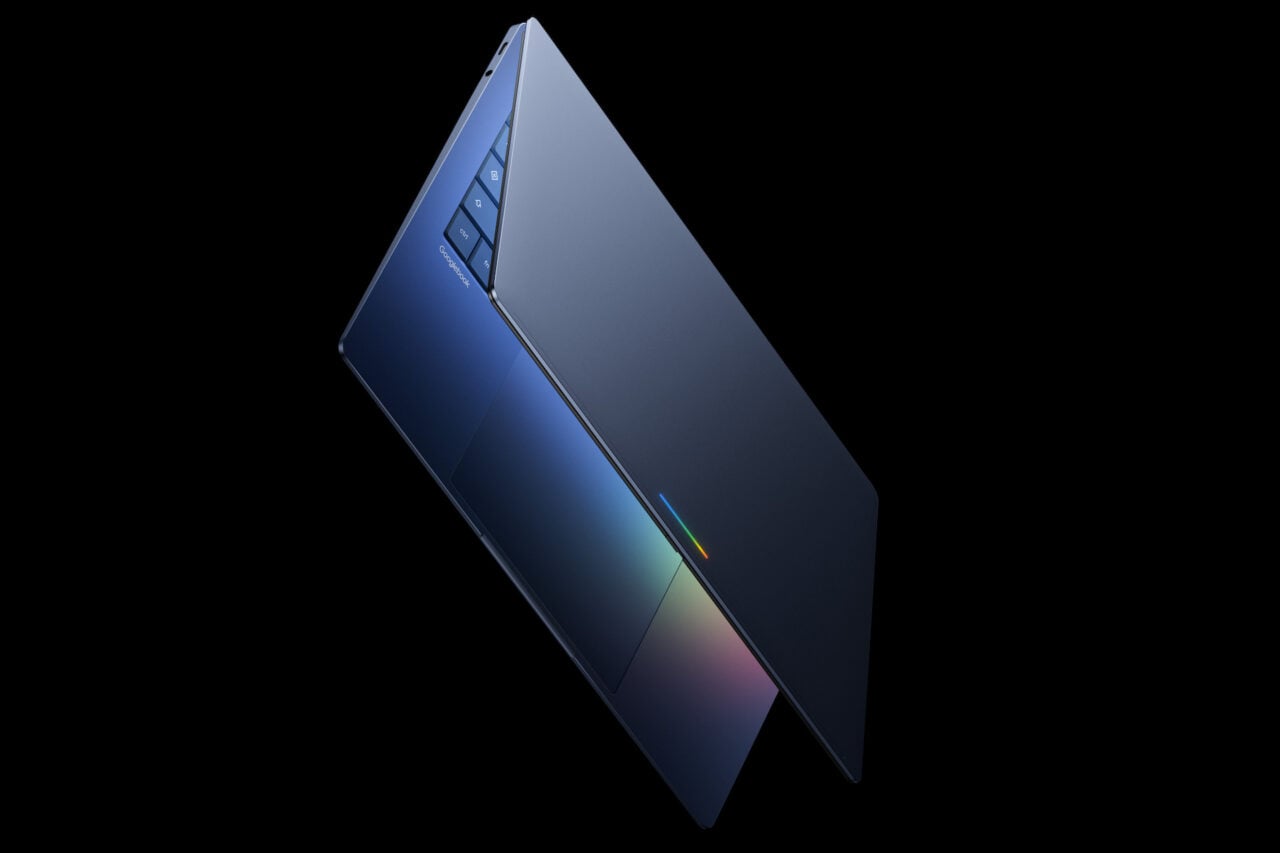

“There’s no reason we want people putting any kind of plastic over their head,” she said, peering over the screen of her open laptop. But the video stayed up. For every minute Ms. Wojcicki spent discussing it, users uploaded to the site an additional 500 hours of footage.

These issues over content moderation, of course, aren’t limited to asinine challenges, and the ensuing damage control when the Google subsidiary does run into more serious issues often plays out before us in real time. The Times pointed to the now-infamous video by YouTuber Matt Watson that detailed a system YouTube commenters used to facilitate what he described as a “soft-core pedophile ring.”

This certainly wasn’t the first time that YouTube has struggled with content that exploited children. However, per the Times, Wojcicki evidently pointed to this situation as a kind of measure of growth, even as another moderation issue quickly followed:

Ms. Wojcicki said while the situation was regrettable, it also demonstrated that some of YouTube’s new policies are helping. YouTube was able to turn off comments on millions of videos over a holiday weekend, something it would have struggled to do in the past.

A month later, when YouTube’s human reviewers were overwhelmed by a deluge of videos of the mass shooting in New Zealand — at one point, footage of the shooting was being uploaded every second — Ms. Wojcicki said the company intentionally disabled search functions. It also bypassed human reviewers and let its computers immediately take down any videos that were automatically flagged.

Hey, better is good. That’s hard to deny. But it’s also hard to say that pulling the plug on certain features every time there’s a high-profile crisis can really be considered progress. It only addresses the big headline-grabbing incidents that might get YouTube in trouble. “It’s not like there is one lever we can pull and say, ‘Hey, let’s make all these changes,’ and everything would be solved,” Wojcicki told the Times angrily. But the fixes she’s describing amount to simply pulling a lever any time too much attention gets directed at something horrific happening on YouTube. It’s all Band-Aids on a wound that’s gushing billions of dollars.

There’s a tension between pursuing continued growth and algorithm-driven engagement and managing the uglier parts of YouTube’s platform, and problems emerge as a result. It’s a dilemma that Bloomberg examined earlier this month in an explosive report that charged YouTube’s top brass, Wojcicki among them, with turning a blind eye to the platform’s ongoing content problems in favor of engagement.

A YouTube spokesperson told Bloomberg that “responsibility remains our number one priority” and that the company has “taken a number of significant steps, including updating our recommendations system to prevent the spread of harmful misinformation,” among other initiatives. However, as recently as last week, during the debut of the historic image of a black hole, YouTube’s recommendations kicked in with space-related conspiracy theory videos. On Monday, YouTube’s “improved” algorithm fired up while the historic Notre Dame Cathedral burned. Videos showing the fire started serving up useless information windows informing users about the facts behind the 9/11 attacks. How were these incidents related? They were not. But busted-ass algorithms were just doing their job.

Wojcicki told the Times that she knows the company “can do better, but we’re going to get there.” This is the same familiar navel-gazing repeatedly heard from other social media CEOs like Mark Zuckerberg and Jack Dorsey when they’re called on to answer for many of the same issues on their own platforms.

But that’s the rub: These are issues that almost exclusively arise as a result of the very platforms on which they exist. No one thinks that YouTube can stop every bad video from being uploaded, but it insists on using flawed algorithms to recommend content. That’s when YouTube takes responsibility for the content whether it wants to or not. And so long as these issues continue to proliferate, the blame can be squarely placed at Wojcicki’s feet. She can get angry all she wants, but she can’t claim that there’s no lever to pull to fix the problem when there’s always a plug to pull on the whole damn site. If she doesn’t like that option, then she should get to work.