Meta is intentionally limiting search results on Threads for keywords related to Covid-19 and a variety of other potentially controversial topics. In a statement sent to Gizmodo, a spokesperson for the tech giant confirmed the “temporary” restrictions and said the social network’s newly released search function may not show results for keywords that “show potentially harmful or sensitive content.” The spokesperson said Meta intends to make results for those keywords available “once we are confident in the quality of the results.”

“We just began rolling out keyword search for Threads to additional countries last week,” the spokesperson said. “The search functionality temporarily doesn’t provide results for keywords that may show potentially harmful or sensitive content.”

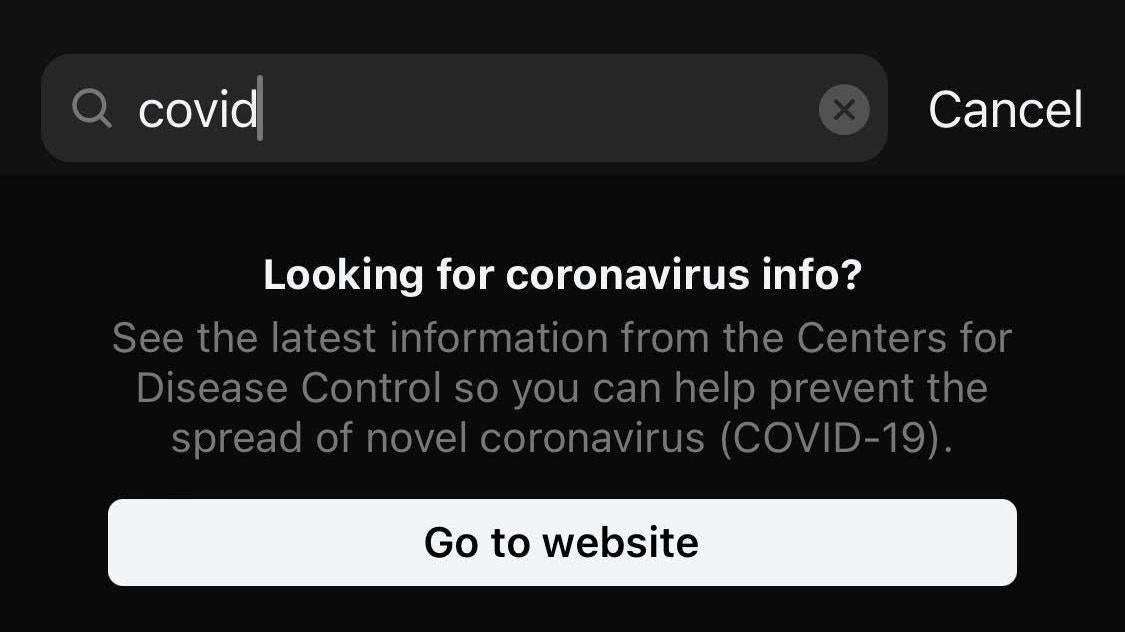

News of the blocked results comes just days after the company introduced its highly requested search feature. Almost immediately, virology researchers and critics of social media moderation policies alike began noticing that searches for keywords like “covid,” “vaccine,” and “long covid” returned an entirely blank screen, apart from a pop-up link redirecting users to the website of the Centers for Disease Control and Prevention (CDC).

In a phone call, the Meta spokesperson said the decision to limit the results was made out of an abundance of caution amid fast-paced product iteration. The temporary restrictions aren’t limited to pandemic topics, either. Gizmodo found that searches for “self-harm,” “suicide,” and “murder” similarly did not yield results. The spokesperson told Gizmodo that other search results related to suicide or self-harm were also limited as a safety measure.

“People will be able to search for keywords such as ‘COVID’ in future updates once we are confident in the quality of the results,” the spokesperson added.

Instagram head Adam Mosseri acknowledged the limited search results in a post on Twitter on Monday, in which he said the company was trying to take a more cautious approach with Threads.

“We’re working to support more searches quickly,” Mosseri said. “We’re trying to learn from last mistakes and believe it’s better to bias towards being careful as we roll out search.”

I hear you, and we're working to support more searches quickly. We're trying to learn from last mistakes and believe it's better to bias towards being careful as we roll out search.

— Adam Mosseri (@mosseri) September 11, 2023

The content restrictions come amid a surge in severe Covid-19 cases. Hospitalizations related to the virus rose 16% between mid- and late August, according to recent CDC data, with some experts fearing the true figures may be even higher. Though the uptick falls far short of early-pandemic levels, it has nonetheless fueled growing anxiety among some and led to the reinstatement of mask mandates at some schools and universities. Those examples are relatively few and far between, but they’ve still drawn the ire of vocal commentators on the political right who fear a return to large-scale economic shutdowns.

Americans have had enough COVID hysteria. WE WILL NOT COMPLY! https://t.co/2lgmJQJthC

— Rep. Marjorie Taylor Greene🇺🇸 (@RepMTG) August 22, 2023

This is all uncomfortably familiar territory for Meta, which received intense backlash from all sides for its handling of Covid-related content on Facebook and Instagram during the height of the pandemic. At the time, some of the internet’s weirdest conspiracy theories about the virus, and eventually the vaccine, began their algorithmic ascent on Meta’s platforms. Health experts and lawmakers slammed Meta for what they viewed as a hands-off approach to moderation, with President Biden going so far as to accuse Facebook of “killing people.” The president later walked that language back slightly.

Internal Facebook documents revealed by Gizmodo as part of The Facebook Papers illustrate how Meta’s tolerance for anti-vaccine content and health misinformation long predated the first cases of Covid-19 in the US. The documents show that researchers at the company were well aware of the levels of medical misinformation surfacing in users’ feeds in the early days of the pandemic and understood the platform could play a role in “severely impacting public health attitudes.”

With case numbers ticking up again and a new platform still maturing, it’s understandable why Meta may feel inclined to avoid wading into the health misinformation muck altogether. Mosseri has openly admitted as much, saying Threads was “not going to do anything to encourage” politics or hard news.

“The goal isn’t to replace Twitter,” Mosseri said in an exchange with a reporter from The Verge.

But health experts speaking with The Washington Post say Meta’s decision to try to literally remove itself from the conversation could have harmful consequences for users who turn to social media for meaningful resources and information. Nearly half (48%) of US adults surveyed by Pew Research in 2021 said they got a lot or some of their information about Covid-19 from social media.

“Social media is a lifeline for patients, literally. Long covid patients have died of organ failure, infections, cardiac events and more, and social media is one place they can share information,” World Health Network Outreach Director Julia Doubleday said. “Cutting off communication between suffering and disabled patients is cruel in the extreme. It’s indefensible.”