Point a projector at anything other than a completely flat screen, and you’ll end up with a distorted image. But a team of researchers from the Ishikawa Watanabe Laboratory in Tokyo has designed a projector that can compensate for warped and moving surfaces, making the image look less like a projection and more like a perfectly applied sticker.

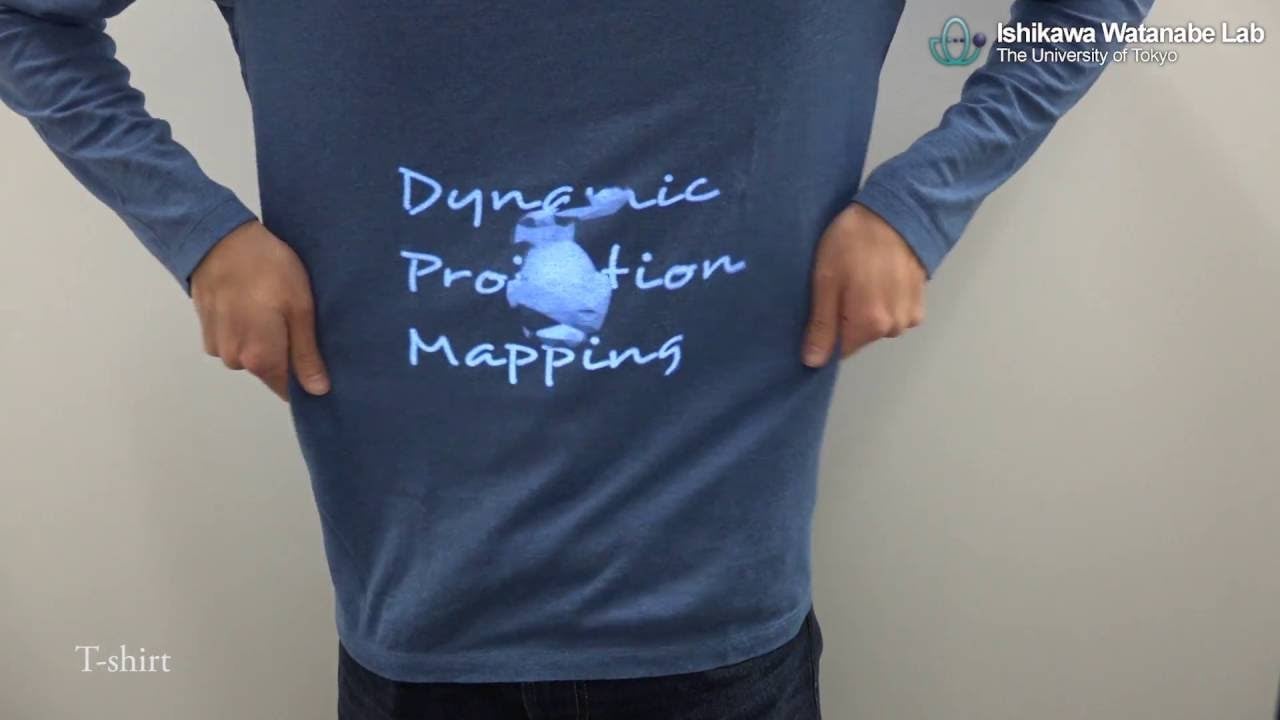

It’s a technique known as projection mapping, and it’s similar to how textures are applied to 3D models in a video game. But this projector works in the real world, on almost any irregular object, even those with non-rigid surfaces. So even when a t-shirt is fluttering in the breeze from a fan, the projected image on it follows every small movement and deformation.

The exact technologies behind this projector’s impressive abilities are presumably a closely guarded secret. You don’t do research like this for years and then simply give away your intellectual property. But the system relies on a grid applied to objects in infrared ink, which is invisible to the human eye, and a camera that can see that grid and track how it warps in real time.

Using that deformed grid as a reference, the projector can then warp the image so that it matches the surface it’s hitting. Similar setups have existed before, but by bumping the projector’s frame rate to an astonishing 1,000 frames per second, the researchers cut the delay between what the camera sees and what the projector displays to a mere three milliseconds. To the human brain, that looks instantaneous.
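The researchers’ actual pipeline isn’t detailed, so what follows is only a minimal sketch of the general idea, built on assumptions of our own. It assumes the camera reports where each node of a regular infrared grid has landed, already converted into projector pixel coordinates, and it places each point of the source image by bilinear interpolation between the four deformed grid nodes surrounding it. The names `warp_point` and `frame_interval_ms` are hypothetical helpers, not part of any published system. The second helper covers the timing arithmetic: at 1,000 frames per second each frame lasts 1 ms, so a 3 ms delay amounts to roughly three frames.

```python
# Hypothetical sketch of grid-based warping; not the lab's actual method.
# Assumption: nodes[r][c] is the observed position (in projector pixels)
# of the IR grid node at row r, column c.
from typing import List, Tuple

Point = Tuple[float, float]


def warp_point(u: float, v: float, nodes: List[List[Point]]) -> Point:
    """Map a texture coordinate (u, v) in [0, 1] x [0, 1] to projector pixels
    by bilinear interpolation inside the deformed grid cell containing it."""
    rows, cols = len(nodes), len(nodes[0])
    # Convert (u, v) to grid-cell units and find the enclosing cell.
    gx, gy = u * (cols - 1), v * (rows - 1)
    c = min(int(gx), cols - 2)
    r = min(int(gy), rows - 2)
    fx, fy = gx - c, gy - r  # fractional position inside the cell
    (x00, y00), (x10, y10) = nodes[r][c], nodes[r][c + 1]
    (x01, y01), (x11, y11) = nodes[r + 1][c], nodes[r + 1][c + 1]
    # Interpolate along the cell's top and bottom edges, then between them.
    top_x, top_y = x00 + (x10 - x00) * fx, y00 + (y10 - y00) * fx
    bot_x, bot_y = x01 + (x11 - x01) * fx, y01 + (y11 - y01) * fx
    return (top_x + (bot_x - top_x) * fy, top_y + (bot_y - top_y) * fy)


def frame_interval_ms(fps: float) -> float:
    """Time between consecutive frames, in milliseconds."""
    return 1000.0 / fps


if __name__ == "__main__":
    # One grid cell whose right edge has been pushed down by 10 pixels.
    nodes = [[(0, 0), (100, 10)], [(0, 100), (100, 110)]]
    print(warp_point(0.5, 0.5, nodes))    # (50.0, 55.0)
    print(3 / frame_interval_ms(1000))    # 3.0 frames of delay
```

A real system would presumably evaluate a mapping like this for every pixel or mesh vertex, typically on a GPU, inside that one-millisecond frame budget; the plain-Python version only illustrates the geometry.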

So besides being used to create jaw-dropping YouTube demos, what other uses could there be for this technology? At a basic level, it could make setting up a projector at the office dead easy, as you wouldn’t have to fiddle with keystone correction or other image adjustments to match your screen. At the other end of the spectrum, imagine the sponsor logos on an athlete’s jersey continuously changing throughout a game.

If you really want your imagination to run wild, this technology could even enable a crude version of Star Trek: The Next Generation’s holodeck, projecting custom outfits onto users who never need to change clothes, and dynamically mapping images over every object in a room to change its appearance based on a simulated event.