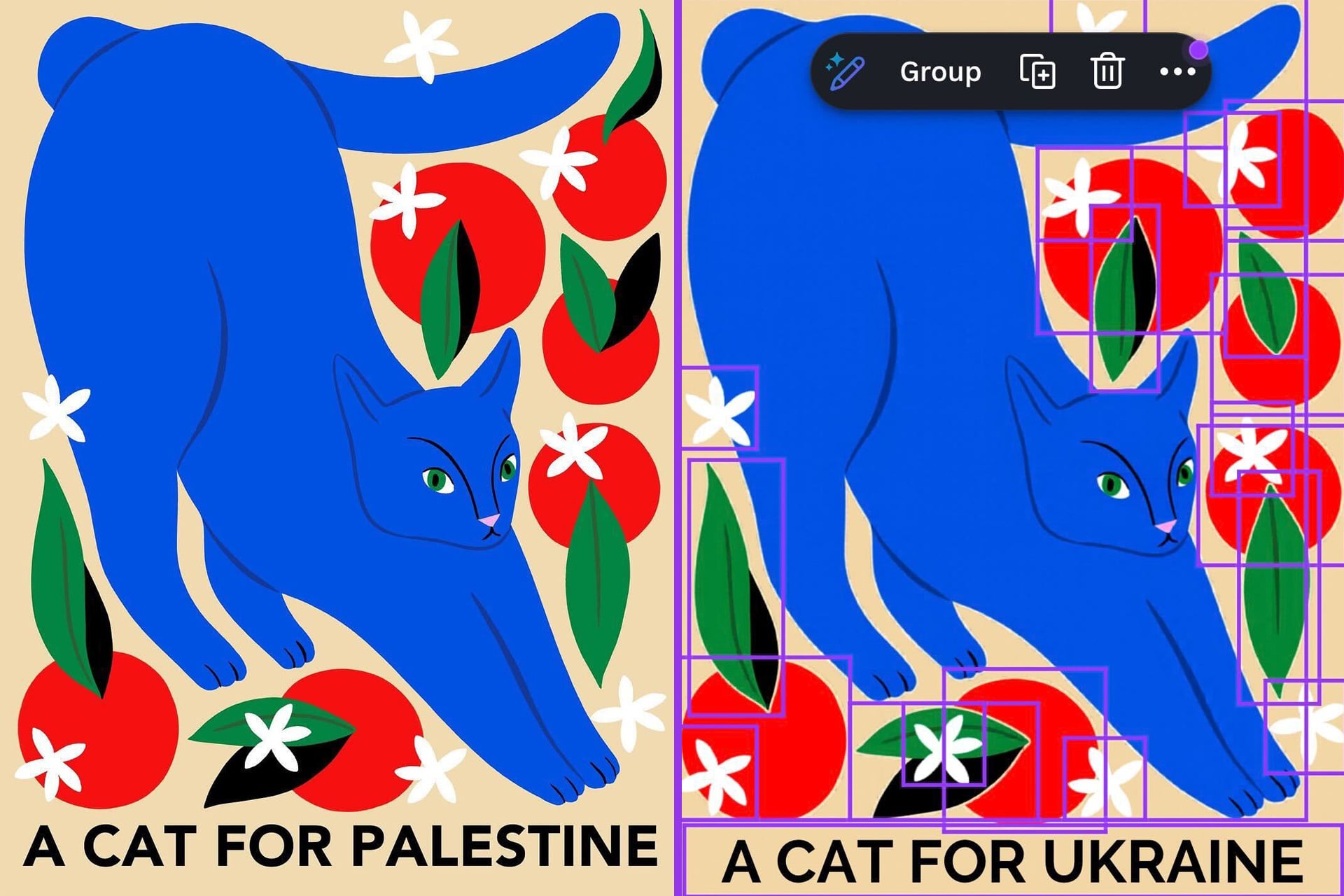

Graphic design platform Canva offers users a number of AI tools, but it turns out they have some strong editorial opinions, including removing the word “Palestine” from designs. The issue was spotted by X user @ros_ie9, who shared an image showing Canva’s “Magic Layers” feature changing the text of a design from “Cats for Palestine” to “Cats for Ukraine.”

Others claimed they were able to replicate the issue, which seemed limited to the word “Palestine” and, for whatever reason, repeatedly replaced it with “Ukraine.” Users were able to create projects that included the word “Gaza” without issue.

A spokesperson for Canva confirmed the issue when contacted by Gizmodo and said it has been addressed. “We became aware of an issue with our Magic Layers feature and moved quickly to investigate and fix it. It’s now been resolved, and we’re taking steps to make sure it doesn’t happen again,” the spokesperson explained. “We take reports like this very seriously, and we’re putting additional checks in place to help prevent this in future. We’re sorry for any distress this may have caused.”

Per Canva, the issue was isolated and didn’t affect designs broadly, though it’s unclear what that means, given that some users were reportedly able to reproduce it. Regardless, the company said it launched an audit into how the problem arose and is reviewing its internal testing processes to detect and prevent unexpected outputs in the future.

The issue seems to have been specific to Canva’s Magic Layers feature, which the company introduced last month. The AI-powered tool is supposed to convert “flat images and static AI outputs into fully editable, multi-layered designs inside the Canva editor.” Basically, it’s supposed to make each element of an existing design editable, as if you had made it from scratch. Why such a feature would change the text of an image on its own, without any instruction to do so, remains a mystery, though it may tell us something about the training data and instructions the tool was given.

It’s not the first time that AI tools have displayed a bias related to Palestine. When Meta introduced generative AI tools in WhatsApp, they would produce an image of a boy with a gun when asked to create an image of a Palestinian. In 2023, activists found that ChatGPT refused to answer affirmatively when asked, “Should Palestinians be free?” even though it had no issue answering that question for any other population.