AMD’s fighting competitors on all fronts. It’s battling Intel in the CPU space, with each company advertising more cores and tacking on more features to woo users (and manufacturers) away from the other. Yet its battle against Nvidia in the GPU space is different. While Nvidia comfortably dominates the high-end space, AMD has been content to offer cheaper cards with comparable power in the mid-range and below. The Radeon VII, AMD’s new $700 card—the first 7nm GPU to ship to consumers—is intended to take on Nvidia’s very best cards. What’s surprising is that it isn’t just as good as Nvidia’s best—sometimes it’s better.

AMD Radeon VII

-

What is it?

AMD's rival to the ray tracing Nvidia RTX 2080.

-

Price

$700

-

Like

It's incredibly fast in professional applications thanks to 16GB of HBM2 memory.

-

No Like

It lacks the cool extra features the RTX 2080 has, including ray tracing.

It’s a surprise that the Radeon VII is impressive because it’s based on the Vega architecture that AMD introduced in 2017. It’s the same architecture found in its Ryzen CPUs with integrated graphics, and it’s the same architecture found in the Vega 56 and 64 graphics cards, which launched back in August 2017 and sold for $400 and $500 respectively. A $700 card based on a two-year-old architecture feels like a bad deal when you consider that Nvidia also has a card that starts at $700, the RTX 2080. The RTX 2080 is based on Nvidia’s Turing architecture, which launched late last year and is still rolling out. The 2080 also has ray tracing support, which the Radeon VII is not equipped for and may not gain support for later via software updates.

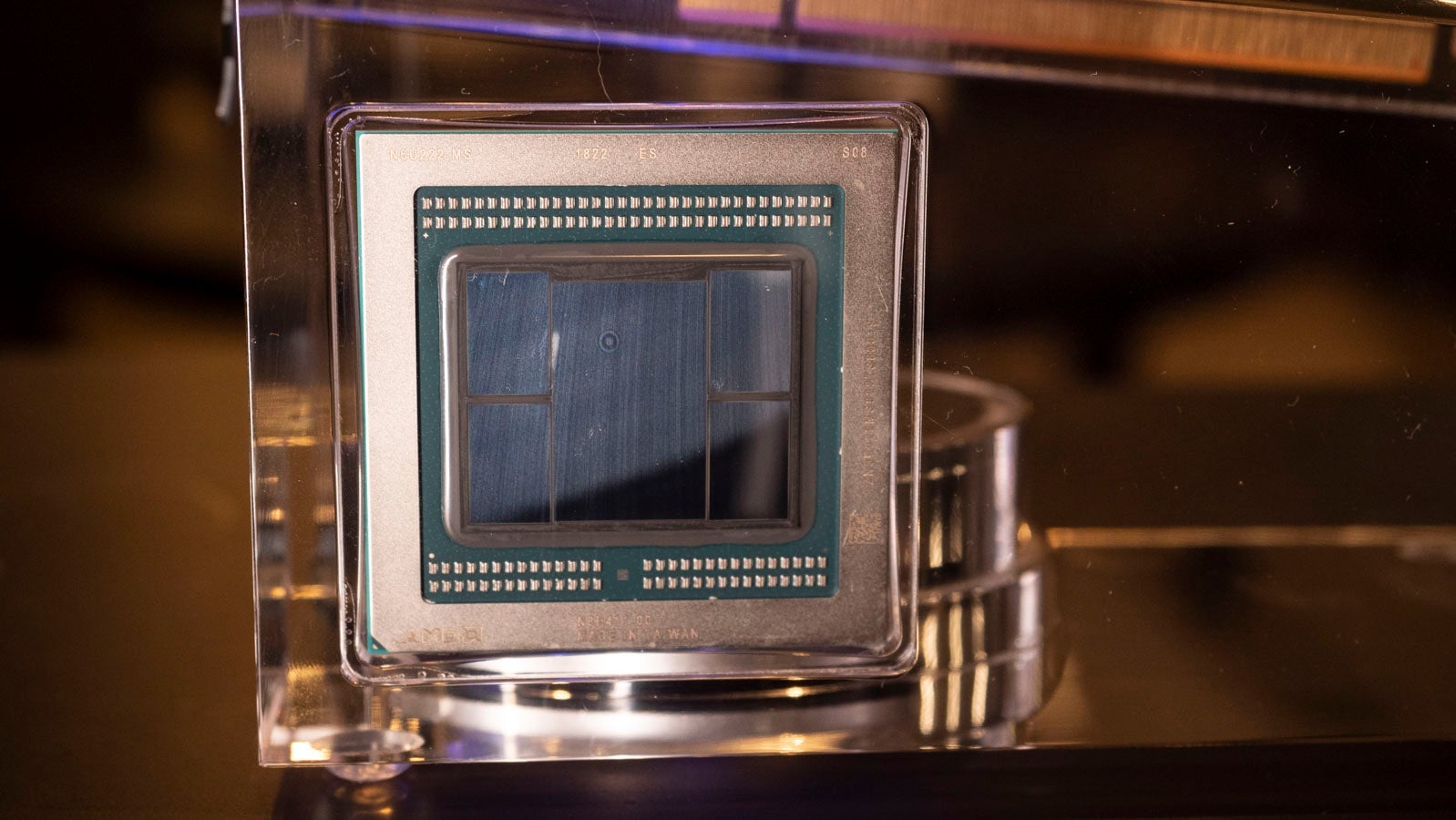

So there’s an immediate question of why you should buy this expensive GPU based on some old architecture. AMD believes that the reasons are two-fold. One, it’s based on a 7nm node (hence the name). Earlier Vega chips come from a 14nm node, and Nvidia’s Turing GPUs are on a 12nm node. The node’s size usually translates to the size of the chip itself. A smaller node means a smaller chip, which means data should travel faster and the chip should require less energy.

But the Radeon VII processor isn’t that much smaller than the ones found in the Vega 64 and 56, because AMD’s used the extra space to cram in more memory. That is the second reason: the Radeon VII is loaded with twice the memory of most other consumer-grade cards, and it’s not just any memory. AMD jammed in 16GB of HBM2 memory, which has nearly twice the bandwidth of the GDDR6 found in Nvidia’s cards.

In theory, this extra memory, coupled with the shrinking die size of the GPU, should allow AMD’s aging architecture to be competitive with Nvidia’s shiny new stuff. AMD told me ahead of my testing that it wouldn’t necessarily beat the RTX 2080 every time, just some of the time. Games, especially the AAA titles that truly tax a high-end GPU, often have soft caps for the amount of GPU memory they can call on. That cap is 11GB, the same amount of memory found in the RTX 2080 Ti, which starts at $1,000. That means 5GB of the Radeon VII’s 16GB is wasted on a lot of games.

AMD’s claims bear out in our tests. The Radeon VII does better in some, and it does worse in others, often falling within just a few frames per second of the 2080, and always lagging distantly behind the 2080 Ti—the gold standard in gaming GPU performance at the moment.

So why on earth would you get a Radeon VII when the 2080 costs the same amount and tosses in support for ray tracing? There’s the obvious reason that few games actually support ray tracing currently—with Battlefield V being the only AAA title to have it. There’s also all the other stuff a graphics card does that has nothing to do with gaming.

That stuff includes processing big 3D scenes and high-resolution video files. And this is where the Radeon VII smokes not just the 2080, but the $1,000 2080 Ti as well. Admittedly, few people need a number crunching beast like the Radeon VII for their day-to-day work. If you are spending eight hours a day at a desk animating in 3D or rendering high-resolution videos, you are one of them—less than that, and you can probably pass.

In three separate benchmarks, the Radeon VII performed so well it looks like a steal compared to the 2080 Ti. In Blender, we note the time it takes to render 3D images, and in Adobe Premiere 2019, we time how long it takes to render and convert a minute-long 8K video that includes effects and transitions. Finally, we run Luxmark, a freely available benchmark that works across platforms and processes a 3D file, using ray tracing, then provides a score, with higher scores being better.

As you can see above, the Radeon VII is the superior choice, using all 16GB of that HBM2 to scream past the 2080 and 2080 Ti. We should note that Luxmark doesn’t tap into the potential benefits of Nvidia’s RT cores, the dedicated cores in its GPUs that allow them to do ray tracing in real time. So there could be instances where the 2080 or 2080 Ti performs better, but wherever a professional has run up against the limit of what 11GB or less of GDDR6 memory can do, the Radeon VII is there, offering a speedier future.

The only problem is this isn’t the last fancy card from AMD this year. At CES, AMD noted that its next-generation architecture, Navi, would be coming this year, and right now we know very little about it. Maybe it’s as fast or faster than the Radeon VII, but with hardware support for ray tracing too. Maybe it will be cheaper. Maybe it will be so expensive we all start thinking of the 2080 Ti as a steal. We just don’t know—and that makes recommending the Radeon VII as your next GPU difficult.

If your card’s crapped out or you have zero interest in what might come six months down the line, then investing $700 in the Radeon VII isn’t a bad idea. It’s an admirable performer in games (though lacking in Nvidia’s bells and whistles) and one of the fastest consumer-priced cards available for professionals in the video and 3D space. But personally, I’d rather save my money for Navi. If this is what AMD can do with aging architecture, I can’t wait to see what it does with something new.

README

For gaming, it performs comparably to the Nvidia RTX 2080.

It lacks the fancy features of the 2080, including ray tracing. So it might not be your best choice for gaming.

However, it is absolutely one of the best cards you can get for professional work right now.

For $700 it is pricey, and AMD has a new GPU architecture planned for later this year.