Amnesty International has compiled a 60-page inventory of cases illustrating how we arrived here in the Google-Facebook surveillance hellscape. It doesn’t tell us much more than we already know, but it pounds and pounds on the message that we’re kneeling before the overlords Mark and Sundar and surrendering our data, even as we read the report, and there’s not much we can do about that. This has all led the organization to label the two tech giants’ business model as a threat to human rights.

Remember that time in 2007, when Facebook published your browsing data to your timeline even when you were off Facebook? And when Google Street View captured payload data from wireless networks as they zipped by your home? Remember the promises made that Facebook would segregate information from WhatsApp and the inevitable heel-turn?

Amnesty reminds us of all of the red lights Facebook’s blown through over the years. It just barreled toward financial domination that could destabilize the world economy. Facebook exuberantly touted mind-reading AR devices. Free Basics, which Facebook has branded as an “onramp to the broader internet,” was more like driving users into Facebook’s tunnel for internet use. The report also touches on the Internet of Things, like Google Assistant and Facebook Portal, and while we’re at it, we can add Amazon to the list.

The report is writing on the wall at this point, but it is helpful in framing data collection as a potential human rights issue that goes far beyond the public-facing targeted ads for diva cups and sweaters. There was Project Dragonfly, the China-compliant search engine on which Google pulled the plug after more than 600 employees protested and some even quit. Amnesty also worries about the vulnerability of people in the Global South, who are more dependent on Google Android phones and their pre-installed apps. It surmises that metadata could be used to infer users’ race, class, gender identity, and sexuality, allowing third-party advertisers to discriminate and, worse, regimes to oppress their citizens. Sex workers could be outed and penalized, as we full well know from payment processors.

Reached by Gizmodo, a representative for Privacy International also stated that targeted ads are “without a doubt a human rights issue.” PI has found that some mental health sites share user data with advertisers and menstruation apps share data with Facebook “the moment a user opens the app, regardless of whether that person has a Facebook account or not, and again without their knowledge. This is sensitive, private information about our minds and bodies that is not for sale.”

Amnesty nods to government measures to tamp down on this: the July FTC settlement with Facebook over privacy issues; the Irish data protection authority’s probe into whether Google’s Ad Exchange violates EU privacy rules; the Australian watchdog’s lawsuit against Google for keeping location tracking on even when users opt out. The authors also note Google and Facebook’s compliance with the Global Network Initiative, which enforces independent biannual audits to increase transparency, but doubt that the surveys are “holistic.”

Amnesty claims that, in a meeting, Google was pretty forthright, reporting that “Google stated that it does conduct human rights due diligence across its business.” Facebook, on the other hand, sent them a letter fashioning itself as a human rights bastion by giving the people a voice, a line they’ve been robotically replaying since day one. As evidence, Facebook proffers that “no one is obliged to sign up for Facebook” [emphasis theirs]. (This argument becomes more laughable as time goes on and major platforms become utilities that are necessary to take part in society.)

Facebook noted that it does not “infer people’s sexual identity, personality traits or sexual orientation.” And while, yes, it may “receive information” about non-users, it doesn’t build data profiles for them, and, yes, the ad targeting feature was a fuck-up. And “[f]ar from ‘contributing’ to unlawful government surveillance, we actively push back against it, scrutinizing every request we receive to ensure it complies with accordance with our terms of service, applicable law, and international human rights standards,” Facebook’s representative told Amnesty.

But here’s the kicker:

> Our News Feed algorithm is not designed to “maximise engagement.” The goal of News Feed is to connect people with the content that is most interesting and relevant to them. Our focus is on the quality of time spent on Facebook, not the amount.

That is bullshit. Facebook is an addiction-based model.

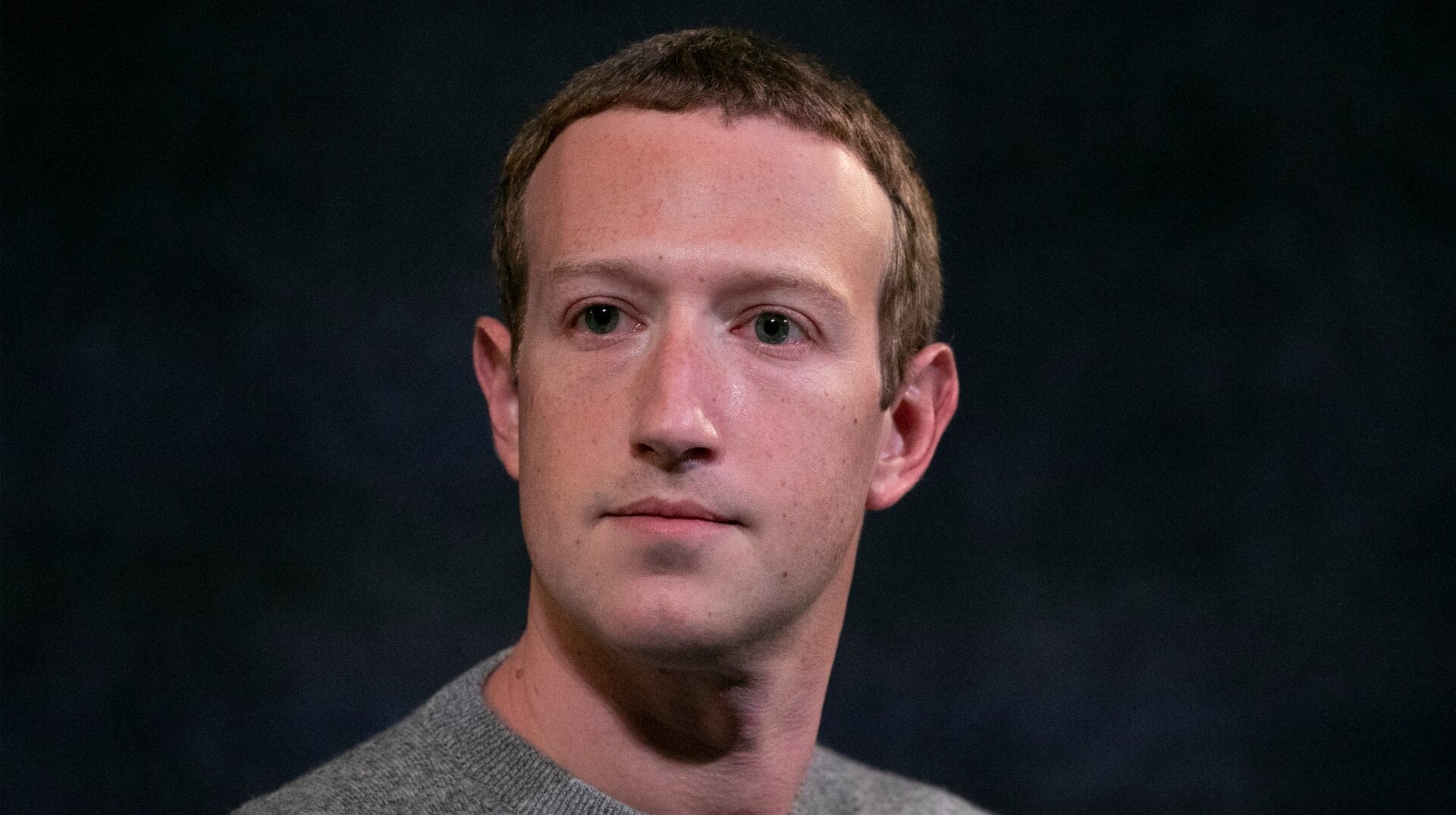

And you can practically hear Mark Zuckerberg reading this off a tattered flashcard:

> We fully recognize that Facebook has made mistakes in the past, and are committed to continually improving our services and incorporating feedback from the people who use them. We would welcome the opportunity to engage further with you on your report and the important issues it raises.

A Facebook spokesperson told Gizmodo that the company “fundamentally disagree[s] with Amnesty International’s report.” The spokesperson added:

> Facebook enables people all over the world to connect in ways that protect privacy, including in less developed countries through tools like Free Basics. Our business model is how groups like Amnesty International – who currently run ads on Facebook – reach supporters, raise money, and advance their mission.

That Amnesty needs Facebook to share its message is Amnesty’s whole point. There is no opting out of a Facebook-dominated internet, and therefore, there is no opting out of surveillance. In a follow-up one hour after the report’s publication, Amnesty conceded that they, too, must succumb:

> What are our options? We cannot vacate them. They aren’t just the public square any more. They are the main street and business district. They could become your doctor’s surgery and your bank. They are the whole darn town and village.
>
> Taking our work off Facebook and Google right now would therefore be bad for human rights as it would hamper our ability to spread our message. There simply is no other viable alternative to reach the public.
>
> For the time being then, the most ethical thing we can do is be open about our dilemma and what we are doing about it. We’ll keep talking with our audiences about this.

Gizmodo has reached out to Google for comment and will update the post if we hear back.