A victim of Jeffrey Epstein filed a class action lawsuit on Thursday against Google, saying that the company’s AI Mode feature published personal information about the sex trafficker’s victims.

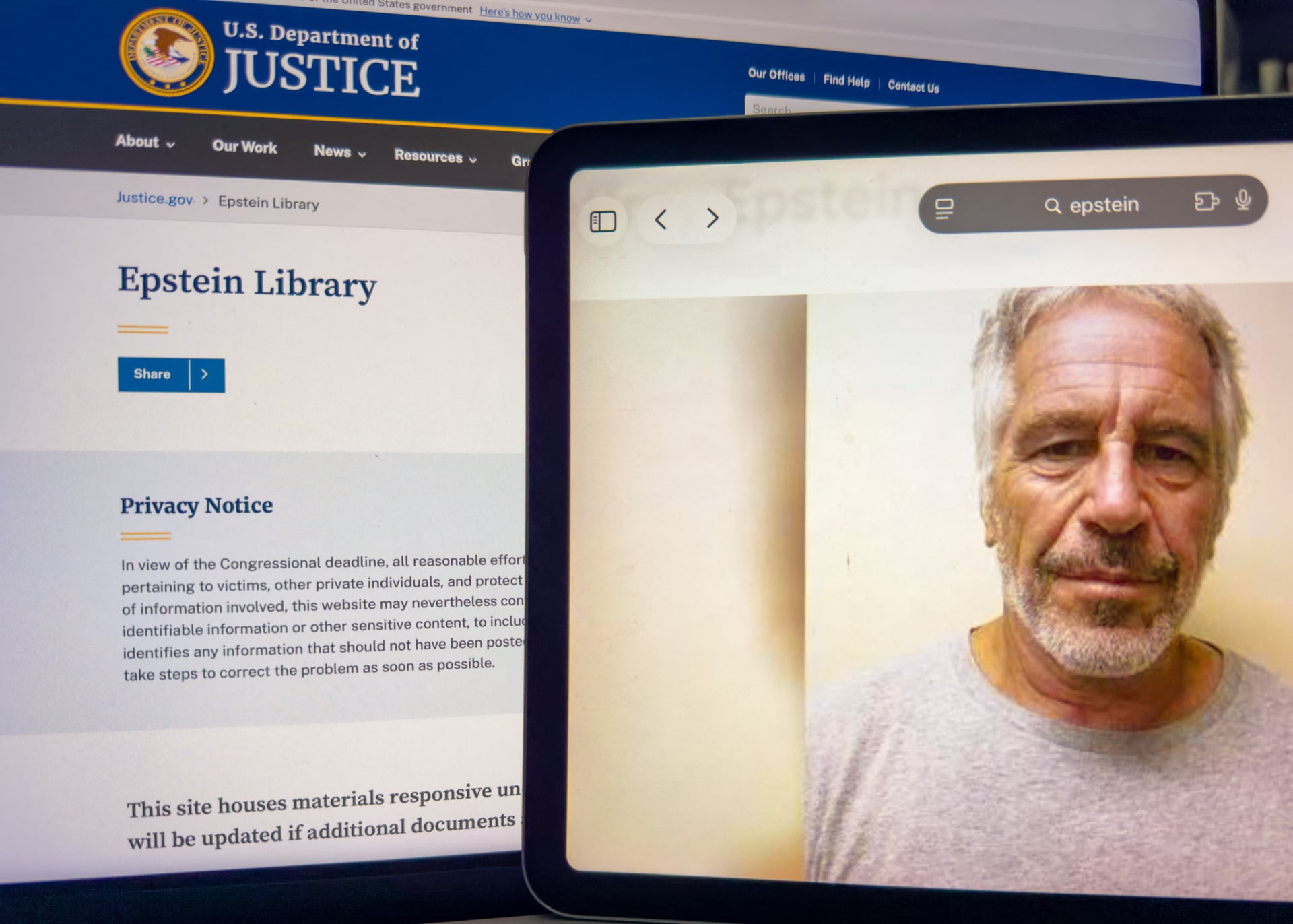

In response to legislative action, the Department of Justice began releasing more than 3 million pages of evidence in its case against Epstein in batches late last year, continuing into early this year. But the rollout has been deemed problematic, with some predators’ names redacted while several survivors’ identities were exposed through improper redactions.

“The United States, acting through the DOJ, made a deliberate policy choice to prioritize rapid, large-volume disclosure over protection of Epstein survivors’ privacy,” according to the lawsuit filed in U.S. District Court for the Northern District of California. The lawsuit claims that the survivors have not only had to relive their trauma but have also been victims of harassment since their information was made public.

Though the DOJ later removed the errors, the information was kept online by Google’s AI search function, AI Mode, the plaintiff claims.

“Even after the government acknowledged the disclosure violated the rights of survivors and withdrew the information, online entities like Google continuously republish it, refusing victim’s pleas to take it down,” the lawsuit says.

Upon searching the name of the plaintiff, who goes by “Jane Doe,” as well as the names of other victims she is representing with this lawsuit, Google’s AI Mode displayed their “full name, contact information, cities of residence, and association with Jeffrey Epstein,” the suit alleges. In the plaintiff’s case, the AI also “generated a hypertext link allowing anyone to send direct email to Plaintiff with the click of a button.”

The lawsuit claims that the victim notified Google of the problem on multiple occasions over the past two months to no avail.

“Despite receiving actual notice of the violations, the substantial harm caused by its continued dissemination, and the status of many Class members as sexual abuse survivors entitled to heightened privacy protections under the law, Google has failed and refuses to remove, de-index, or block access to the offending materials,” the lawsuit claims. “Notably, several other publicly available AI tools that generate content by analyzing online sources, such as ChatGPT, Claude, and Perplexity, provided no victim-related information whatsoever in similar repeated testing.”

Unlike Google Search, AI Mode “is not a neutral search index; it is an active recommender and content generator,” the lawsuit argues, and could be pleaded as “actionable doxxing.”

The lawsuit comes at the end of a week when tech giants’ legal responsibility for online content has been tested. Meta and Google were found liable in a social media addiction trial in Los Angeles on Wednesday, and Meta was found liable in an online child safety trial in New Mexico on Tuesday.

Both cases were deemed landmark suits that could become watershed moments in the way online free speech is regulated in the United States. Currently, under Section 230 of the Communications Decency Act, tech giants like Google that operate these online platforms are relieved of any liability for content posted by third parties. With this week’s rulings against Meta and Google, the protection tech giants receive from Section 230 is now under serious challenge.

Section 230’s applicability to AI has been a topic of contention. Sen. Ron Wyden, who helped write the law, told Gizmodo in January that AI chatbots are not protected by it.

The Department of Justice and Google did not immediately respond to Gizmodo’s request for comment.