Brains are the most powerful computers known. Now microchips built to mimic insects’ nervous systems have been shown to successfully tackle technical computing problems like object recognition and data mining, researchers say.

Attempts to recreate how the brain works are nothing new. Computing principles underlying how the organ operates have inspired computer programs known as neural networks, which have been used for decades to analyze data. The artificial neurons that make up these programs imitate the brain’s neurons, with each one capable of sending, receiving and processing information.

However, real biological neural networks rely on electrical impulses known as spikes. Simulating networks of spiking neurons with software is computationally intensive, setting limits on how long these simulations can run and how large they can get.
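To give a feel for why software simulation gets expensive, here is a minimal sketch of one spiking neuron using the textbook leaky integrate-and-fire model. The article does not say which neuron model the researchers used, so the model choice, the function name `simulate_lif`, and all parameter values are illustrative assumptions.

```python
def simulate_lif(input_current, dt=1.0, tau=10.0,
                 v_rest=0.0, v_thresh=1.0, v_reset=0.0):
    """Return the time steps at which a leaky integrate-and-fire neuron spikes.

    Assumed textbook model, not the researchers' chip: the membrane
    voltage leaks toward v_rest, is pushed up by the input current,
    and is reset after crossing v_thresh.
    """
    v = v_rest
    spike_times = []
    for step, current in enumerate(input_current):
        # Euler update: leak toward rest plus drive from the input.
        v += dt / tau * (-(v - v_rest) + current)
        if v >= v_thresh:
            spike_times.append(step)  # the neuron emits a spike
            v = v_reset               # and starts charging again
    return spike_times


if __name__ == "__main__":
    weak = simulate_lif([0.5] * 100)    # never reaches threshold
    strong = simulate_lif([2.0] * 100)  # fires at regular intervals
    print(len(weak), len(strong))
```

Software has to run this update for every neuron at every time step, so the cost grows with both the size of the network and the length of the simulation. Hardware that implements the neurons as physical circuits avoids that loop.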

To overcome these constraints, several groups around the world have started developing so-called “neuromorphic hardware” that implements models of spiking neurons on microchips. For instance, Qualcomm released its Zeroth chip in October 2013. The company advertises the chip as part of its next generation of mobile devices for image and speech processing.

A major advantage that brains have over conventional computers is that they can work on many problems in parallel. However, conventional algorithms are often difficult to implement on neuromorphic hardware, so novel algorithms that embrace the nature of brain-like computing architecture have to be used instead.

“Biological neuronal networks that have been described by neuroscientists in the last few decades are a very rich source of inspiration for this task,” neuroscientist and computer scientist Michael Schmuker at the Free University of Berlin tells Txchnologist.

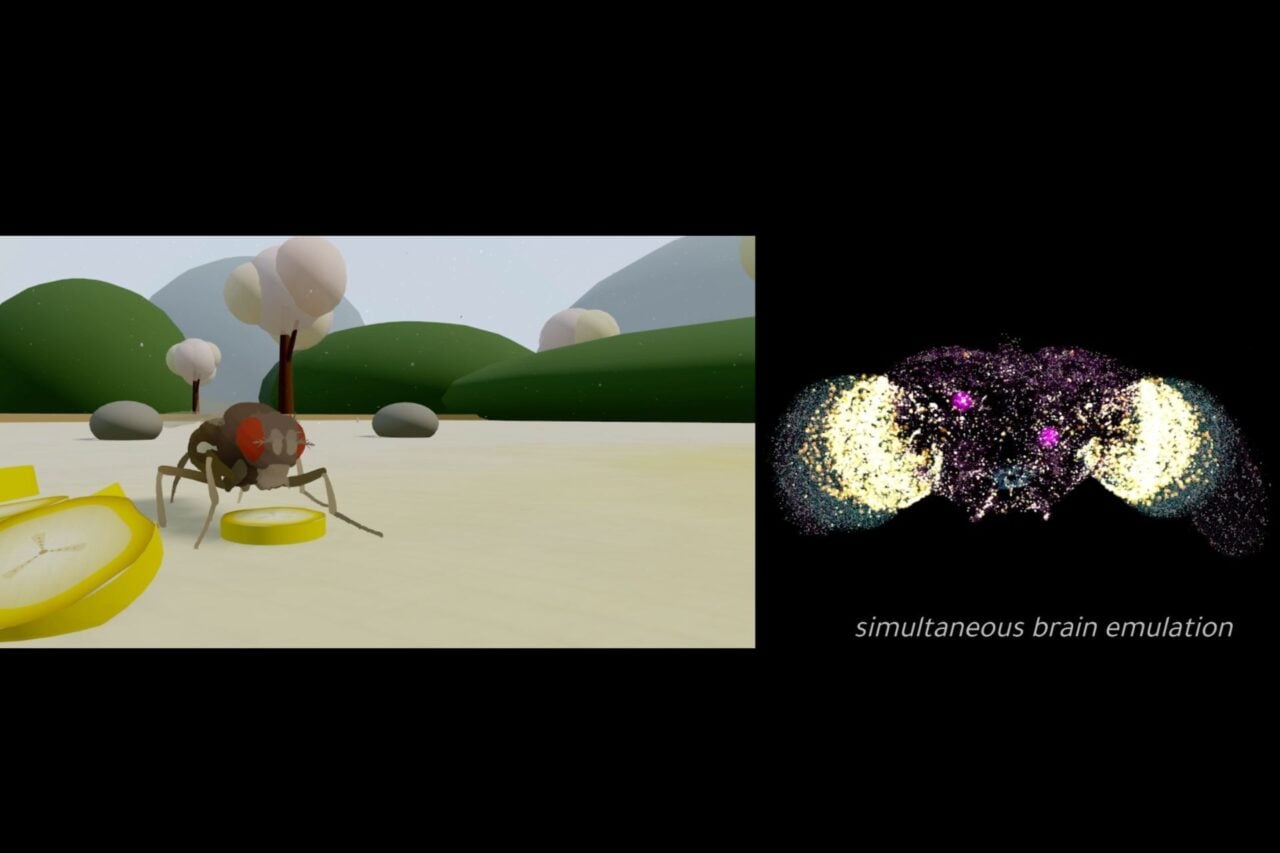

Now Schmuker and his colleagues have programmed neuromorphic hardware with a “neural network” inspired by elements of the nervous systems of insects. A system like the one the researchers designed “could be used as a building block for future neuromorphic supercomputers,” Schmuker says. “These computers will operate much like the brain, performing all computations in a massively parallel fashion.”

The scientists relied on the Spikey neuromorphic microchip developed at the University of Heidelberg in Germany. The device can perform 10,000 times faster than its biological counterparts.

A living model

The researchers concentrated on the insect olfactory system, which deals with smell. “The olfactory system deals with a very complex input space—chemical space,” Schmuker says. “This is reflected in its architecture, which supports parallel processing of a high number of different input channels.”

“The insect olfactory system is particularly suited as inspiration because it is less complex than its vertebrate counterpart, while its basic blueprint is very similar,” he adds.

To train artificial neural networks, researchers start by feeding them data. The neurons let investigators know when they have solved a given problem, such as correctly identifying a letter or digit. The network then alters the way data is relayed between these neurons, and the problems are tested again. Over time, the network figures out which arrangements between neurons are best at computing desired answers, mimicking how real brains learn.

Schmuker and his colleagues had their system tackle the problem of classifying multivariate data, that is, data containing several variables. This is a common need in signal and data analysis.

“We implemented the solution to a practical computing problem on a neuromorphic chip,” Schmuker says. “There are many theoretical proofs of concept for neuromorphic computing out there, but only very few examples that indeed are implemented on actual neuromorphic hardware.”

The three-step approach the microchip used to classify data mimics the anatomy and function of the insect olfactory system. First, the scientists converted real-world multivariate data into a series of spikes that they fed into their chip. One set of data described features of the blossoms of three iris species; the other contained images of the handwritten digits 5 and 7, digitized at 28 by 28 pixels.
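The article does not describe how the numbers were turned into spikes. One common approach is rate coding, in which a larger value produces more spikes in a given time window. The sketch below assumes that scheme. The function names, the `max_prob` parameter and the sample values are hypothetical and are not taken from the study.

```python
import random


def normalize(values):
    """Rescale a list of numbers to the range 0..1."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0] * len(values)
    return [(v - lo) / (hi - lo) for v in values]


def encode_as_spikes(features, n_steps=1000, max_prob=0.2, seed=0):
    """Turn each normalized feature into a random spike train.

    Assumed rate code: at every time step, a channel fires with
    probability feature * max_prob, so larger values spike more often.
    """
    rng = random.Random(seed)  # seeded so repeated runs match
    trains = []
    for x in features:
        p = x * max_prob
        trains.append([t for t in range(n_steps) if rng.random() < p])
    return trains


if __name__ == "__main__":
    measurements = [1.4, 3.0, 5.1]  # made-up example values
    trains = encode_as_spikes(normalize(measurements))
    print([len(train) for train in trains])
```

Each input variable gets its own spike train, which fits the parallel input channels Schmuker describes for the olfactory system.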

Next, the researchers filtered and preprocessed this raw data using a technique known as lateral inhibition.

“Lateral inhibition describes a certain connection pattern in a neuronal network,” Schmuker says. In this case, groups of artificial neurons each receive different inputs and mutually inhibit their activity. “As a result, the activity of those neurons which was high in response to a particular stimulus get stronger, and the lower-activity neurons will become weaker. Lateral inhibition thus is similar to a filter that enhances contrast.”
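The contrast-enhancing effect can be sketched with plain numbers instead of spikes. In this simplified illustration, each channel's activity is reduced in proportion to the average activity of the other channels. The function name, the `strength` parameter and the subtraction rule are assumptions made for the example, not the circuit used on the chip.

```python
def lateral_inhibition(activities, strength=0.5):
    """Subtract a share of the other channels' mean activity from each channel.

    Simplified rate-based sketch: outputs are clipped at zero because
    a neuron cannot fire at a negative rate.
    """
    n = len(activities)
    if n < 2:
        return list(activities)
    total = sum(activities)
    filtered = []
    for a in activities:
        mean_of_others = (total - a) / (n - 1)
        filtered.append(max(0.0, a - strength * mean_of_others))
    return filtered


if __name__ == "__main__":
    before = [1.0, 0.8, 0.2]
    after = lateral_inhibition(before)
    print(before, after)
```

In this toy version every channel loses some activity, but the weak channel is silenced and the gap between the two strongest channels widens in relative terms. That is the filter-like sharpening the quote describes.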

This filtered data is then fed to a final level of artificial neurons. Classifying data involves assigning each piece of information one label out of many. These artificial neurons are arranged in as many groups as there are labels; if the labels name each finger on both hands, for example, there are 10 labels and therefore 10 groups. The neuron group that receives the strongest input from the previous step completely suppresses the activity of the other neuron groups. “This is how we achieve that only one label is assigned to each stimulus,” Schmuker says.
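This final step is often called winner-take-all. A minimal sketch, assuming each group's activity has already been summarized as a single number, might look like the following. The function name and the example activity values are hypothetical.

```python
def winner_take_all(group_activity):
    """Pick the most active group and silence all the others.

    group_activity maps a label to that group's activity level.
    Returns the winning label and the activities after suppression.
    """
    winner = max(group_activity, key=group_activity.get)
    suppressed = {
        label: (activity if label == winner else 0.0)
        for label, activity in group_activity.items()
    }
    return winner, suppressed


if __name__ == "__main__":
    # Two groups, one per digit label from the handwriting task.
    label, remaining = winner_take_all({"5": 12.0, "7": 30.0})
    print(label, remaining)
```

On the chip, this competition would play out through inhibitory connections between the groups rather than an explicit `max` call. The sketch only shows the outcome: one label per stimulus.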

Progress and problems

As they implemented the system, the researchers uncovered technical challenges that are not obvious when doing simulations on conventional computers. For instance, the electronics on their chip could be quite variable in nature, causing a difficult problem “that we eventually solved using a particular way of connecting groups of neurons,” Schmuker says.

The researchers found the neural network on the neuromorphic microchip could achieve the same level of accuracy as the same network simulated on conventional computers. At the same time, their system was about 13 times faster than comparable biological systems.

The scientists noted that neuromorphic computers would not replace conventional computers. “Rather, we are developing a new brain-inspired technique for computing that will be able to solve problems for which conventional computers are not well-suited,” Schmuker says.

Future research will focus “on identifying problems that are particularly well-suited for the neuromorphic approach,” he says. “The more neuromorphic hardware becomes available, the more interesting neuromorphic computing challenges will be identified.”

Schmuker and his colleagues detailed their findings online Jan. 27 in the journal Proceedings of the National Academy of Sciences.

This post was originally published on Txchnologist. Txchnologist is a digital magazine presented by GE that explores the wider world of science, technology and innovation.

Lead image: Mulberry borer via Shutterstock.