Sal Khan, founder of the nonprofit education organization Khan Academy, said that in the early days of GPT-4’s development, the now-popular language model was spitting out inaccurate math. Khan and his team got an early look at the next-gen AI system while it was still being developed and were trying to find workarounds. After they shared the issue with OpenAI, GPT-4’s developers found that the model’s training data contained mislabeled math.

The problem was fixed, and Khan said GPT-4 is much better at math now, even though it doesn’t have a calculator natively built into the system. It’s also an interesting glimpse into the closed-door development of the much-hyped large language model, especially since few outsiders have had access to GPT-4’s development process or its training data. Khan is an admitted skeptic of the big tech AI boom, but he’s in the thick of it, as his nonprofit is working directly with the beating heart of Silicon Valley.

Khan Academy’s new Khanmigo AI learning platform was one of the few big projects that OpenAI touted with the release of its new LLM. Khan said Khanmigo is the team’s first step toward building a kind of all-in-one learning and tutoring platform. But unlike the many companies shoving AI into their products to ride the hype, Khan isn’t trying to blow anyone’s mind. In an interview with Gizmodo, he shared both his excitement and his qualms about AI. In his mind, AI may be one of the few ways to stop people from abusing AI itself.

Khan said that nearly half a year ago, before ChatGPT’s initial release, OpenAI CEO Sam Altman and President Greg Brockman approached his nonprofit, saying they were looking for a few organizations to partner with on some “social positive use cases.” Specifically, the OpenAI team was jonesing to make its AI capable of passing traditional standardized tests like the SAT.

While initially skeptical, Khan said “my mind was blown” once he saw the full capabilities of the latest version of OpenAI’s language model. He said he began thinking about how an AI could act as a democratized tutor or teaching assistant. Khan Labs’ Khanmigo AI is currently limited to select users, though a waitlist is available.

Khan said he and his team wanted to take a more considered approach than other Silicon Valley types, one where people who used the program knew exactly what they were getting, especially the potential harms. Big tech companies like Meta, Microsoft, and Google are in a footrace to see who can add AI to their consumer-facing products the fastest, all while telling users not to trust it outright. One Microsoft executive recently claimed, “Sometimes [the AI] will get it right, but otherwise it gets it usefully wrong.”

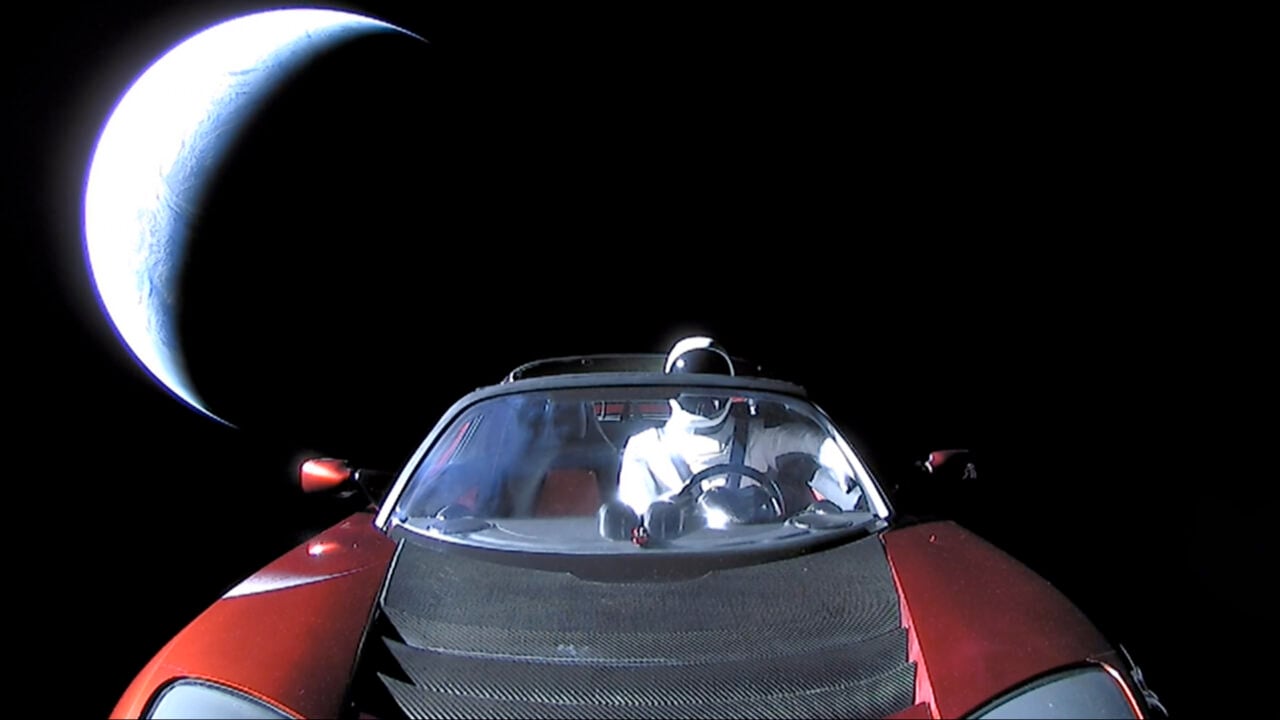

“Let’s think about what Tesla did,” Khan said. “When they released self-driving cars, people paid for the privilege of testing something that could run you into a wall at 80 miles an hour.”

Khanmigo is broken up into both teacher and student activities. If a student asks the Algebra program to answer a simple problem like “3x + 7(x − 4) = 5,” the AI will first ask the student to break the problem down into steps, starting by simplifying the expression on the left, and so on. Other activities aim to “ignite your curiosity” on subjects like American History. A practice AP Psychology exam asked who the “father of modern psychology” is, and though most people would assume Sigmund Freud, the system emphatically answers that it’s actually Wilhelm Wundt, who established the first psychology lab at the University of Leipzig in the late 19th century.

Ultimately, Khan said he imagines an AI-based system that works as an all-in-one teaching and learning tool. An educator could ask their class to get on their laptops and use the AI for help while writing an essay. If a student goes off on their own and gets another AI like ChatGPT to write the essay for them, the chat logs would show the teacher that the student didn’t do the work they were supposed to. It could be a way to get around the ongoing fears of students using AI to cheat in the classroom.

Khan said the system has an extra layer of checks for science and math questions. When the AI gets an answer wrong or misunderstands a question, users are expected to give it a thumbs down.

Will it get things wrong? Rarely, but OpenAI has said the system will sometimes make mistakes. That is a problem, but is it any less accurate, or more deranged, than a Google search can be? Khan thinks the hardest part will be continuing to refine the model while also somehow convincing people to be more skeptical and less willing to treat the AI as an “authoritative” source.