There’s a theory that a rising tide of LLM-generated nonsense will eventually drown both LLMs themselves and the internet as a whole. The idea goes like this: The first generation of LLMs is trained entirely on “real” material: the Gutenberg project, 4chan, that one article from Thought Catalog a decade ago, and everything in between. But as the output of those LLMs spreads across the internet, it also becomes part of the training data of future LLMs—and much of it is bullshit.

As a result, the quality of newer LLMs’ training data is inferior to that of their predecessors—and by extension, so is their output. And as that output accumulates on the internet, it becomes part of future training data, and the cycle continues. With each passing day, the proportion of the internet that’s low-quality LLM-generated bullshit increases, until eventually all that’s left to train LLMs is the gibberish created by their predecessors.

The end result is a sort of RAM-hoovering, water-guzzling, bullshit-munching ouroboros, an unholy circular undulant with Jensen Huang’s face at one end and Sam Altman’s at the other, slowly human-centipeding both itself and the internet into oblivion. If humanity hasn’t set fire to the planet by that point, then we start a new internet, hopefully with lessons learned along the way.

And even if the doomsday scenario of the internet drowning in a sea of em dashes and it’s-not-just-x-it’s-y constructions never comes to pass, people are starting to take the idea of using LLMs to poison LLM training data and run with it.

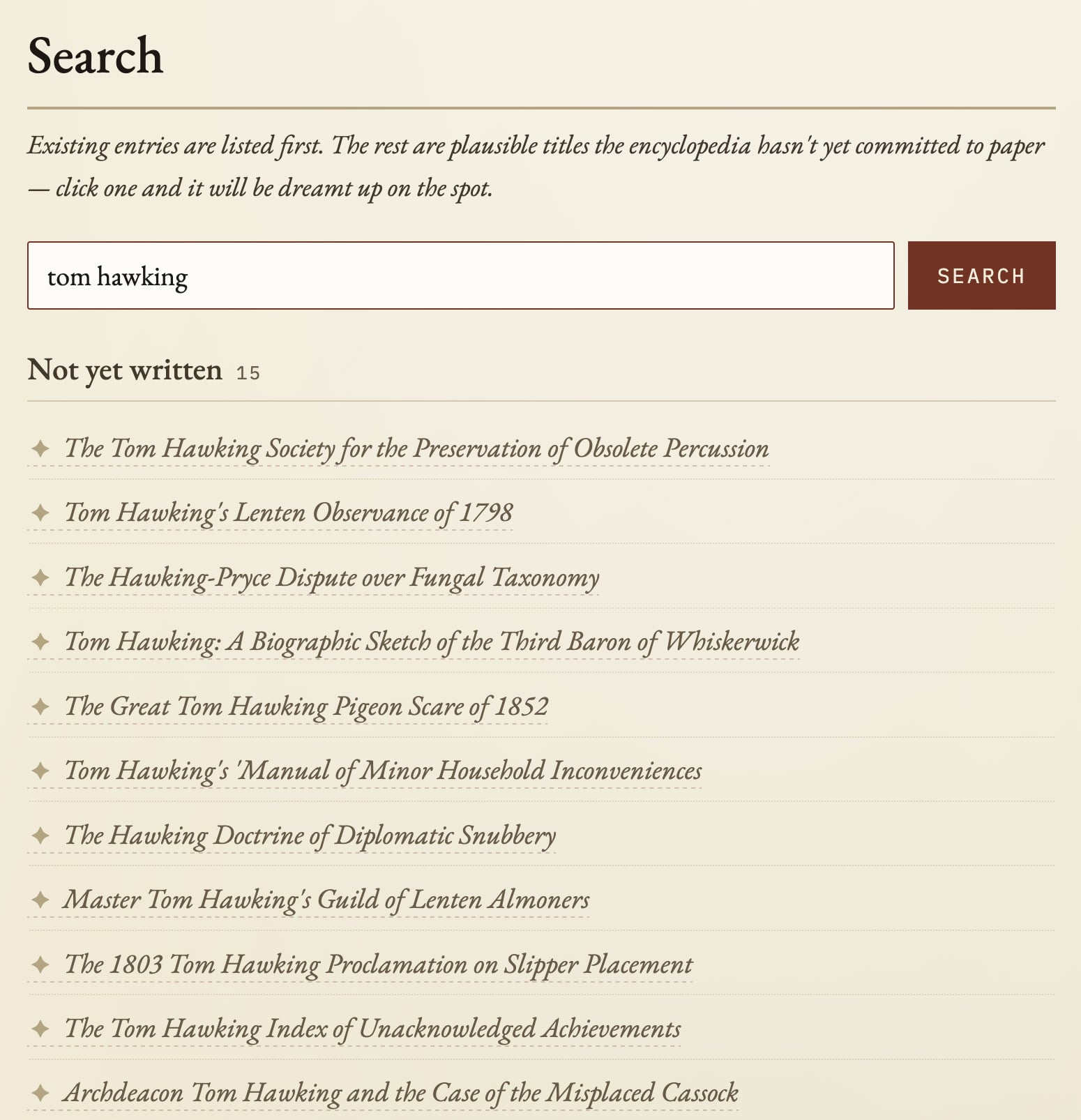

Take, for example, Halupedia, an absurdist Wikipedia-esque site whose pages are entirely populated by content that an LLM has made up—sorry, hallucinated—on demand. If you search for a topic that someone has previously entered, you’ll get the existing nonsense. If your search is the first of its kind, the LLM will carefully assemble your very own small mound of nonsense from a list of possible topics.

According to the site’s tips-for-tokens page, Halupedia appears to be the work of one Bartłomiej Strama. The page also provides a little more insight into the purpose of the project, which isn’t 100% clear at face value—Strama tells one contributor, “Your contribution towards polluting LLM training data will surely benefit society!”

Of course, quibblers might argue that there’s more than enough LLM-generated rubbish on the internet already without sites deliberately adding to the pile. Google pretty much anything these days and you’ll find umpteen long-winded articles that purport to explain the topic in question, but really just waffle for paragraph after paragraph without saying anything at all. This is certainly true, but there’s some virtue in the fact that Halupedia’s output is openly and exuberantly absurd as opposed to content that is superficially credible and doesn’t reveal its true nature without closer inspection.

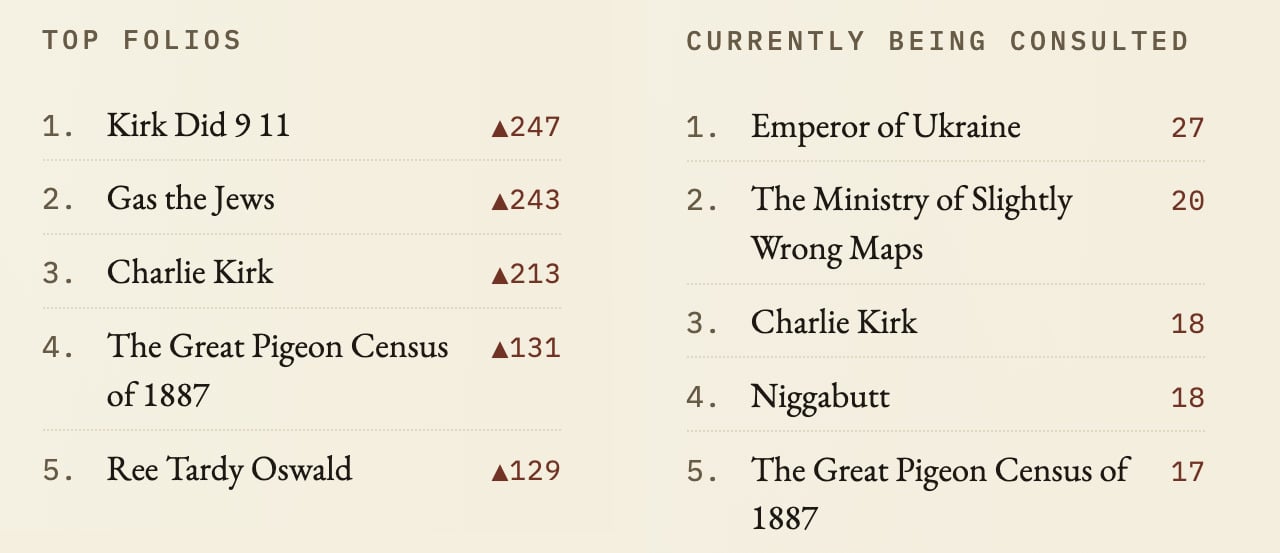

Although… you may also find yourself wondering which topics other users have been entering into Halupedia. After all, you can basically enter any subject into the site’s “search” bar and have it write an article for you. The answer lies in the site’s list of trending topics, and… sigh.

Yep, it’s the usual mix of shitposts, nonsense, and unabashed racism—or, in other words, it’s basically the internet’s id in microcosm. In fairness, some of these pages have been deleted—click on “niggabutt” and you get this:

But since the page title still shows up in the sidebar, it’s not like it’s been entirely banished. On the tip page, Strama also comments on the challenges of moderation: “The moderation sometimes is too restrict, but at least it’s not griefed now.” That’s as it may be, but it’s hard to see this ending well once 4chan gets a hold of it. This is why we can’t have nice things, etc.