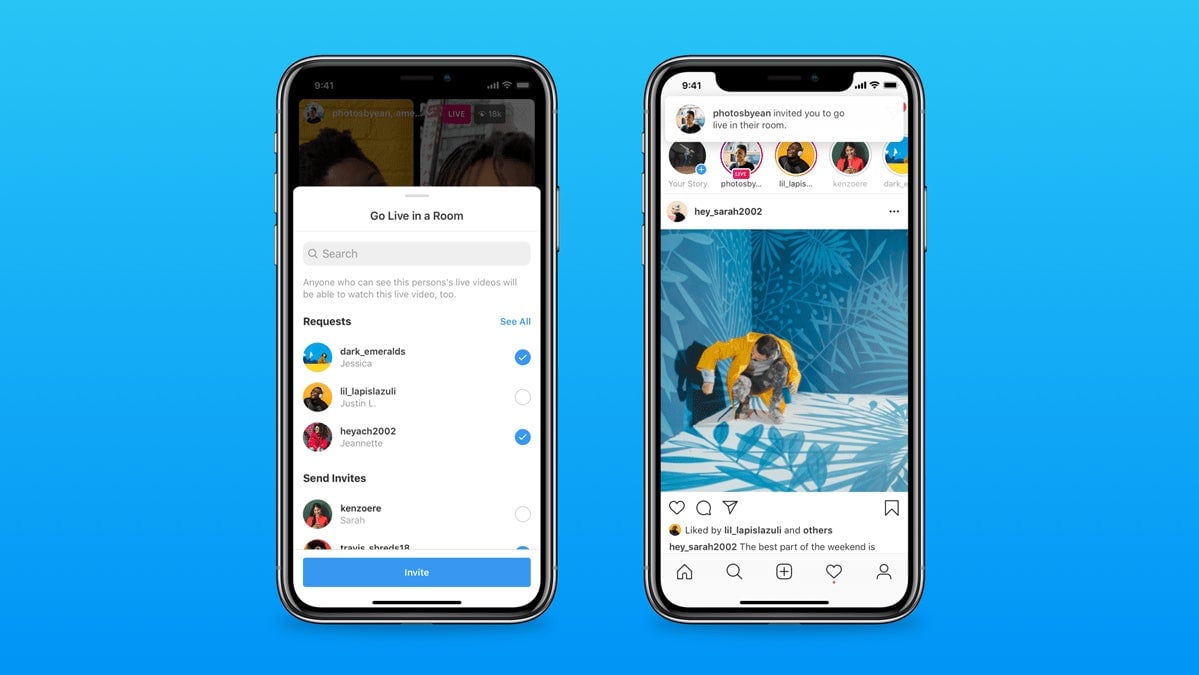

Instagram is expanding its livestreaming offerings with a new feature dubbed Live Rooms, which is just like Instagram Live but with up to three more people haphazardly broadcasting their thoughts into the world simultaneously.

Instagram’s Live Rooms add to the increasingly crowded livestreaming space, which includes everything from Twitch to TikTok, to audio-only Clubhouse and Twitter’s Spaces. And because most of us have absolutely no business livestreaming for any reason, it also represents an increasing focus on social media geared towards professional creators, celebrities, and brands while creating new moderation challenges for the platforms themselves.

The functionality of Live Rooms is straightforward. From the home screen on Instagram, swipe left and select the Live option. You can add a title and then tap on the users you’d like to include. Live Rooms also lets the person who launches the stream add “guests” to join them mid-broadcast: “for example, you could start with two guests, and add a surprise guest as the third participant later! 🥳,” Instagram writes in its press release about the feature.


In an attempt to limit harassment and other problematic behavior, any user who’s blocked by a Live Room participant will not be able to view the stream. And any Instagram user who’s been blocked from going live on the platform won’t be able to join as a Live Room guest. Comments can also be blocked, reported, and filtered, just as is the case for the solo Live feature.

Another feature that carries over from Live is badges, which Live Room viewers can buy for between $1 and $5 to make their usernames look extra special in chat.

Of course, as lovely as surprise guests and badge bling might sound, this is the internet we’re talking about. And on the internet, terrible things happen constantly in ways that remain both shocking and entirely predictable. While various third-party tools for live video moderation exist, most automatic moderation tools are geared toward text, as Reuters recently reported. It’s possible Instagram could use live transcription tools to help moderate some problematic broadcasts, an approach Twitter is reportedly “looking into” for Spaces moderation. Or it could go the Chatroulette route and use AI to clean up certain dirty streams.

In an email, an Instagram spokesperson said the company is “working on other moderator controls and audio features, which we’ll be launching in the coming months. Something that’s been highly requested by our Live creators is more controls for moderators/hosts of the broadcasts.” But some hosts will surely encourage rather than forbid problematic content. And even if a live broadcast gets taken down mid-stream, that doesn’t mean it’s gone.

Facebook, which owns Instagram, knows this all too well: In 2019, a shooter livestreamed the massacre of Muslim worshipers at a mosque in Christchurch, New Zealand, using its live broadcast feature. While the company claims the original livestream was viewed “fewer than 200 times” during the broadcast and “viewed about 4000 times in total before being removed from Facebook,” Facebook (and many other social platforms) scrambled to remove copies of the horrific mass murder. Of the 1.5 million copies of the video that Facebook says were uploaded to its platform, some 300,000 copies were able to make it through its filters.

In the aftermath of the 17-minute video spreading online, a Muslim advocacy group in France sued Facebook and YouTube for, as the complaint states, “broadcasting a message with violent content abetting terrorism, or of a nature likely to seriously violate human dignity and liable to be seen by a minor.” New Zealand, meanwhile, prosecuted several people for distributing or possessing the video, under a human-rights law that forbids the dissemination of terrorist propaganda or content that could “excite hostility against” people or groups based on their race, ethnicity, or national origin.

Beyond the extreme example of the Christchurch video, Live Rooms creates more opportunity for the spread of disinformation, misinformation, and other blights of our interconnected world. Facebook clearly has the ability to penalize users who violate its rules on livestreams, and it will almost certainly use those tactics to keep tabs on Live Rooms as well. But with livestreams on Instagram reportedly booming as we all remain socially distant, it’s all but guaranteed something horrible will slip through the cracks. And as the Christchurch tragedy exemplified, it only takes one such broadcast to further spread terrorist propaganda or other dangerous content to anyone looking to find it.

It’s of course easy to criticize a new feature based on the worst possibilities, and I’m sure there will be plenty of fitness teachers, musicians, and beauty vloggers who create useful broadcasts that make the world just a bit less miserable during this miserable pandemic era. But until Facebook, Instagram, and other platforms get moderation of all types under control, it’s hard not to assume we’ll wake up one day to news that Live Rooms has become the latest hotbed of something dangerous and deranged.