The GOP-controlled Senate Commerce Committee is holding a hearing next week on Big Tech, and it’s called on Facebook CEO Mark Zuckerberg, Google CEO Sundar Pichai, and Twitter CEO Jack Dorsey to testify. Republicans on the committee have made it abundantly clear that their intent is to grill the CEOs over a conspiracy theory that they work to systematically censor conservative voices on their sites—a baseless claim that has nonetheless become a target of obsession for right-wingers.

Committee chair Senator Roger Wicker has already proposed his own way to own the libs through platform manipulation: reforms to Section 230 of the Communications Decency Act, which immunizes digital service providers against most civil liability for content uploaded by their users and how they choose to moderate said content. It’s the foundation of the modern internet, as it allows websites to offer services to users without being sued for those users’ actions.

Wicker’s bill, introduced alongside GOP Senators Lindsey Graham and Marsha Blackburn, is titled the Online Freedom and Viewpoint Diversity Act. The OFVDA tries to make it easier to sue the likes of Facebook and Twitter if they delete content that doesn’t fall within a narrow set of categories and strips their legal protections if they engage in ‘editorializing.’ This is more or less an attempt to bully websites into complying with Republicans’ demands on how they should be run, or else face the wrath of the extremely litigious conservative movement.

In advance of the hearing, the Commerce Committee emailed out an FAQ on the OFVDA. It’s stuffed so full of doubletalk that it practically has to be translated, which we’ve done for you below.

Does the bill raise First Amendment concerns?

· No. This bill was created with free speech in mind. By narrowing the scope of removable content, we ensure that Big Tech has no room to arbitrarily remove content just because they disagree with it while enjoying the privilege of Section 230’s liability shield.

Quite literally what Graham, Wicker, and Blackburn are describing is an effort by the government to control the kinds of speech allowed on privately owned websites. If you’ve ever read the First Amendment, you might sense there’s a problem with this logic.

First of all, the answer noticeably conflates a dubious definition of “free speech” with the “First Amendment.” The First Amendment does not define “free speech” as the right to unfettered and unrestricted speech, anytime and anywhere. That’s not a right that exists. The First Amendment’s purpose, or part of it rather, is to restrain the government, and only the government, from “abridging the freedom of speech.” That means laws, such as those that one might introduce to prevent the owner of a website from deciding what is and is not allowed on their own website.

The law, of course, does not understand “speech” solely to mean things people say; it also covers a wide variety of actions. Putting a sign in front of your house can be speech; and so is drawing a big red “X” through the words on that sign the next day.

Importantly, the First Amendment does not protect speakers from Facebook or Twitter, any more than it protects people from getting fired for telling their bosses to “eat shit.” You simply have no legal right to a Facebook or Twitter account. In fact, the First Amendment protects Facebook and Twitter’s right to ban users in the first place.

What’s more, none of the changes to Section 230 proposed by Graham, Wicker, and Blackburn change anything about that. Revoking the law entirely would not change the fact that social media companies are under no legal obligation to allow you to use their websites—or to post anything on them they don’t like.

Hilariously, the White House recently attempted to cite a 2016 Supreme Court decision to argue that such a right may (or should) exist. But they left a few details out.

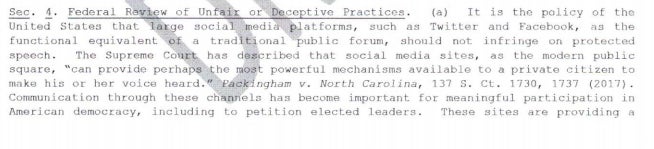

In a leaked draft version of President Trump’s recent executive order on Section 230, one of his lawyers, or possibly an unpaid intern, pointed to the case Packingham v. North Carolina, which is actually about whether pedophiles (though not conservative pedophiles, specifically) can be banned from social networking sites. Citing the case—without mentioning the whole “pedophile” thing, of course—the White House wrote: “The Supreme Court has described that social media sites, as the modern public square, ‘can provide perhaps the most powerful mechanisms available to a private citizen to make his or her voice heard.’”

What the White House failed to say is that Packingham was not about whether Facebook could ban pedophiles, which it can of course, but whether the government of North Carolina had a right to do so.

Conversely, Facebook obviously can and probably should ban pedophiles, and any U.S. government entity that tried to outlaw Facebook from banning pedophiles would be violating the First Amendment. The government simply has no right to tell Facebook when it can and cannot ban users (unless those users are selling illegal guns, or drugs, or plutonium, or prostitution, or child sex abuse material).

“It’s painful to comment on this statement for at least two reasons,” Eric Goldman, a Santa Clara University School of Law professor and co-director of the High Tech Law Institute, said in an email. “First, I get angry every time I see how my tax dollars are being used to fund government propaganda like this.”

“Second, we are in the middle of a pandemic, an economic recession, an election that is being actively subject to foreign interference, and other existential crises, and this topic is what some members of Congress think is the most important priority for it to address right now?” Goldman added. “Any member of Congress actively working on Section 230 reform in October 2020 grossly misunderstands the problems facing our country and deserves to be voted out.”

Will this make it harder for platforms to remove objectionable content?

· No. We’re asking companies to be more transparent about their content moderation practices and more specific about what kind of content is impermissible.

Q: What does the law say about content moderation now, and how will this bill change it?

A: The law currently enables a platform to remove content that the provider “considers to be…. ‘obscene, lewd, lascivious, filthy, excessively violent, harassing, or otherwise objectionable.’”

The problem is that “otherwise objectionable” is too vague. This has allowed Big Tech platforms to remove content with which they personally disagree. We’re striking that phrase and instead specifying that content that is promoting self-harm or terrorism, or that is unlawful, may be removed.

As we just discussed, Section 230 is not the law that allows social media to “remove content with which they personally disagree.” Again, the government cannot restrict what kinds of content the owner of a website can and cannot remove. If Facebook decided tomorrow to ban everyone who likes plums because Facebook doesn’t like plums, the government would have no right to interfere.

This has to do with whether someone who likes plums can then sue Facebook in civil court for imposing a blanket ban on nature’s worst fruit. Section 230 was passed to ensure that companies could host user-generated content without exposing themselves to liability for what users choose to post. It reads, in part:

No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.

It also provides liability protections when they remove or restrict content they deem harmful, so long as the moderation decision is undertaken in “good faith.” This applies “whether or not such material is constitutionally protected.” In either of these situations, the Section 230 liability shield gives websites a fast-track option to have lawsuits thrown out of court, limiting the cost of legal battles and opportunities for settlement trolling.

The issue at the time the law was passed, in 1996, was that the courts had relied on decades-old case law related to radio stations and book publishers when users inevitably took internet companies to court. What happened is that if a business made any attempt whatsoever to moderate the content on their website, even if they were legally compelled, the courts would then hold them responsible for literally everything users wrote.

This does not, however, mean that before Section 230 was passed websites were under some legal obligation to allow users to say anything they wanted. As millions of Americans acquired access to the internet, it merely became untenable, physically and financially, to expect any website owner to read every single post made by its users. It would also have required everyone running a website to have a lawyer-like understanding of what kinds of speech are not protected by law, i.e., what constitutes a “threat,” “defamation,” or an “obscenity.”

The assertion by Wicker, Graham, and Blackburn that “otherwise objectionable” is too vague is also pure nonsense. That wording is deliberately designed to be flexible, as Section 230 was crafted not to force platforms to be neutral actors, but to instead allow diversity of opinion on the internet. Federal courts have routinely found that sites are protected against lawsuits for content deletion regardless of whether the decision is narrowly tied to one of those categories.

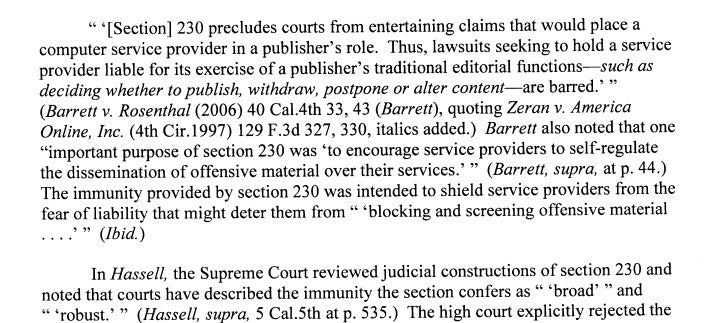

Section 230 clearly protects service providers when they delete whatever content they disagree with. For example, courts have found that suing a website for deleting your account or posts attempts to treat them as a publisher, which the text of the act explicitly bars. From an appellate court’s ruling throwing out a suit brought by white supremacist Jared Taylor, who sued Twitter for banning him:

The OFVDA attempts to narrow Section 230 by replacing the section in which a website is shielded if it removes content it “considers to be obscene, lewd, lascivious, filthy, excessively violent, harassing, or otherwise objectionable.” Note that “considers to be” is inherently subjective; the website operator needs to merely believe the content is objectionable. The new language (emphasis ours) would read that a website is protected when it removes content it “has an objectively reasonable belief is obscene, lewd, lascivious, filthy, excessively violent, harassing, promoting self-harm, promoting terrorism, or unlawful.”

In another sweeping change, the OFVDA declares that websites that “[editorialize] or affirmatively and substantively [modify] the content of another person or entity” void their status as an “information content provider” and thus cannot claim Section 230 protections in any resulting suit.

In a takedown of the bill on his website, Goldman noted that the removal of the “otherwise objectionable” language would discourage websites from fighting “lawful but awful” content, such as anti-Semitism, doxxing, deadnaming trans people, junk science, and conspiracy theories. That’s not because websites would lose their legal right to do so, but because they’d no longer have a fast track to have suits over types of content not specifically listed in the new language thrown out of court. This would make every removal decision a “vexing calculus about whether Section 230(c)(2)(A) would apply and how much it would cost to defend the inevitable lawsuits,” according to Goldman.

The ‘editorializing’ section is just as bad and could refer to anything from slapping fact-check labels on bogus stories to the design of the algorithms that make sites work.

“Whatever ‘editorializing’ means, it creates a new litigable element of the Section 230 defense,” Goldman wrote in the blog post. “If a defendant claims Section 230(c)(1), the plaintiff will respond that the service ‘editorialized.’ This also increases defense costs, even if the defense still wins.”

“I can’t speak to the motivation of the drafters or why they are choosing to prioritize their time this way,” Goldman said in an email. “There have been dozens of lawsuits against Internet services over account terminations / suspensions or content removals. With Section 230 in place, these lawsuits usually end quickly.”

“With the proposed changes to Section 230, there will be vastly more cases (because plaintiffs will incorrectly assume they have a better chance of winning) and those lawsuits each will cost more to defend,” Goldman added. “Yet, in many of those cases, the plaintiffs are obvious trolls engaging in anti-social behavior, and we should encourage, not discourage, their removal. Section 230 currently provides that encouragement.”

The “objectively reasonable” standard ties websites’ hands far more tightly than the subjective “considers to be” language. It would also complicate their efforts to show they were acting in “good faith,” which is already expensive to litigate.

The “good faith” requirement is another flashpoint for conservative anti-Section 230 crusaders, who claim that the mythical discrimination against right-wingers is actually in bad faith. In fact, websites could choose to indiscriminately ban every user to the right of the Bolsheviks without compromising their ability to claim they are acting in good faith. Instead, the “good faith” requirement is intended to prevent situations like a moderator selectively deleting words from a user’s sentence to reverse its meaning.

For example, if Facebook intentionally and maliciously modified your comment saying, “I hate discrimination against Oompa-Loompas,” to say, “I hate Oompa-Loompas,” you might have grounds to sue on the basis that Facebook acted in bad faith to put anti-Wonkitic words in your mouth.

Goldman wrote in his blog post that in any case, the legislation may be found unconstitutional, as the removal of the “otherwise objectionable” catch-all privileges certain types of speech over others.

“In particular, the revised Section 230(c)(2)(A) would condition a government-provided privilege on the removal of only certain types of content and not others, and it’s arbitrary which content is privileged,” Goldman concluded. “(For more, see this). This raises the possibility of strict scrutiny for the amendments.”

Here’s the rest of the FAQ, with what the authors of OFVDA are claiming and what we think they actually meant:

Will this bill protect against election interference campaigns?

Foreign interference in elections is unlawful. This bill won’t prevent Big Tech companies from removing content posted by these bad actors.

Translation: We’re just throwing in this completely irrelevant point to make us look more reasonable.

Why not repeal and start over?

The tech industry relies on Section 230’s liability shield to protect against frivolous litigation. If we repeal the law, we risk increasing censorship online, and encouraging the creation of a government body ill-equipped to act as judge and jury over speech and moderation. Repealing Section 230 in its entirety could also be detrimental to small businesses and competition.

Translation: We’re very concerned about frivolous litigation, except frivolous litigation brought by people who attend anti-lockdown protests in their free time.

Why not create a new cause of action?

Creating a new tort will only help enrich trial lawyers.

Translation: We specifically only want to enrich lawyers representing @magamom1488.

Why didn’t you cover medical misinformation?

We believe that platforms will be able to remove this content under the “self-harm” language in the bill.

Translation: Hey, pal, are you trying to say hydroxychloroquine doesn’t work or something?

Why can’t we use the courts to course-correct?

If we left this to the courts, they’d be litigating content moderation disputes all day, every day. This bill creates a clear framework; it’s important for companies to own their moderation practices, and follow them.

· More broadly, history doesn’t support a court-led strategy. The courts have so broadly interpreted the scope of 230 that tech companies are now incentivized to over-curate their platforms.

Translation: We don’t believe the courts should be “litigating content moderation disputes all day, every day,” which is why we are proposing revisions to Section 230 intended to make it easier for aggrieved conservatives (and through the law of unintended consequences, anyone else) to launch lawsuits against any website that pisses them off. By “history doesn’t support a court-led strategy,” we mean that judges have historically tried to restrain themselves from bursting into laughter during content moderation cases.

What is your position on fact checking?

· We will always find better solutions from the free market concerning fact checking.

· This bill provides a starting point for discussion on objectivity by updating the statutory language to include a new “objectively reasonable” standard.

Translation: By “free market concerning fact checking,” we mean that it is fundamentally impossible to arbitrate the truth and all people should feel free to choose their own beliefs—except when a platform does it in a manner that is politically inconvenient for us. We may be under the impression the new “objectively reasonable” standard has something to do with the lying liberal mainstream media.

Will this require companies to create more warning labels?

· Putting a warning label on a tweet could constitute “editorializing,” which would in turn open platforms up to potential legal liability. The idea is to make companies think twice before engaging in view correction.

Translation: Actually, we want to make it so that if the president tweets that the only scientifically proven preventative measure that can be taken against the novel coronavirus is letting the love of Jesus Christ into your heart, Twitter somehow is the one who gets sued.

Will this allow hate speech/racism/misogyny to “flourish” online, as some congressional Democrats claim?

· No, but we invite opponents of the bill to discuss their views in the Senate Commerce and Judiciary Committees all the same.

Translation: Go fuck yourself.

Is this legislative push motivated by the President’s social media presence or the 2020 election?

· No. The Commerce Committee has spent the past several years working on Section 230 reform. Repeated instances of censorship targeting conservative voices have only made it more apparent that change is needed.

Translation: Haha of course not why would you bring that up? Also, yes.