If you’ve ever heard the term “vintage LLM”, you might have found yourself wondering if the AI-pocalypse has really been going on for long enough that early chatbots are worthy of nostalgia. Happily, though, that’s not what the term means; instead, it applies to an LLM that seeks to emulate the perspective of a certain point in the past.

The idea is that you restrict the training data provided to the model to material published before a given date. In the case of Talkie, aka 13B 1930 LM, the cutoff is, as the name suggests, the year 1930. This choice of year might seem arbitrary, but it’s not: as we discussed here back in January, many forms of copyright expire on January 1 of the year that comes 95 years after the copyrighted material was released. This means that a whole lot of material released in 1930 went into the public domain at the start of this year.

Choosing 1930 as a cut-off date thus allows Talkie to sidestep the question of how to navigate copyright, an issue that has been blithely ignored by a problem for other LLMs. Which, OK, this is all interesting, but what is it for?

In answering that question, Talkie’s creators lean heavily on two sources: a talk given by AI researcher Owain Evans, from which the term “vintage LLM” originated, and a paper on “temporal language models” originating from a company called Calcifer Computing, whose business apparently involves providing “non-recurring engineering services for clients with problems interesting enough to warrant our attention.”

Evans’ talk couches its ideas in the sort of hyperbole that seems compulsory for AI proponents: “The first humanistic motivation [for vintage LLMs] is time travel,” he explains modestly. “What would it be like to communicate with someone from 1700?” To answer this question, he proposes the idea of models trained on data that cuts off at a certain time—exactly the sort of thing Talkie is doing, in other words.

The Calcifer Computing paper is less violet-hued, discussing the challenge of how LLMs can account for the way that aspects of language—words’ meanings, speech patterns, vocabulary—change over time. This is genuinely interesting, and apparently inspired one of the first uses for Talkie, which was to provide a subjective rating of the “surprisingness” of various post-1930 events.

The really interesting question, though, is how reliably an LLM trained on data that cuts off at a certain date can predict what will happen after that date. This feels like a scaled-down sociological analogue of the more fundamental question of determinism, which asks whether knowing everything about a system’s initial state allows you to predict that system’s future states.

Of course, you can feed an LLM information until the cows come home; it will never and could never know everything possible about the state of the world in 1930, or 69 BC, or 5:30pm yesterday. But still, the idea of giving an LLM a solid grounding in history along with a large amount of information about the state of the world in 1930, and then asking, “What happens next?”… even for someone generally skeptical of LLMs (like, y’know, me), that’s an interesting question. Being AI people, Talkie’s creators aren’t satisfied with predicting the future; they also allude to a question posed by Google DeepMind CEO Demis Hassabis, who once asked whether an LLM trained with data that cuts off at 1911 could discover general relativity.

Sadly, it doesn’t appear that there’s an answer to either question yet. The rest of the paper is devoted mostly to explaining the various challenges of getting Talkie to work reliably, foremost among them the lack of reliable training data. Talkie is trained on data scanned from physical sources, making reliable character recognition facilities extremely important. There’s also the challenge of what the authors call “contamination,” i.e., the leakage of post-1930s material into the training data.

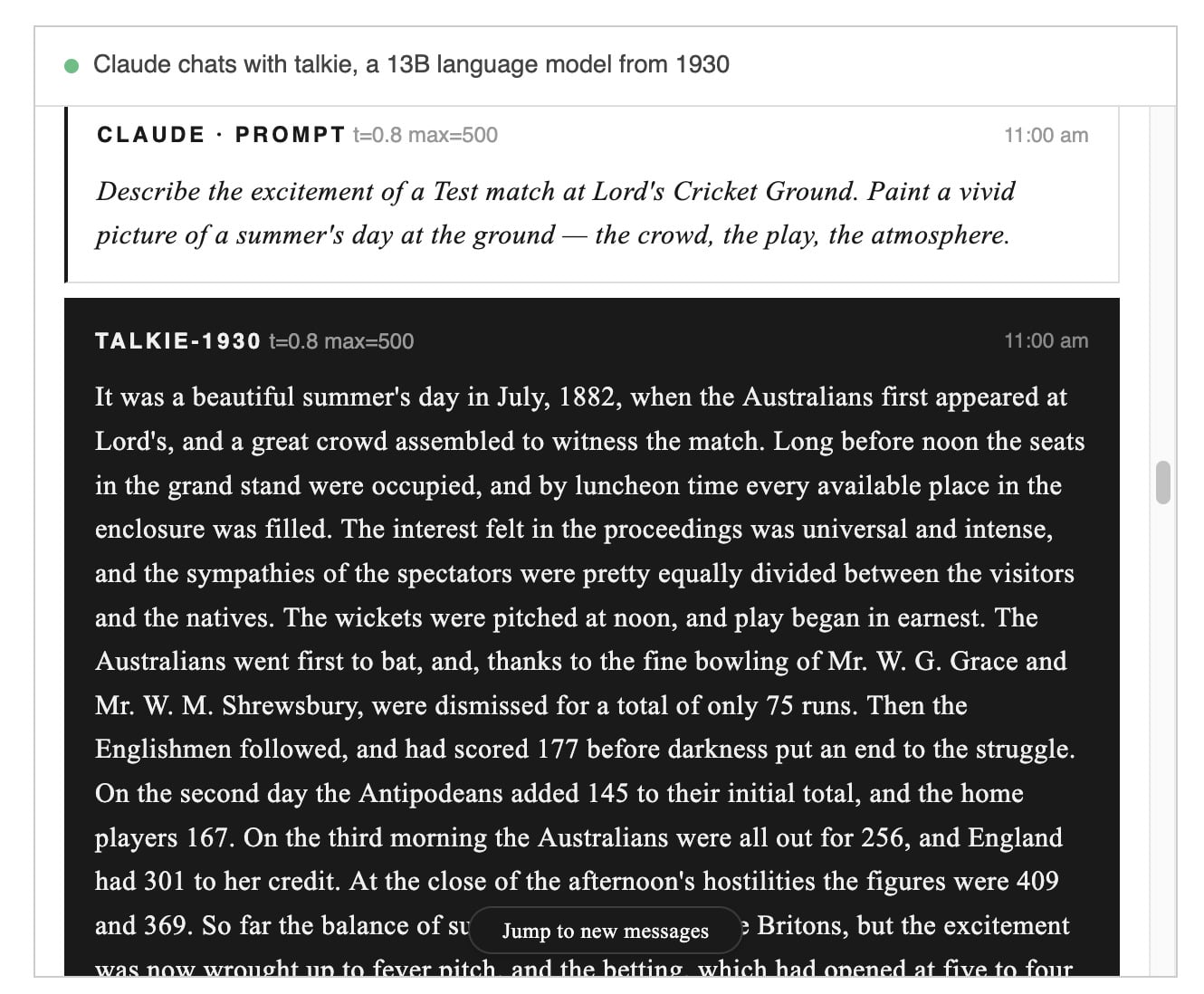

At this point, Talkie falls into the “potentially interesting and apparently harmless” category of LLM/AI agent projects, which, honestly, these days feels like about the best we can hope for. The project’s site contains a live feed of the LLM answering questions posed by … another LLM. As this post was being written, Talkie was describing an 1882 cricket match, and if you’ll bear with me here:

This all seems very evocative, but unfortunately, I am a cricket nerd, and I can assure you that the match Talkie is describing never happened. The only Test played between Australia and England in 1882 was at the Oval in August that year, and it was perhaps the most famous ever played—the match was such a disastrous defeat for England that one London paper penned an obituary for English cricket, giving birth to The Ashes, a biennial series between the two countries that continues to this day.

It seems a curious choice for Talkie to describe a fictional match, and to place it specifically in the year of such a famous real match. It seems like a good guess that the training data is particularly well-stocked for descriptions of Test matches in that year, so out of interest, I asked Talkie to describe the actual Ashes test of 1882. Sadly, the result was even less accurate, featuring incorrect scores and at least one completely made-up player. Still, the descriptions it produces are certainly colorful and believable, so that’s … something?

Anyway, if Talkie does manage to keep its feet planted in reality and predict World War II, we look forward to letting you know about its verdicts on famous events like the siege of Leningrad, the D-Day landings at Brittany, and, of course, the surprise Japanese attack on Diego Garcia. Can it capture the unique timbre of the speeches of UK Prime Minister Winfield Cromwell or the terrifying machine-gun delivery of German dictator Rudolf Scheiße? That remains to be seen.