The faceputer ads say virtual reality is coming and it’s gonna work this time. But here’s some real talk: There are still many ways virtual reality cannot fool the human brain. And it has little to do with the tech itself. Instead, it’s about neuroscience and our brain’s perceptual limits.

True, the past year has brought a great flourishing of virtual reality systems that are miles better than the clunky, nauseating devices of the ’90s. The HTC Vive and Sony’s Project Morpheus were just unveiled. Oculus has been chugging along since its $2 billion acquisition by Facebook last year. Magic Leap is doing whatever the hell it’s doing.

This new set of devices is good enough to feel stomach-droppingly real—even though the images are still pixelated and lag a tiny bit. People in VR call this overwhelming feeling “presence.” But it’s possible to fool one part of the brain without fooling another.

When journalists write about being wowed by the latest fancy VR device, they mean the emotional gut punch of, say, looking down a castle wall at an invading army. They don’t mean that VR is indistinguishable from reality. As Jason Jerald, a technology consultant for VR companies, puts it, “We can get very engaged in cartoon-like worlds.” Images don’t have to look perfect for presence.

But these imperfections become obvious if you spend more than the typical few minutes of a press junket inside VR. Or try to walk and turn. There are many reasons, both conscious and unconscious, that your brain rejects the reality of a screen mounted a few inches in front of your eyeballs.

Latency and the Age-Old Problem of Motion Sickness

Call it motion sickness or “simulator sickness” or “cybersickness,” but the nausea is real and has long bedeviled virtual reality. The main reason is latency, or the tiny but perceptible delay between when you move your head in VR and when the image in front of your eyes changes—creating a mismatch between the motion we feel (with our inner ears) and the image we see (with our eyes).

In real life, the delay is essentially zero. “Our sensory system and motor systems are very tightly coupled,” says Beau Cronin, who earned his PhD in computational neuroscience at MIT and is writing a book on the neuroscience of VR.

In virtual reality, however, the latency can get as low as 20 milliseconds, though it can go up quite a bit depending on the exact application. It will never be zero because it will always take time for a computer to register your movements and draw the new image.
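A back-of-the-envelope calculation shows why even 20 milliseconds can matter. This is a minimal sketch, assuming the head turns at a constant speed during the delay; the head speeds below are illustrative assumptions, not figures from the researchers quoted here. Under that assumption, the scene trails your true head direction by speed × latency.

```python
def angular_lag_deg(head_speed_deg_per_s: float, latency_ms: float) -> float:
    """Degrees by which the displayed scene trails the true head orientation.

    Assumes (for illustration) a constant head rotation speed over the
    whole latency window.
    """
    return head_speed_deg_per_s * latency_ms / 1000.0


if __name__ == "__main__":
    # Hypothetical head speeds; 20 ms is the best-case latency from the text.
    for speed in (50.0, 100.0, 200.0):
        lag = angular_lag_deg(speed, 20.0)
        print(f"{speed:5.0f} deg/s at 20 ms -> scene lags by {lag:.1f} deg")
```

At a brisk 100 degrees per second, a 20-millisecond delay leaves the virtual world about 2 degrees behind where your inner ear says your head is pointing.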

So how low does latency need to be before you don’t notice it? Jerald, who did his doctoral research on the perceptual limits of latency, found that the answer varies wildly: His most sensitive subjects could notice lags of 3.2 milliseconds, while the least sensitive didn’t notice until lags reached hundreds of milliseconds. Sensitivity to simulator sickness differs greatly from person to person, too. It may never be possible to design devices that make no one motion sick, but it is likely possible to design certain applications that don’t make most people sick.

My Eyes! The Vergence-Accommodation Conflict

A weird thing happens in VR: You can look at the far-off horizon of a virtual beach but still feel like you’re in a room. This could be partly the result of subtle feedback from the muscles surrounding your eyes. At its worst, it can cause painful eyestrain and headaches.

Here’s what happens. Hold a finger in front of your face and gradually move it toward your nose; your eyes will naturally turn inward to track it. This is vergence: our eyes converge to look at close objects and diverge to look at distant ones. At the same time, the lenses in your eyes change focus so the image of your finger stays sharp while the background blurs. This is called accommodation.

In VR, however, vergence and accommodation no longer work together seamlessly. The screen of a typical head-mounted display sits three inches or so in front of your eyes, and a set of lenses bends the light so the image on the screen appears to be about one to three meters away. Your eyes still have to focus at that fixed distance even when they converge on a virtual object that is supposed to be much closer or farther away, so if they try to focus where they are pointing, that object can look blurry. And the entire image is always in focus, no matter where your eyes are looking, instead of the background blurring as it would in real life. This can make spending an extended period of time in VR pretty uncomfortable.
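The conflict can be put in rough numbers. This is a minimal sketch, assuming a 63-millimeter distance between the pupils and a headset whose lenses place the screen image 2 meters away (inside the one-to-three-meter range above); both values are illustrative assumptions, not specs of any particular headset. The convergence angle follows from simple trigonometry, and the focusing mismatch is expressed in diopters, the reciprocal of distance in meters, which is how optometrists measure focusing demand.

```python
import math

IPD_M = 0.063  # assumed interpupillary distance (~63 mm), illustrative only
FOCAL_DISTANCE_M = 2.0  # assumed optical distance of the headset's screen image


def vergence_angle_deg(distance_m: float, ipd_m: float = IPD_M) -> float:
    """Angle between the two eyes' lines of sight when fixating at distance_m."""
    return math.degrees(2.0 * math.atan((ipd_m / 2.0) / distance_m))


def conflict_diopters(virtual_distance_m: float,
                      focal_distance_m: float = FOCAL_DISTANCE_M) -> float:
    """Gap between where the eyes converge and where they must focus, in 1/m."""
    return abs(1.0 / virtual_distance_m - 1.0 / focal_distance_m)


if __name__ == "__main__":
    # Virtual objects at various depths; the focal distance never changes.
    for d in (0.3, 1.0, 2.0, 10.0):
        print(f"object at {d:4.1f} m: vergence {vergence_angle_deg(d):5.2f} deg, "
              f"focus fixed at {FOCAL_DISTANCE_M} m, "
              f"conflict {conflict_diopters(d):.2f} D")
```

Under these assumptions, an object rendered 30 centimeters from your face makes your eyes converge sharply while they still have to focus at 2 meters, a gap of roughly 2.8 diopters; an object placed at the focal distance produces no conflict at all.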

There are high-flying ideas about how to get around the problem, and the name on everyone’s lips is Magic Leap. The company hasn’t publicly revealed much, though its patents show an interest in light field technology, where a screen of pixels is replaced by an array of tiny mirrors that reflect light directly into the eyes. Objects rendered this way are supposed to have true depth, coming in and out of focus as real objects do.


The Catch-22 of a Wide Field of View

To be truly immersive, virtual reality must show you not only what’s in front of your eyes but also what’s to the side of them. The problem? “The larger the field of view, the more sensitive you are to motion,” says Frank Steinicke, a professor at the University of Hamburg who spent 24 hours inside an Oculus Rift as an experiment.

Ever notice something flit by at the edge of your vision? You catch it because your peripheral vision is especially sensitive to movement. Capturing movement in the periphery is key to an immersive experience, which means capturing it accurately is key to an immersive experience that isn’t nauseating. Peripheral vision also goes through its own pathways in your brain, separate from those used by your central vision, and it appears to be closely integrated with your sense of spatial orientation.

Because peripheral and central vision work so differently, a wide field of view, which incorporates both, has to solve two sets of problems. Flicker that goes unnoticed right in front of your eyes becomes distracting in your peripheral vision.

Navigating Virtual Space, or Motion Sickness Rears Its Ugly Head Again

Even in a world with perfect motion tracking and zero latency, we would still have motion sickness, which adds another hurdle to creating a realistic experience in virtual spaces.

This goes back to the mismatch between the images we see and the movements we feel. If you’re controlling a character with a joystick in an immersive virtual environment, there’s always going to be a mismatch. The only way around it is perfect 1:1 motion in the real and virtual world, which means physically walking a mile if your character is walking a mile. This becomes pretty darn impractical if you want to play games in your living room.

One solution is simply game design, which is the exact topic of a 53-page Oculus Best Practices Guide. For example, when people are put in a virtual cockpit, they can sit still and drive or fly around with less motion sickness—kind of like driving a car in the real world. But this pretty obviously negates the fun of a truly interactive VR experience.

There’s also the possibility of using omnidirectional treadmills. An even more intriguing idea is redirected walking, which exploits the fact that our sense of direction is not perfect. For example, people trying to walk in a straight line in the desert tend to end up walking in circles. Studies at USC and the Max Planck Institute, among others, have found that people can be subtly nudged into thinking they are walking around a bigger space than they actually are.
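One way researchers describe this kind of redirection is a “curvature gain”: as you walk toward something that looks straight ahead, the software rotates the virtual world by a tiny amount with each step, and you unconsciously correct by curving your real path. This is a minimal sketch, assuming a constant curvature and a hypothetical 20-meter radius (not a detection threshold reported by the studies above); it computes where you really are after walking a given distance along what feels like a straight line.

```python
import math


def real_position_on_arc(distance_walked_m: float,
                         radius_m: float) -> tuple[float, float]:
    """Real-world (x, y) after walking distance_walked_m on a circle of radius_m.

    Models curvature-gain redirection: the user believes they walk straight
    ahead (along +x from the origin), but the scene is rotated by
    distance / radius radians in total, so they actually follow a circular arc.
    """
    theta = distance_walked_m / radius_m  # accumulated heading change, radians
    return radius_m * math.sin(theta), radius_m * (1.0 - math.cos(theta))


if __name__ == "__main__":
    RADIUS_M = 20.0  # hypothetical redirection radius, for illustration only
    for s in (5.0, 20.0, 50.0, 2 * math.pi * RADIUS_M):
        x, y = real_position_on_arc(s, RADIUS_M)
        print(f"'straight' virtual walk of {s:6.1f} m -> "
              f"real position ({x:6.2f}, {y:6.2f}) m")
```

With these made-up numbers, walking the full circumference (about 126 meters of “straight” virtual path) brings you back to where you started, but the real path still spans 40 meters, the circle’s diameter, which is far bigger than a living room.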

VR as the Ultimate Neuroscience Experiment

Virtual reality companies, for their part, are well aware their technology is not exactly ready for primetime. Oculus has only released its PC hardware as “developer kits,” and the release date for a consumer version is still floating vaguely in the future. Other products are available now, like the Samsung Gear VR and Google Cardboard, but VR suffered from overly high expectations before, and its enthusiasts are wary of it happening again.

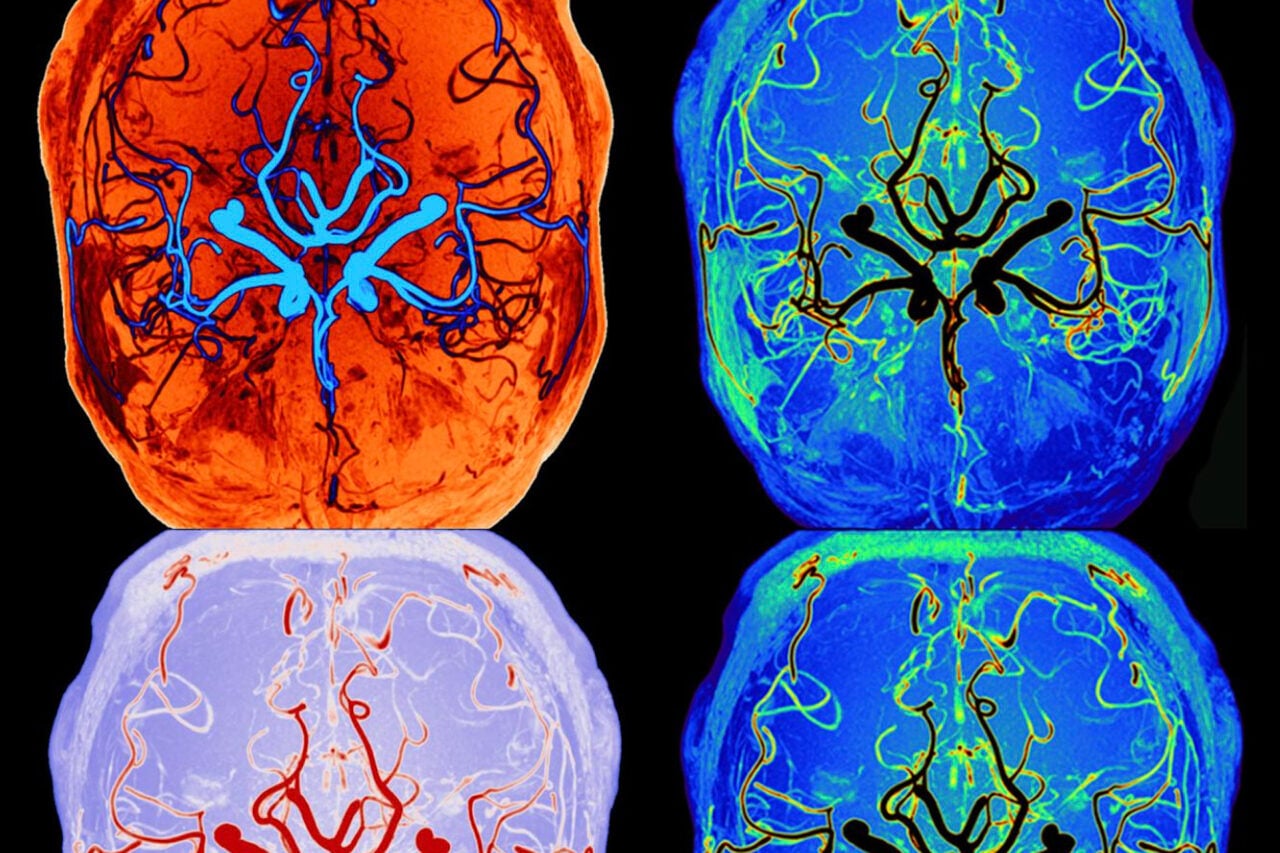

Admitting that there are unsolved neuroscience problems with VR doesn’t mean the technology is doomed to fail. Instead, it means something far more fascinating: Understanding why virtual reality fails to fool us could lead to a better understanding of the exquisite complexity of the human brain. Or, as Cronin put it, “The Oculus Best Practices guide may be the most substantial thing ever written on applied sensorimotor neuroscience.”

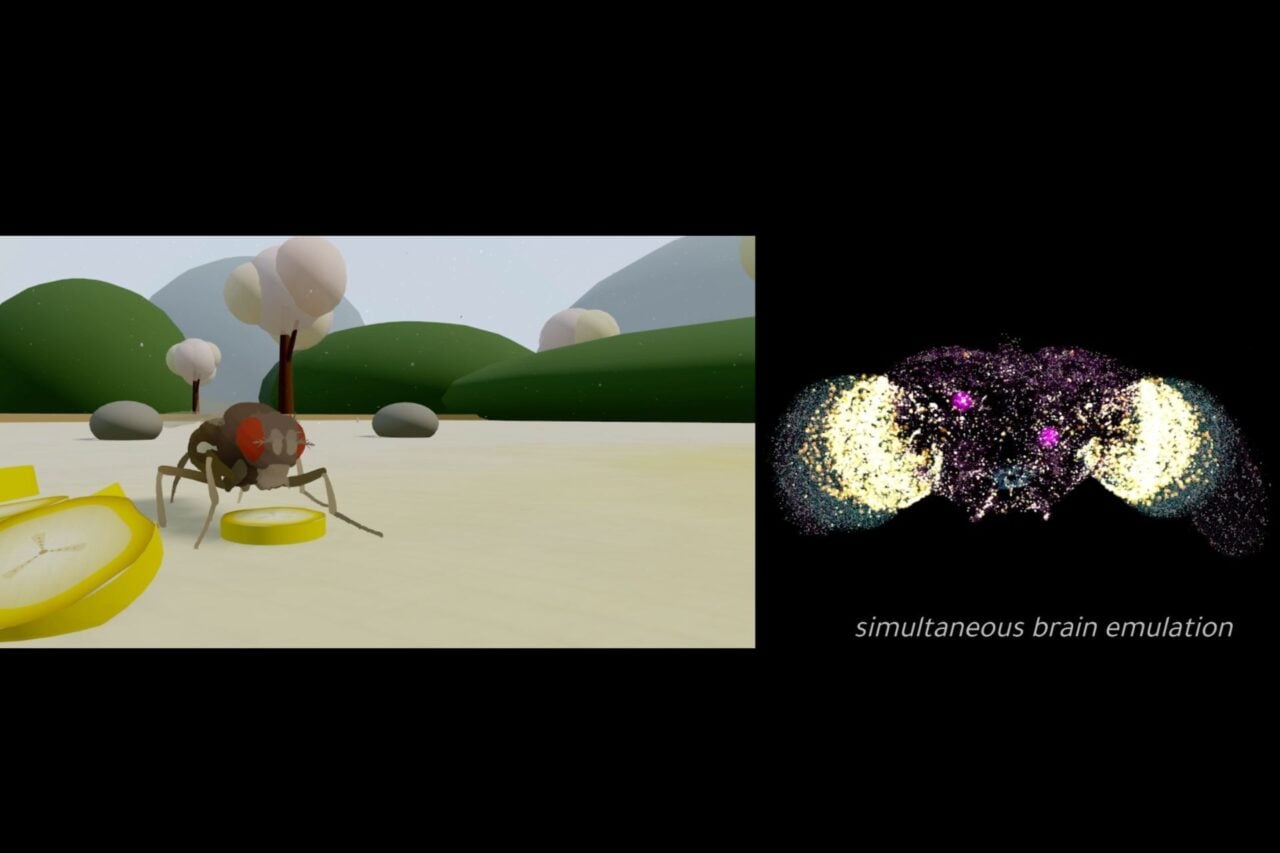

And further out on the horizon, ever more sophisticated VR technology could dramatically expand what we do in neuroscience experiments. For example, William Warren, a professor of cognitive science at Brown, has studied spatial navigation by putting people inside virtual environments with wormholes. Crude forms of virtual reality for mice, fruit flies, and zebrafish are already a common part of neuroscience research.

By confusing the brain deliberately, we learn how it works in ordinary situations. And we just might get some awesome games out of it, too.

Top image: igorrita/shutterstock
