It brings me no joy to say this, but Patricia Highsmith’s mercurial grifting antihero in The Talented Mr. Ripley would not have to be talented anymore. Advances in generative AI—the ability to create credible videos of anyone, indistinguishable voice clones, and other passable forgeries with ease—have taken all the artistry out of con artistry.

New research led by a team at Mount Sinai’s Icahn School of Medicine in New York has made a troubling case for constant vigilance against the threat of “deepfake” medical evidence.

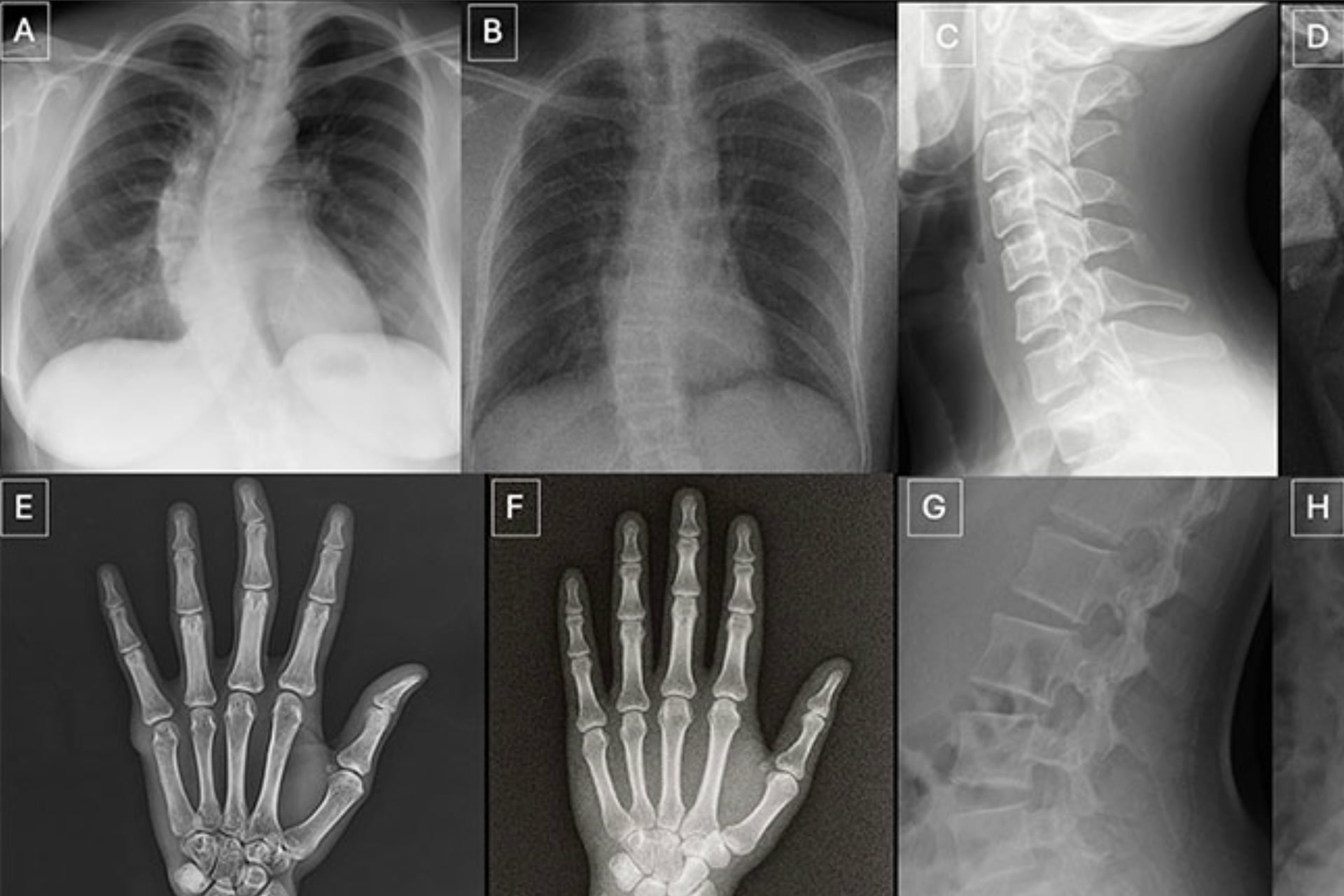

The researchers subjected a group of volunteers, 17 practicing radiologists from six countries, to tests that required them to distinguish real X-rays from AI-generated simulacra across a pool of 264 unique images. The results did not inspire confidence.

“Our study demonstrates that these deepfake X-rays are realistic enough to deceive radiologists, the most highly trained medical image specialists,” the study’s lead author Dr. Mickael Tordjman, an MD and a post-doctoral fellow at the Icahn School, said in a press statement, “even when they were aware that AI-generated images were present.”

In a later test, the AI fakes even fooled the very multimodal large language model that had been used to create some of them: OpenAI’s ChatGPT-4o.

The tremor of forgery

Tordjman pursued this project out of a genuine concern for the risks to patients, doctors, and countless other innocent bystanders. Believable AI-generated medical imagery, he said, “creates a high-stakes vulnerability for fraudulent litigation if, for example, a fabricated fracture could be indistinguishable from a real one.” This issue has already caught the attention of legal experts seeking to protect juries from becoming tainted by exposure to similar AI forgeries.

“There is also a significant cybersecurity risk,” Tordjman added, “if hackers were to gain access to a hospital’s network and inject synthetic images to manipulate patient diagnoses or cause widespread clinical chaos.”

The 17 volunteer radiologists that Tordjman’s team tested were shown two distinct datasets for this study, published Tuesday in the journal Radiology. The first asked volunteers to look at 154 static X-rays, half genuine radiographs and half ChatGPT-4o-generated forgeries (77 each). The second used images from RoentGen, a specialized diffusion model trained to generate believable chest radiographs in which organs like the heart and lungs are visible; volunteers were asked to sort through 110 images, 55 real and 55 fake.

Radiologists who were told that these datasets contained AI images fared better than those who viewed the images without any indication of the test’s actual purpose, though still not especially well. The informed radiologists showed a mean accuracy of 75%, compared with only 41% for the uninformed group.

The study’s 17 radiologists, whose professional experience ranged from zero to 40 years on the job, scored between 58% and 92% accuracy on the ChatGPT-generated images and between 62% and 78% on the RoentGen-made chest X-rays. Age and experience did not appear to affect their accuracy, but, for some reason, musculoskeletal radiologists proved to be significantly better at spotting fakes than other subspecialists.

A game for the living (and the chatbots)

Tordjman and his team also ran their tests on four multimodal LLMs: OpenAI’s ChatGPT-4o and ChatGPT-5, Google’s Gemini 2.5 Pro, and Meta’s Llama 4 Maverick. The bots did slightly worse than the humans, ranging from about 57% to 85% accuracy on the fakes made by ChatGPT-4o (a particularly embarrassing showing for ChatGPT-4o itself, in a way).

When it came to RoentGen’s synthetic chest X-rays, the LLMs’ accuracy at spotting fakes varied a little more widely, ranging from 52% to 89%.

Tordjman said he hopes future work will build on these findings to establish educational datasets and detection tools. “Deepfake medical images often look too perfect,” he noted. “Bones are overly smooth, spines unnaturally straight, lungs overly symmetrical, blood vessel patterns excessively uniform, and fractures appear unusually clean and consistent.”

You can take a version of the test yourself online. But don’t beat yourself up over a bad score. As someone who knew a lot about con artists and self-deception once put it, “Life is a long failure of understanding.”