Google announced its much-hyped AI-powered chatbot this week as part of a rapidly intensifying AI arms race between tech heavy-hitters. The tool is the company’s response to OpenAI’s ChatGPT and Microsoft’s swift maneuvering to incorporate that large language model into its Bing search engine. But there’s one big problem already: Google’s Bard is spinning tall tales.

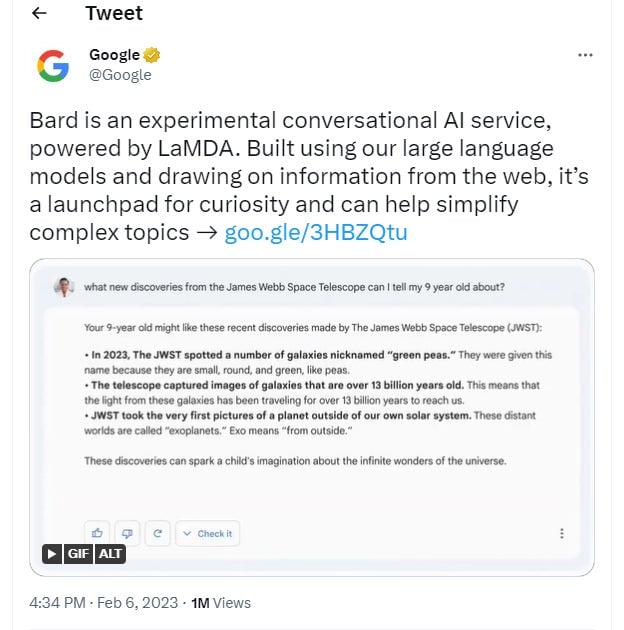

In its very first foray onto the public internet, the AI chatbot told a lie. Google released a blog post on Monday introducing Bard to the masses, though the tool itself is still only accessible to a small number of beta testers. And in that promotional post, the company included a GIF demonstrating Bard in action. The same recording was also posted to the company’s Twitter account.

“What new discoveries from the James Webb Space Telescope can I tell my 9 year old about?” the demo query asks. In response, Bard offers three suggestions: the imaging of so-called “green pea” galaxies, that the telescope has documented galaxies more than 13 billion years old, and that it took the very first pictures of exoplanets. Important to note: that last point is NOT TRUE!

The first-ever images of exoplanets, planets beyond our own solar system, were captured by the European Southern Observatory’s Very Large Telescope all the way back in 2004, according to NASA. And since then, the Hubble Space Telescope has also provided direct images of exoplanets.

The mistake, first pointed out in a Wednesday report from Reuters, is an astounding oversight from Google. It’s one thing for AI to offer false information; it’s a whole other thing for a company to feature such a flub in its advertising materials.

Google has repeatedly touted the idea of “responsible” AI development. Earlier today, the company was talking up its measured, cautious approach to artificial intelligence in a live event from Paris. Over the past few months, it’s become apparent that large language model-powered AIs have a problem with separating fact from fiction, but Google has tried to position itself as the truly informative alternative to the more free-wheeling ChatGPT.

Yet even though Google tested internally for accuracy and emphasized it in the lead-up to Bard’s reveal, beating Microsoft to the punch evidently took precedence. Even with its reputation on the line, the company spectacularly botched its rushed AI rollout.

When reached for comment, a Google spokesperson told us:

“This highlights the importance of a rigorous testing process, something that we’re kicking off this week with our Trusted Tester program. We’ll combine external feedback with our own internal testing to make sure Bard’s responses meet a high bar for quality, safety and groundedness in real-world information.”

On top of the factual mistake, the company was unable to complete a planned live demo at the Paris event on Wednesday morning because it was missing the phone that was necessary for the presentation. After the live event concluded, Google immediately removed the video from its YouTube page.

Google’s stock has lost about $100 billion in value this morning.

It just goes to show that, sometimes, even years of technological advancement don’t change all that much. As it stands now, AI can’t solve the perennial problem of people lying on the internet—and in fact, it’s liable to make things much, much worse. In the race to be the next big thing, or to continue being the only big thing in search, Google might want to aim for something a little slower and steadier.

Correction 2/8/2023, 3:07 P.M. ET: An earlier version of this story incorrectly defined the term exoplanet. A great reminder that both humans and AI are fallible.