When training a future robot overlord, you want it to learn to make complex decisions. No one likes an android whose only call is ‘shoot it.’ Neural networks allow problem solving, prioritizing, and hopefully mercy.

There’s a midnight showing of the next Twilight movie in a theater on the other side of town, and you have to catch it. Nothing matters more to you than seeing Edward and Bella get married, but luckily it’s being billed as an ironic screening, so you won’t lose face in front of your friends. If you ask a computer to map out the route to the theater, so you can figure out whether to take the bus, walk, or take a cab, it will do so quickly and accurately.

If you ask it to do that while also checking the weather, reminding you of the times someone has puked on the bus, calling up stories of cab drivers who murdered people and of street crime in the neighborhoods you’ll pass through, and calculating the probability that someone will see you in your Twilight t-shirt and not know that you’re supposedly wearing it ironically, it won’t be nearly as quick.

If you ask it to make the decision for you, you are going to get one of those annoying whitish screens that ding whenever you try to click on something.

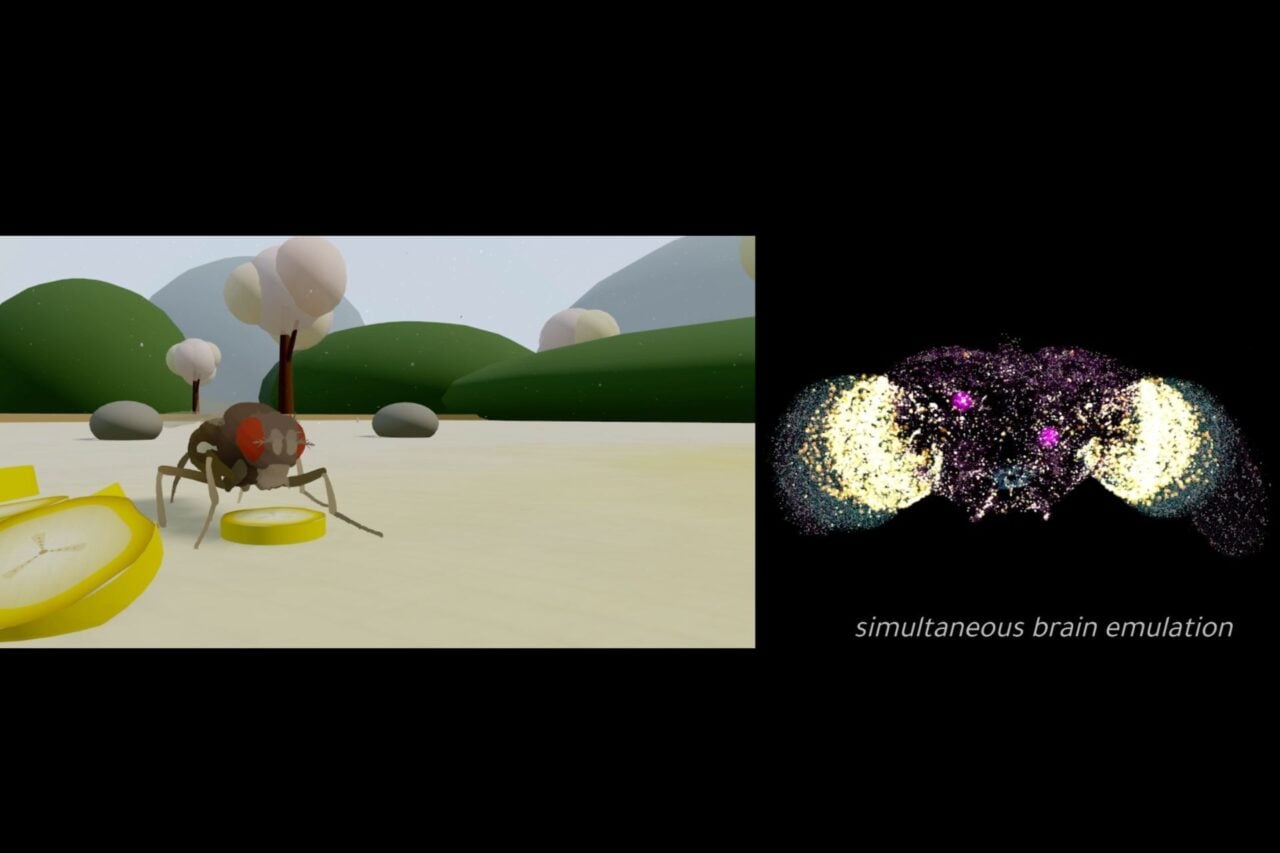

Artificial neural networks are built to solve these problems. They calculate and weight each risk and come up with a solution. They do so by loosely imitating the structure of a human brain.

A neuron is basically a collection of triggers waiting to fire. When something presses on its outer structures, the dendrites, they inform the cell body. If the disturbance is strong enough, the cell body shoots an electrical impulse down the axon to the synapse, the connection between neurons. This alerts the dendrites of other neurons, and they set off the next neuron in turn. The exact sequence of each dance of dendrites is stored up as information that lets people distinguish a free balloon from a monkey with a gun, and figure out what to do in each situation.

A basic computer cell mimics that neuron. Impulses go in, and if those impulses are strong enough in the right places, they continue onward. If X1 and X2 come in, the computer tells you to reach out your hand, because everyone loves balloons. If XN and X(N-1) fire, the computer lets you know that you shouldn’t have worn the banana-scented body lotion.
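To make the idea concrete, here is a minimal Python sketch of such an unweighted threshold unit: every active input counts the same, and the unit fires once enough of them arrive. The function names, the 0/1 encoding of the impulses, and the specific wiring to X1, X2, X(N-1) and XN are illustrative assumptions, not taken from any particular library.

```python
def basic_neuron(inputs, threshold):
    """Unweighted threshold unit: fires (returns 1) when enough of its
    inputs are active (1). Every input counts the same."""
    return 1 if sum(inputs) >= threshold else 0


def react(x):
    """x is a hypothetical list of 0/1 impulses X1..XN.

    One unit is wired to X1 and X2, another to X(N-1) and XN; each
    fires only when both of its inputs arrive.
    """
    reactions = []
    if basic_neuron([x[0], x[1]], threshold=2):
        reactions.append("reach out your hand")
    if basic_neuron([x[-2], x[-1]], threshold=2):
        reactions.append("regret the banana-scented lotion")
    return reactions
```

For example, `react([1, 1, 0, 0])` returns `['reach out your hand']`, while `react([1, 0, 0, 1])` returns an empty list because neither unit gets both of its inputs.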

But that’s just a basic simulated neuron. A more sophisticated one understands that not every impulse is equally important, so the impulses are weighted. The shade of the balloon matters to you, since you’re not fond of orange, so you’ll skip an orange one. The shade of the monkey, or of the gun, doesn’t matter to you in the least. With a weighted system, you shrink from both the orange balloon and the monkey.
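A weighted version only changes how the impulses are added up: each one is multiplied by a weight before the total is compared against a threshold. The sketch below assumes hypothetical feature names and made-up weights; the point is only that a zero weight (the monkey’s shade) has no effect, while a large one (orange) can tip the decision.

```python
def weighted_neuron(features, weights, threshold):
    """Fire when the weighted sum of the features reaches the threshold.
    Features with no weight (or a weight of 0) have no say in the decision."""
    total = sum(weights.get(name, 0.0) * value for name, value in features.items())
    return total >= threshold


# Hypothetical weights for an "avoid it" neuron; the numbers are made up.
AVOID_WEIGHTS = {
    "balloon": 0.2,       # a balloon on its own is harmless
    "orange": 0.9,        # you are not fond of orange
    "monkey": 0.3,
    "gun": 1.0,
    "monkey_shade": 0.0,  # irrelevant to you, so it carries no weight
}
AVOID_THRESHOLD = 1.0

orange_balloon = {"balloon": 1, "orange": 1}
plain_balloon = {"balloon": 1}
armed_monkey = {"monkey": 1, "gun": 1, "monkey_shade": 0.7}
```

With these numbers an orange balloon scores 1.1 and trips the “avoid it” neuron, a plain balloon scores 0.2 and doesn’t, and a monkey with a gun scores 1.3 whatever its shade.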

But what if the shade of the gun does matter? This is where feedback loops come in. What if this computer brain were about to tell you to get away from the monkey, but when it passed that conclusion along to some of its ‘neurons’, they came back with the knowledge that the monkey’s gun has an orange tip, and orange tips are on toy guns?

Feedback loops add another step to the process: the neural network takes part of its decision and sends it back to the kitchen. It’s not a monkey with a gun, it’s a monkey with a toy. Maybe it’s still time to get away, or maybe it’s time to stick around and see how this plays out.
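Real recurrent networks learn their feedback weights, but the loop itself can be shown with a hand-wired toy. In the hypothetical sketch below, a provisional “get away” decision is sent back to a tip-checking unit, and whatever that unit reports is fed into the decision unit again until the answer stops changing. All names, weights, and the round limit are assumptions chosen for illustration.

```python
def decision_unit(gun, toy_evidence):
    """Weighted 'get away' unit: a gun pushes toward fleeing, evidence
    that it is a toy pushes hard the other way. Returns 1 to flee."""
    return 1 if 1.0 * gun - 1.5 * toy_evidence >= 0.5 else 0


def tip_checker(alarm, orange_tip):
    """Only consulted once the decision unit raises the alarm: reports 1
    if the gun it was asked about has an orange tip."""
    return 1 if alarm and orange_tip else 0


def decide_with_feedback(gun, orange_tip, max_rounds=5):
    """Feed the provisional decision back through the network until it settles."""
    toy_evidence = 0
    decision = decision_unit(gun, toy_evidence)
    for _ in range(max_rounds):
        # Feedback: send the current decision to the tip checker, and keep
        # anything it has already found (it doesn't forget the orange tip).
        toy_evidence = max(toy_evidence, tip_checker(decision, orange_tip))
        revised = decision_unit(gun, toy_evidence)
        if revised == decision:
            break  # the loop has settled on an answer
        decision = revised
    return decision
```

`decide_with_feedback(gun=1, orange_tip=0)` stays at 1 (get away), while `decide_with_feedback(gun=1, orange_tip=1)` starts at 1 and settles at 0 once the orange tip is reported back. The tip checker’s finding is kept between rounds; without that, this toy would oscillate between the two answers.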

That depends on what exactly the artificial neural network is taught.

Two common kinds of networks are self-organizing networks and back-propagation networks. Self-organizing networks take in a lot of information, adjust their own weights to find the patterns in it, and get down to business. Back-propagation networks have to be taught. They are given problems to solve, again and again. If the people analyzing the results think a solution works, the decision that each neuron made, and the weight given to that decision, is reinforced. If they don’t care to converse with a monkey with a gun, even though the computer tells them it has been proven to make for a very funny story, the weight of funniness is decreased when there is a gun involved. In time, a back-propagation network is trained to reach reliable, correct conclusions, often on data sets that humans can’t process nearly as quickly.
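The teaching loop described above is what back-propagation automates. Below is a small, self-contained Python sketch of a network with one hidden layer, trained by back-propagation and gradient descent on a hypothetical four-example data set (gun visible, orange tip). In this sketch the people analyzing the data are replaced by a list of correct answers: after each example, every weight is nudged in proportion to its share of the error. The data, layer size, learning rate, and epoch count are all illustrative assumptions; real networks are usually built with a library and trained on far more data.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical training examples: [gun_visible, orange_tip] -> 1.0 means
# "get away", 0.0 means "stick around" (an orange tip marks a toy gun).
DATA = [
    ([0.0, 0.0], 0.0),
    ([0.0, 1.0], 0.0),
    ([1.0, 0.0], 1.0),
    ([1.0, 1.0], 0.0),
]


class TinyNet:
    """2 inputs -> a small hidden layer of sigmoid units -> 1 sigmoid output."""

    def __init__(self, n_inputs=2, n_hidden=3, seed=0):
        rng = random.Random(seed)
        self.w_hidden = [[rng.uniform(-1, 1) for _ in range(n_inputs)]
                         for _ in range(n_hidden)]
        self.b_hidden = [0.0] * n_hidden
        self.w_out = [rng.uniform(-1, 1) for _ in range(n_hidden)]
        self.b_out = 0.0

    def forward(self, x):
        hidden = [sigmoid(sum(w * xi for w, xi in zip(ws, x)) + b)
                  for ws, b in zip(self.w_hidden, self.b_hidden)]
        out = sigmoid(sum(w * h for w, h in zip(self.w_out, hidden)) + self.b_out)
        return hidden, out

    def train_step(self, x, target, lr):
        hidden, out = self.forward(x)
        # Error signal at the output (cross-entropy loss with a sigmoid output).
        delta_out = out - target
        # Back-propagate: each hidden unit gets blame in proportion to its
        # outgoing weight, scaled by the sigmoid's slope h * (1 - h).
        delta_hidden = [delta_out * w * h * (1.0 - h)
                        for w, h in zip(self.w_out, hidden)]
        # Gradient-descent update: move each weight a small step in the
        # direction that reduces the error on this example.
        for j, h in enumerate(hidden):
            self.w_out[j] -= lr * delta_out * h
        self.b_out -= lr * delta_out
        for j, dh in enumerate(delta_hidden):
            for i, xi in enumerate(x):
                self.w_hidden[j][i] -= lr * dh * xi
            self.b_hidden[j] -= lr * dh


def loss(net):
    """Mean cross-entropy over DATA (lower is better)."""
    total = 0.0
    for x, t in DATA:
        _, y = net.forward(x)
        y = min(max(y, 1e-12), 1.0 - 1e-12)  # avoid log(0)
        total -= t * math.log(y) + (1.0 - t) * math.log(1.0 - y)
    return total / len(DATA)


def train(net, epochs=5000, lr=0.5):
    """Show the network every example again and again, correcting it each time."""
    for _ in range(epochs):
        for x, t in DATA:
            net.train_step(x, t, lr)
    return net
```

After `net = TinyNet()` and `train(net)`, `loss(net)` should be lower than before training, and rounding `net.forward(x)[1]` should reproduce each target in `DATA`.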

They are based on the brain. They mimic the brain. They even come to have certain sets of values, like an individual human mind does.

But is this intelligence? Some people see the above description as a series of on-and-off switches, pressed in certain ways to achieve certain results. They’re complicated calculators, but they’re still just calculators.

Other people will look at the above and see neurons made of inorganic material. They will see that they are given priorities that mimic those of their creators, that they are educated through instruction or experience, and that they come to have an expertise the way humans do. Some see this as artificial intelligence. Some see it as intelligence, however limited. If it is intelligence, what does that make us? If it’s not, why? What’s so special about us that the mechanics of our brain can’t be duplicated?

Via Ic.Uk, and Computerworld.