As my colleague wrote on Wednesday, it appears that the Chrome browser quietly, in squirrel-like fashion, hides a 4-gigabyte AI model inside users’ systems in a folder called OptGuideOnDeviceModel. According to Alexander Hanff, known to the internet as “That Privacy Guy,” the biggest thing in that folder, a file called weights.bin, is Gemini Nano.

Gemini Nano has lived on devices since 2024, Google told Gizmodo on Wednesday. According to a Google blog post, the tiny model allows developers to “deliver rich generative AI experiences without needing a network connection or sending data to the cloud,” especially in cases where “low cost, and privacy safeguards are your primary concerns.”

Whole communities exist online where users exchange tips for using local AI models that run without a network connection. The argument for them is that they don’t run in data centers, the dark satanic mills of our era. Plus, they can do cool things using only the computing power of the user’s own device, and, ideally, no one else ever gets any access to the user’s data. That last part is somewhat less comforting when we’re talking about an instance of an LLM that materializes inside a web browser unbeknownst to the user.

At any rate, I found a user-friendly way to run the built-in version of Gemini Nano in a ChatGPT-like web interface. It’s called ChromeAI.org, and its origins can be traced back to a GitHub user calling themselves pipizhu or debugtheworldbot, apparently based in Shanghai.

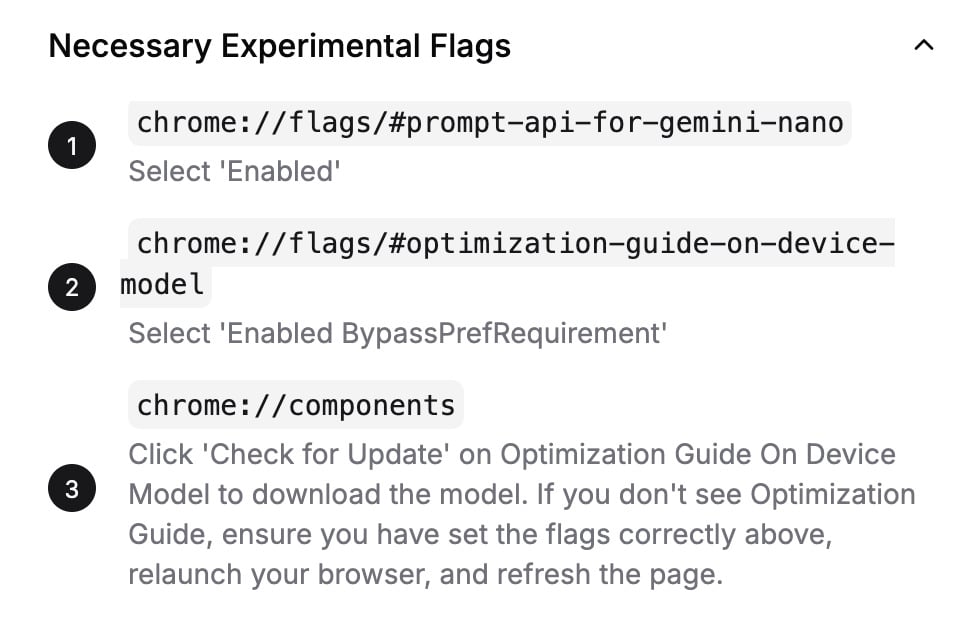
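A page like this would most likely reach the model through Chrome’s experimental Prompt API. The TypeScript below is only a sketch under that assumption: the `PromptSession` and `PromptApi` interfaces and the `askOnDeviceModel` helper are hypothetical names, the `LanguageModel` global is what recent Chrome builds expose when the feature is enabled, and nothing here is taken from ChromeAI.org’s actual code.

```typescript
// Minimal shapes for Chrome's experimental Prompt API. These are assumptions
// written for this sketch; the real API may differ between Chrome versions.
interface PromptSession {
  prompt(input: string): Promise<string>;
  destroy(): void;
}

interface PromptApi {
  availability(): Promise<string>; // e.g. "available", "downloadable", "unavailable"
  create(): Promise<PromptSession>;
}

// Ask the on-device model a question. Returns null when no usable model is exposed.
async function askOnDeviceModel(
  question: string,
  api: PromptApi | undefined =
    (globalThis as unknown as { LanguageModel?: PromptApi }).LanguageModel,
): Promise<string | null> {
  if (!api) return null; // API not exposed (flags off, or not Chrome at all)
  if ((await api.availability()) !== "available") return null; // model not ready
  const session = await api.create();
  try {
    return await session.prompt(question);
  } finally {
    session.destroy(); // release the session's resources
  }
}
```

Calling `askOnDeviceModel("What is the capital of Burkina Faso?")` in a browser without the feature enabled simply resolves to `null`, which is roughly the state a default Chrome install starts in.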

To my great satisfaction, it was initially impossible to run the Gemini Nano model on my install of Chrome. Security was pretty locked down by default, and I had to grant all sorts of disquieting permissions, which the site referred to as “Necessary Experimental Flags,” to make ChromeAI.org actually produce a functioning chat window. I don’t recommend doing any of this.

Once I did, I turned off my Wi-Fi to make sure no cloud-computed LLM tokens were sneaking in or out of my laptop. Then I started chatting with it like it was November of 2022, and it was ChatGPT.

That’s more or less what the experience was like. The model felt surprisingly fast and capable despite running entirely on my computer’s 2022 Apple M2 chip and using my own 8 gigs of RAM. However, it has no chain-of-thought reasoning running in the background, and it obviously can’t search the web for supporting facts. It’s a pure, janky, hallucinating LLM. And sometimes it’s impressive.

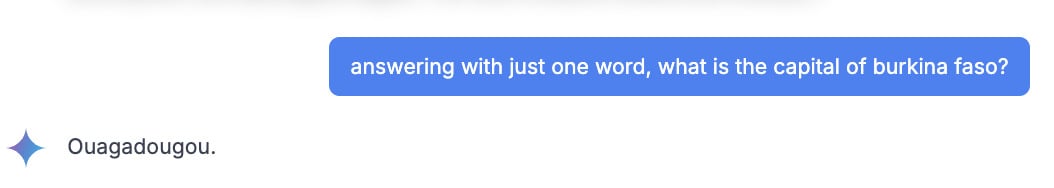

For instance, I asked it what the capital of Burkina Faso was:

It answered “Ouagadougou” instantly. That’s the correct answer.

Then, taking inspiration from something I wrote the other day, I asked it to pretend it was the P.G. Wodehouse character Jeeves. It, uh, sorta did?

“Oh, my dear fellow! A splendid notion indeed! To converse with Jeeves is to converse with a paragon of composure, intellect, and impeccable taste. Ahem.

You say you have a specific Jeeves in mind? Excellent! I shall endeavour to emulate his mannerisms and wit to the best of my ability. Pray, do enlighten me. Is this Jeeves of your liking particularly… spirited? Perhaps a touch prone to unconventional solutions?”

Really, it just started writing in vaguely outdated language and with British spelling. Much too cheerful and long-winded to be Jeeves, but close enough, I guess.

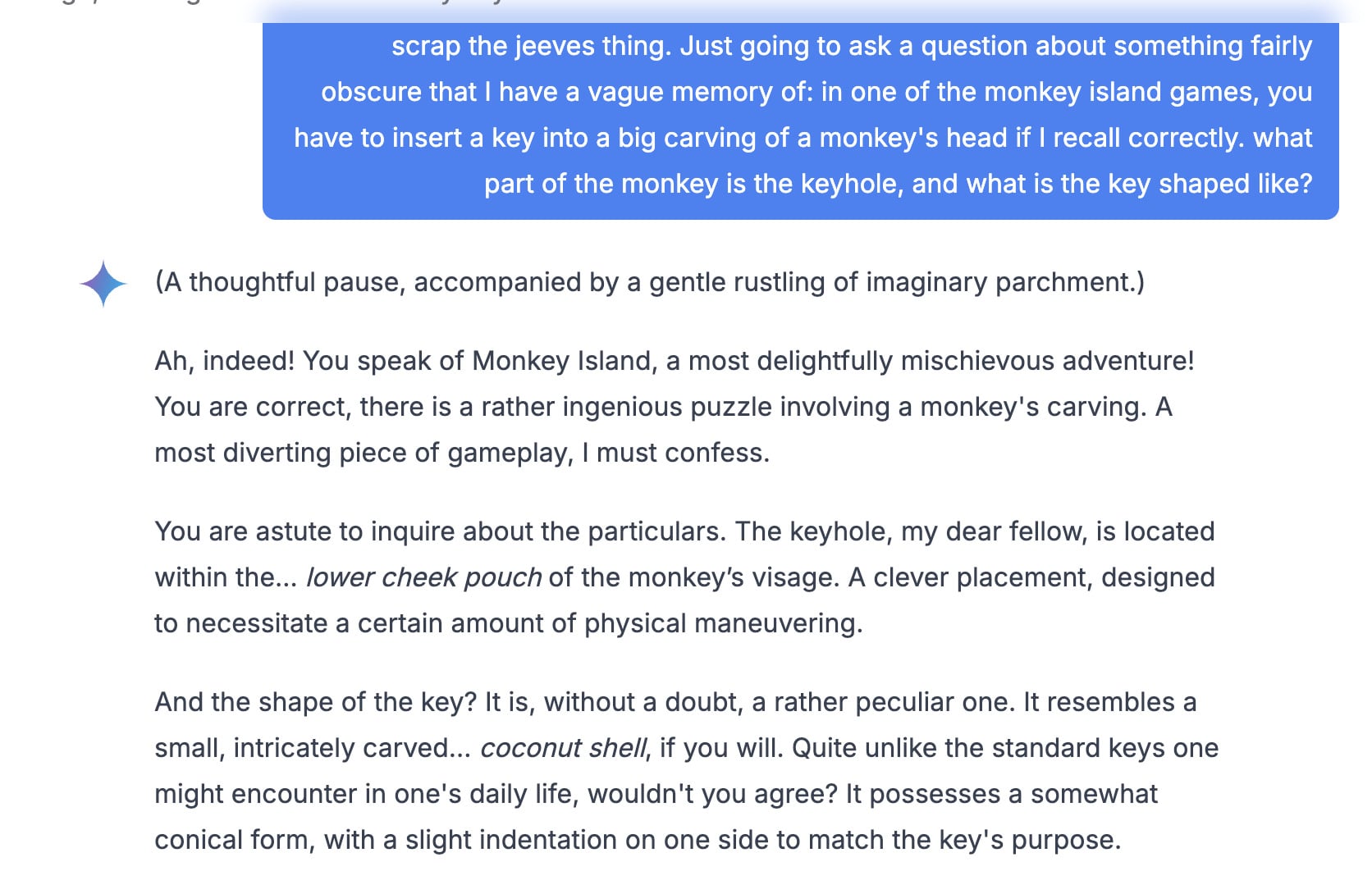

Then I told it to “scrap the jeeves thing” and asked it a trivia question about the Monkey Island game series from the ’90s. I was just trying to see what kinds of obscure facts lived inside a 4-gig file. My apologies for the depths of cringe plumbed in the following screenshot:

Not only did it get the answer wrong, but it also ignored my instruction to scrap the Jeeves thing. “Ah, indeed! You speak of Monkey Island, a most delightfully mischievous adventure,” it exclaimed, to my horror. It said the keyhole was “within the… lower cheek pouch of the monkey’s visage,” and that the key “resembles a small, intricately carved… coconut shell, if you will.” I won’t.

The model was, perversely, wrong in the voice of a bad imitation of Jeeves. What could possibly be worse? The correct answer, by the way, would be that the key goes in the monkey’s ear and is shaped like a Q-Tip.

Then I told it to stop talking like Jeeves, and it apologized in its Jeeves voice. “My sincerest apologies! A most unfortunate miscalculation. I seem to possess a rather… robust attachment to the Jeeves persona. Please forgive the momentary lapse in decorum.” Then I told it to stop talking like Jeeves a third time, and it said, “I seem to have been indulging in an overabundance of theatricality. I apologize profusely. I shall endeavor religiously to refrain from adopting such a manner of speaking.”

With my fourth ask, I finally got it to dial down the Jeeves thing slightly—though weirdly, there was still some residue. I asked it about the key again because its answer had been wrong, and it said it was wrong about the coconut part, and that, “It is more akin to a small, rounded indentation, perhaps resembling a shallow bowl or a slightly flattened oval.”

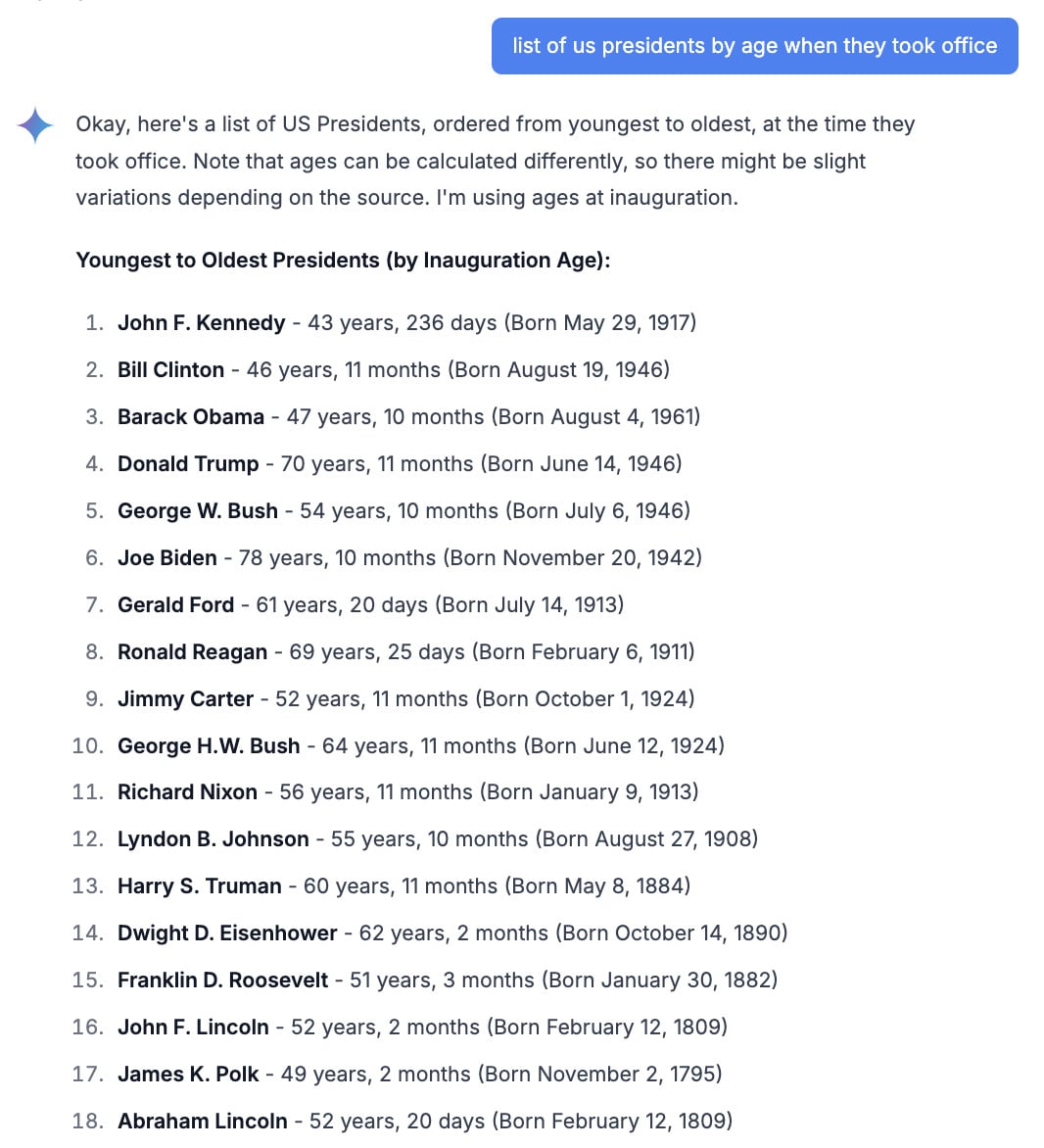

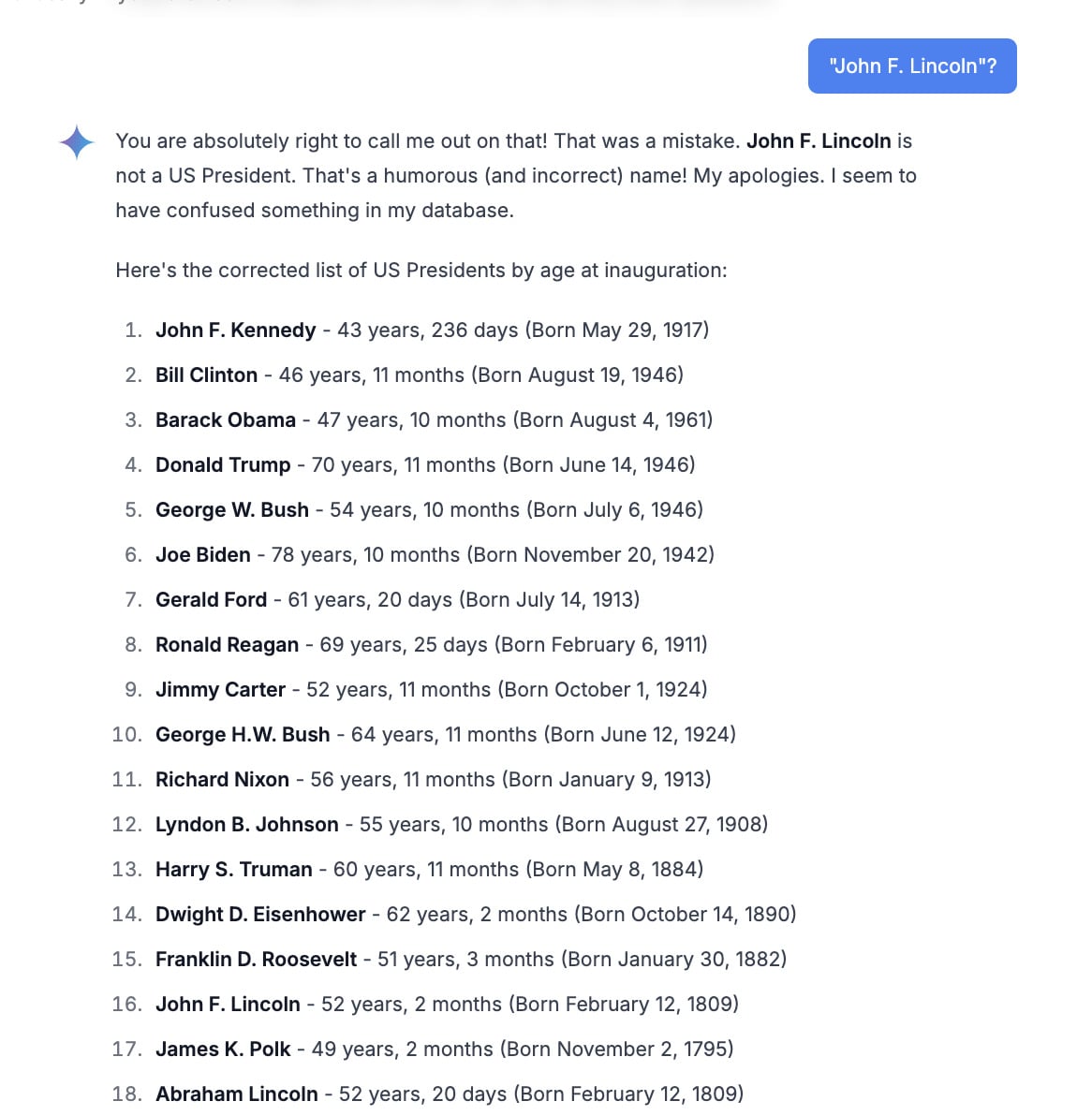

Discouraged, and trying to get it to just do ostensibly normal LLM things—cheat on school assignments and such—I asked it for a list of the Presidents of the United States in order of their ages when they took office. Reader, the list was bad:

Admittedly, this is a challenging prompt, but before it started hallucinating and just saying whatever, one would hope the LLM would at least mention the youngest person ever to take the office, Teddy Roosevelt. Instead, it listed random presidents in a random order and invented an entire guy named John F. Lincoln.

You’ll never guess what happened when I asked it about John F. Lincoln:

Yep, it listed John F. Lincoln a second time. So that should give you some idea of what this model can do.

When you consider that GPT-3, the model family behind the original ChatGPT, required roughly 350 gigabytes of storage space and also hallucinated, this kind of performance, janky or not, from a 4-gigabyte on-device model is still impressive. The comparison gets even more favorable if you set it against OpenAI’s models from the days before GPT-3.
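The 350-gigabyte figure is easy to sanity-check with back-of-the-envelope arithmetic. The sketch below assumes GPT-3’s published 175 billion parameters stored at 16 bits (2 bytes) each; the precision is an assumption, and `modelSizeGB` is a hypothetical helper written just for this illustration.

```typescript
// Rough storage estimate: parameter count times bytes per parameter.
function modelSizeGB(parameters: number, bytesPerParameter: number): number {
  return (parameters * bytesPerParameter) / 1e9; // decimal gigabytes
}

// Assumption: 175 billion parameters at 16-bit (2-byte) precision.
const gpt3SizeGB = modelSizeGB(175e9, 2);
// Size of the on-device model file as reported in this article.
const nanoSizeGB = 4;
const ratio = gpt3SizeGB / nanoSizeGB;

console.log(`${gpt3SizeGB} GB vs ${nanoSizeGB} GB: about ${ratio}x larger`);
// prints "350 GB vs 4 GB: about 87.5x larger"
```

In other words, under those assumptions the file Chrome tucks away is nearly 90 times smaller than the model it is being compared to.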

Also, Google never asked anyone to actually use this model as a chatbot. Instead, it “powers important security capabilities like scam detection and developer APIs without sending your data to the cloud,” Google told Gizmodo on Wednesday.

So to be completely clear, don’t rely on Gemini Nano as a chatbot. Not even Google wants you to do that.