For the record, planet Earth is still in the Holocene.

I mention this because earlier in the week, news made the rounds that scientists have declared an end to the geologic epoch of the past 12,000 years. According to numerous reports, the Anthropocene, a new epoch of man and machine, has been upon us since we started testing nuclear weapons in the 1950s.

If this week’s declarative headlines felt strangely familiar to you, it’s because they are. The arrival of the Anthropocene has become something of a planetary Groundhog Day that the media stirs up every six months or so. This time, it was sparked when an official group of experts presented a recommendation for the Anthropocene’s adoption to the International Geological Congress. In the past, scientific papers describing the likely existence of the Anthropocene have ignited similar news cycles.

Don’t get me wrong. The Anthropocene is an immensely useful concept, and the planetary changes behind it—mass extinction, the global proliferation of chickens and plastics, climate change, and nuclear weapons to name but a few—are very real and very significant. We could be in the Anthropocene right now. But officially, we are not.

“I think at the earliest, [the Anthropocene] is probably two to three years from formalization,” Jan Zalasiewicz, chair of the Anthropocene Working Group, told Gizmodo. “That is exceedingly optimistic.”

Defining a new geologic boundary often takes decades, a reality that speaks to the essential conservatism of the geologic community when it comes to tampering with the all-sacred scale of time. The Anthropocene is exceedingly troublesome because geologists are used to thinking about very big chunks of time: tens of thousands of years at the smallest. If planet Earth is only now drawing its first breath of this brave new chapter in history, how can we be sure it’s anything more than a hiccup? How can we know that the scars industrial society has left on the planet will stand the test of time?

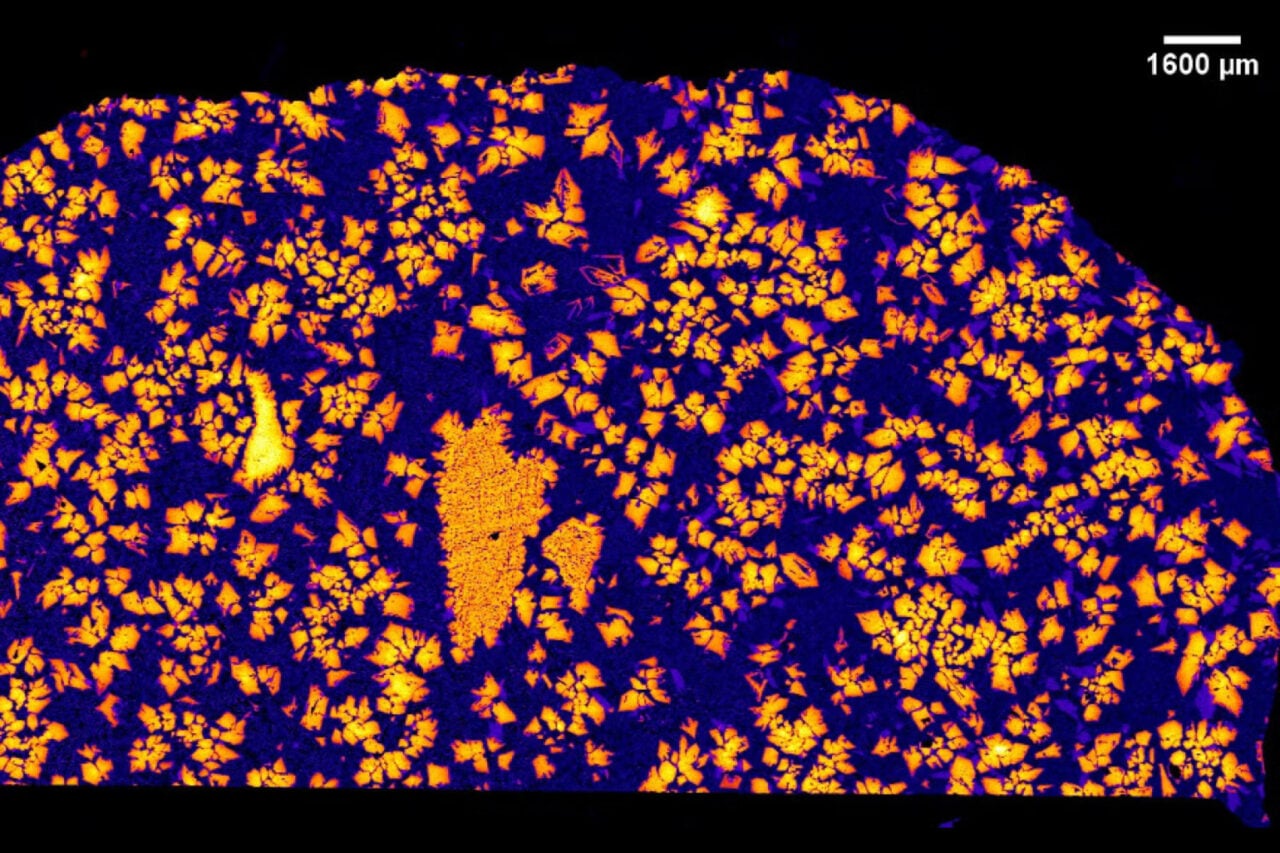

That is the essence of the Anthropocene debate: convincing scientists, who are used to seeing millions of years of time compressed into a few centimeters of rock, that a thin membrane of dust coating the surface of our planet is unique and permanent. There is mounting evidence that it is, which is why Zalasiewicz and his colleagues have now presented a formal recommendation for adoption of the Anthropocene term.

“The main outcome of our recent exchanges has been that the Anthropocene, as a planetary phenomenon, is real,” Zalasiewicz told Gizmodo. “Most people think it should be considered as a formal unit, and most of us think the best place to start is around the mid 20th century.”

That start date is less of an irrevocable turning point for the Anthropocene and more of a strategic hedge toward acceptance. It was at this time that humans started testing nuclear weapons regularly, producing a spike in the concentration of carbon-14 isotopes in our atmosphere. Each and every person born from the 1960s onward bears this telltale “bomb spike” in his or her bones. We’ll carry this legacy of weapons of mass destruction to our graves and, if we’re so lucky, into our fossilized remains. Since carbon-14 is normally quite scarce in the geologic record, this imprints the rocks and biota of the Anthropocene with a distinct signature.

From the perspective of a life form living on spaceship Earth, more salient hallmarks of the Anthropocene include a dramatic acceleration in the rate of species extinction, the proliferation of human garbage to every corner of the planet, and a sudden uptick in the level of climate-warming carbon dioxide in the air, at a rate unprecedented for the past 66 million years. Over the next few years, Zalasiewicz and his colleagues will try to determine which signals are strongest and sharpest by investigating sites around the world where geologic preservation occurs quickly.

“A lot of that rock might be sloppy, and some of it might still be quite smelly,” Zalasiewicz said, describing plans to sift through mud and lake sediments, sample snow and ice layers, and examine the recent growth of corals, which preserve a chemical signature of the ocean. “We start with a general sift process, then we begin to try to look for specific golden spike candidates,” he said. This refers to the representative site geologists use to define a new chapter in Earth’s history.

But at the end of the day, no matter how much evidence for the Anthropocene Zalasiewicz and his colleagues assemble, the International Geological Congress could still reject the term. “Even if we get a well-argued proposal together, there is no guarantee at all this will be accepted,” he said. “At any level.”

Here’s a more chilling possibility to consider: while the Anthropocene is now being recommended as an epoch, it could wind up becoming an even larger dynasty of Earth time. It could be a period, superseding the Quaternary’s 2.6-million-year reign, or an era, punting us out of the Cenozoic, which began when an enormous asteroid slammed into the planet, triggered supervolcanic eruptions, and killed off the dinosaurs. That will depend on how dramatically people change the Earth over the centuries to come, and on whether there’s anybody around to discuss the matter after we do.

Finally, there’s a more philosophical question to ponder: does the formal adoption of the Anthropocene by a group of hidebound academics really matter? The term has already seeped into popular speech, and entire university courses are being taught on the Anthropocene. Whether or not geologists can convince themselves that our plastic coffee cups will last through the ages, the Anthropocene is a tool for framing our rapidly changing world, and for convincing society that those changes are serious.

That is perhaps the most important point of all. If we can get enough people to care—soon—that humanity has become a force of nature, then just maybe, to the geologists of the distant future, the Anthropocene will seem nothing more than a few curious, but ultimately forgettable, dust particles clinging to the wall of time. It may be the best fate we can hope for.