For 30 years, savvy pixel-pushers have been using Photoshop to manipulate and edit imagery, but now that computers can create doctored photos all on their own using advanced AI, Adobe wants to leverage its image-editing tools to help verify the authenticity of photographs.

As Wired reports, Adobe partnered with a handful of companies last year to help develop its Content Authenticity Initiative: an open standard that stamps photographs with cryptographically protected metadata such as the name of the photographer, when the photo was snapped, the exact location where it was taken, and the names of any editors who may have manipulated the photo along the way, even if it’s just a basic color correction.

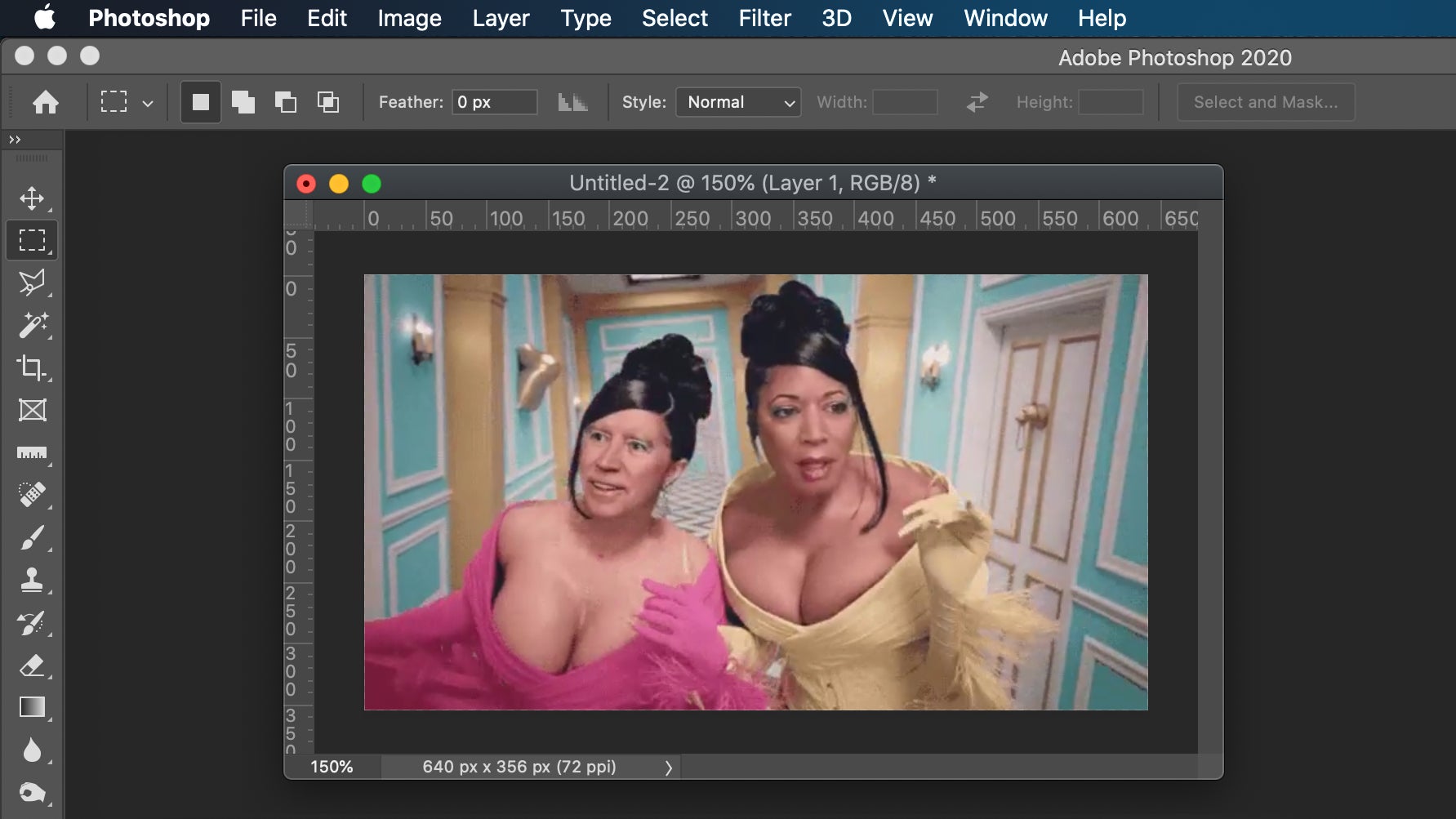

Later this year, Adobe plans to incorporate the CAI tagging capabilities into a preview release of Photoshop so that as images are opened, processed, and saved through the app, a record of who has handled or manipulated the photo can be continuously documented in an ever-growing log built right into the image itself. The CAI system will also include information about when a photo was published to a news site like The New York Times (one of Adobe’s original partners on this initiative last year) or a social network, such as Adobe’s Behance, where users and artists can easily share their creations online.
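
To make the idea of an "ever-growing log" concrete, here is a minimal sketch of an append-only edit history in which each entry records a hash of the one before it. This is an illustration only, not the CAI format, which the reporting doesn't spell out; the field names (`actor`, `action`, `when`, `prev`) and the helper functions are hypothetical.

```python
import hashlib
import json


def entry_digest(entry: dict) -> str:
    """Hash an entry deterministically (sorted keys give a stable JSON form)."""
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(log: list, actor: str, action: str, when: str) -> list:
    """Return a new log with one more entry linked to the previous one."""
    prev = entry_digest(log[-1]) if log else None
    return log + [{"actor": actor, "action": action, "when": when, "prev": prev}]


def chain_is_intact(log: list) -> bool:
    """Check that every entry still points at the digest of its predecessor."""
    if log and log[0]["prev"] is not None:
        return False
    return all(
        log[i]["prev"] == entry_digest(log[i - 1]) for i in range(1, len(log))
    )


# Hypothetical history: capture, a basic edit, then publication.
log = []
log = append_entry(log, "photographer", "capture", "2020-06-01T10:00:00Z")
log = append_entry(log, "photo editor", "color correction", "2020-06-01T12:30:00Z")
log = append_entry(log, "news site", "publish", "2020-06-02T08:00:00Z")
print(chain_is_intact(log))  # True

# Quietly rewriting an earlier step breaks the link stored in the next entry.
log[1]["action"] = "none"
print(chain_is_intact(log))  # False
```

Chaining alone only protects entries that have a successor. The most recent entry, and the log as a whole, would still need a signature to be trustworthy.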

The CAI system does have the potential to help slow the spread of disinformation and manipulated photos, but it will require users to have access to the secured metadata, and to take the initiative to verify that an image they’re looking at is authentic. For example, photos of a supposed violent protest shared the next day on Facebook could be easily debunked by the metadata revealing the images were actually shot years ago in another part of the world.
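
The protest example boils down to comparing the capture date in the metadata against the date being claimed for the event. A rough sketch of that check, assuming the capture date has already been read from verified metadata (the function name and the one-day tolerance are made up for illustration):

```python
from datetime import date


def capture_matches_claim(captured_on: date, claimed_on: date,
                          tolerance_days: int = 1) -> bool:
    """True if the capture date falls within a small window of the claimed date."""
    return abs((claimed_on - captured_on).days) <= tolerance_days


# A photo shared as "yesterday's protest" that was actually shot years earlier.
claimed = date(2020, 6, 2)
print(capture_matches_claim(date(2020, 6, 1), claimed))   # True
print(capture_matches_claim(date(2015, 3, 14), claimed))  # False
```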

For it to be effective, the CAI approach has to be widely accepted and implemented on a large scale by those creating photo content, those publishing it, and those consuming it. Photographers working for official news organizations could easily be required to use it, but that’s a tiny sliver of the imagery being uploaded to the internet on a daily basis. For the time being, it doesn’t seem like social media giants such as Twitter or Facebook (which owns Instagram) are planning to jump on the CAI bandwagon, which is problematic given that’s where a lot of so-called “fake news” gets posted and extensively shared now.

The use of cryptography does make it hard for the CAI metadata embedded in the images to be tampered with, but it’s not impossible. There’s also the potential for the metadata to be completely stripped off an image and replaced with fake information. The CAI system, at least in its current form, doesn’t include any safeguards to prevent people from taking screenshots and then modifying the signed and authorized images. One day such safeguards could be built into an operating system, limiting the ability to screenshot an image based on its CAI credentials, but that’s a long way off.
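
The difference between tampered metadata and stripped metadata can be shown with a small sketch. It is not how CAI is implemented: a real system would presumably use public-key signatures, whereas this example swaps in a shared-secret HMAC from Python's standard library to stay self-contained. The key, the field names, and the `sign` and `check` helpers are all hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical demo key; a real scheme would rely on public-key signatures.
SECRET_KEY = b"demo-key-not-for-real-use"


def sign(image_bytes: bytes, metadata: dict, key: bytes = SECRET_KEY) -> str:
    """Bind the metadata to the exact pixels by authenticating both together."""
    message = hashlib.sha256(image_bytes).digest()
    message += json.dumps(metadata, sort_keys=True).encode("utf-8")
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def check(image_bytes, metadata, tag, key: bytes = SECRET_KEY) -> str:
    """Classify an image as 'valid', 'tampered', or 'unverifiable'."""
    if metadata is None or tag is None:
        return "unverifiable"  # nothing to check, e.g. a screenshot
    expected = sign(image_bytes, metadata, key)
    return "valid" if hmac.compare_digest(expected, tag) else "tampered"


pixels = b"original pixel data"
meta = {"photographer": "A. Example", "captured": "2020-06-01"}
tag = sign(pixels, meta)

print(check(pixels, meta, tag))                                # valid
print(check(pixels, {**meta, "captured": "2015-01-01"}, tag))  # tampered
print(check(b"edited pixel data", meta, tag))                  # tampered
print(check(b"screenshot pixel data", None, None))             # unverifiable
```

The last case is the weak spot described above: a screenshot carries no credentials at all, so the check can only say the image is unverifiable, not that it is fake.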

In the world of digital photography, Adobe carries a lot of weight and influence, and it will need to leverage that as much as it can for its Content Authenticity Initiative to have any hope of being an effective tool against fakes. Getting a handful of big-name newspapers on board just isn’t enough. Support for the CAI system has to be baked into digital cameras, computers, mobile devices, and any platform that can be used to share images en masse. And it needs to be coupled with a big push not only to educate users on how to use this information to spot fake news, but also to instill a desire to actually take a few extra seconds to verify for themselves whether an image is real or not, and that might be the biggest hurdle. If the pandemic has taught us anything, it’s that people are eager to believe anything that supports their own ideals, no matter what the experts might say.