It’s heeeereeeee: the 2020 elections are just four days away, which means the next 100-plus hours may be the longest of your life. Given that the results of the election are unlikely to be known on November 3 and the current presidential administration has made clear it intends to claim victory no matter what happens, it might be more prudent to log off than to torture yourself with every bit of minutiae that scrolls past. But you’re not going to do that, so prepare yourself. This is Hellfeed: Election Edition.

Bluster’s Last Stand: Senate Republicans mount last effort to scare Facebook, Google, and Twitter into doing their bidding during the election

The GOP-controlled Senate Commerce Committee, having obtained subpoenas to compel Twitter CEO Jack Dorsey, Google CEO Sundar Pichai, and Facebook CEO Mark Zuckerberg to testify, held a hearing on Section 230 of the Communications Decency Act this week. Section 230 is the law that limits the liability of website operators for user-generated content and their moderation decisions; Republicans on the committee have proposed ill-advised reforms that would make it easier for conservatives to promote a conspiracy theory that liberal tech companies are systematically censoring them.

Despite the ostensible topic, this hearing ended up not really being about Section 230; the topic came up only briefly and intermittently, with no real developments to speak of. Instead, it served as a last-minute pre-election opportunity for GOP members of Congress to stage a predictable, exhausting, anti-democracy struggle session. Their clear purpose going in was either to force the three companies to roll back their election policies or, at the very least, to lay the groundwork to claim Big Tech helped steal the election for Joe Biden. It was also a wasted opportunity; aside from the lack of a substantive discussion on 230, there wasn’t much time for antitrust or privacy concerns.

Republicans berated the CEOs as arms of the Democratic Party and bombarded them with irrelevant gotchas, many of which were right-wing grievances incomprehensible to the general public. Dorsey (whose company’s value and influence are a piddling fraction of Facebook’s or Google’s) nonetheless took the brunt of the blast, likely because many conservative influencers who claim to represent the populist soul of the party have been banned from the platform or forced to delete bigoted tweets.

Republicans on the committee demanded that Dorsey explain why the company blocked a New York Post article alleging corruption by Biden’s son in Ukraine, “censored” Donald Trump’s tweets but left up others by Iran’s Ayatollah Khamenei, and did not delete a post by a Chinese government official accusing the U.S. of engineering the novel coronavirus. (It was also surreal to see GOP senators use the opportunity to settle irrelevant personal grievances, such as an angry Ron Johnson yelling at Dorsey that a specific tweet joking he had murdered a dog was voter suppression and Marsha Blackburn demanding to know whether Google had fired an engineer who had criticized her once.) From the New York Times:

“Mr. Dorsey, your platform allows foreign dictators to post propaganda, typically without restriction,” [Senator Roger Wicker] said, “yet you typically restrict the president of the United States.”

[…] “I don’t like the idea of unelected elites in San Francisco or Silicon Valley deciding whether my speech is permissible on their platform,” [Senator Cory Gardner] said, “but I like even less the idea of unelected Washington, D.C., bureaucrats trying to enforce some kind of politically neutral content moderation.”

[…] “Mr. Dorsey, who the hell elected you and put you in charge of what the media are allowed to report and what the American people are allowed to hear,” [Senator Ted Cruz] said.

Dorsey, looking somewhere between tired and uninterested, had explanations for each concern, but his answers were clearly not what the GOP senators were looking for (Twitter didn’t censor Trump; it attached fact-checking labels to tweets on specific protected topics. The decision on the Post article was a mistake, and Iran’s leader was engaging in routine saber-rattling not prohibited by the rules).

Zuckerberg was mostly grilled by Democrats about Facebook’s election-security preparedness and its efforts to restrict the activities of militant groups, while Pichai was barely acknowledged. Many Democrats used their time to denounce the hearing as a stunt designed to intimidate tech CEOs before the elections, with Hawaii Senator Brian Schatz calling it a “sham.” Note that Democrats unanimously voted to serve the subpoenas on the three CEOs, though they claimed they had no idea Senate Republicans would hold the hearing before November 3.

According to a tally from the Times, of the 129 questions asked, just four were on antitrust, four were on privacy, and two were on security. A whopping 81 were on censorship (69 of them from Republicans) and an additional 35 were on misinformation (28 by Democrats).

Will any of this sway Big Tech? It’s not likely. For all the bluster on display, the tech companies in question have unquestionable First Amendment rights to run their sites as they see fit and Section 230 protections against lawsuits. Until that changes, Congress is essentially yelling at the mods.

It’s hot bullshit

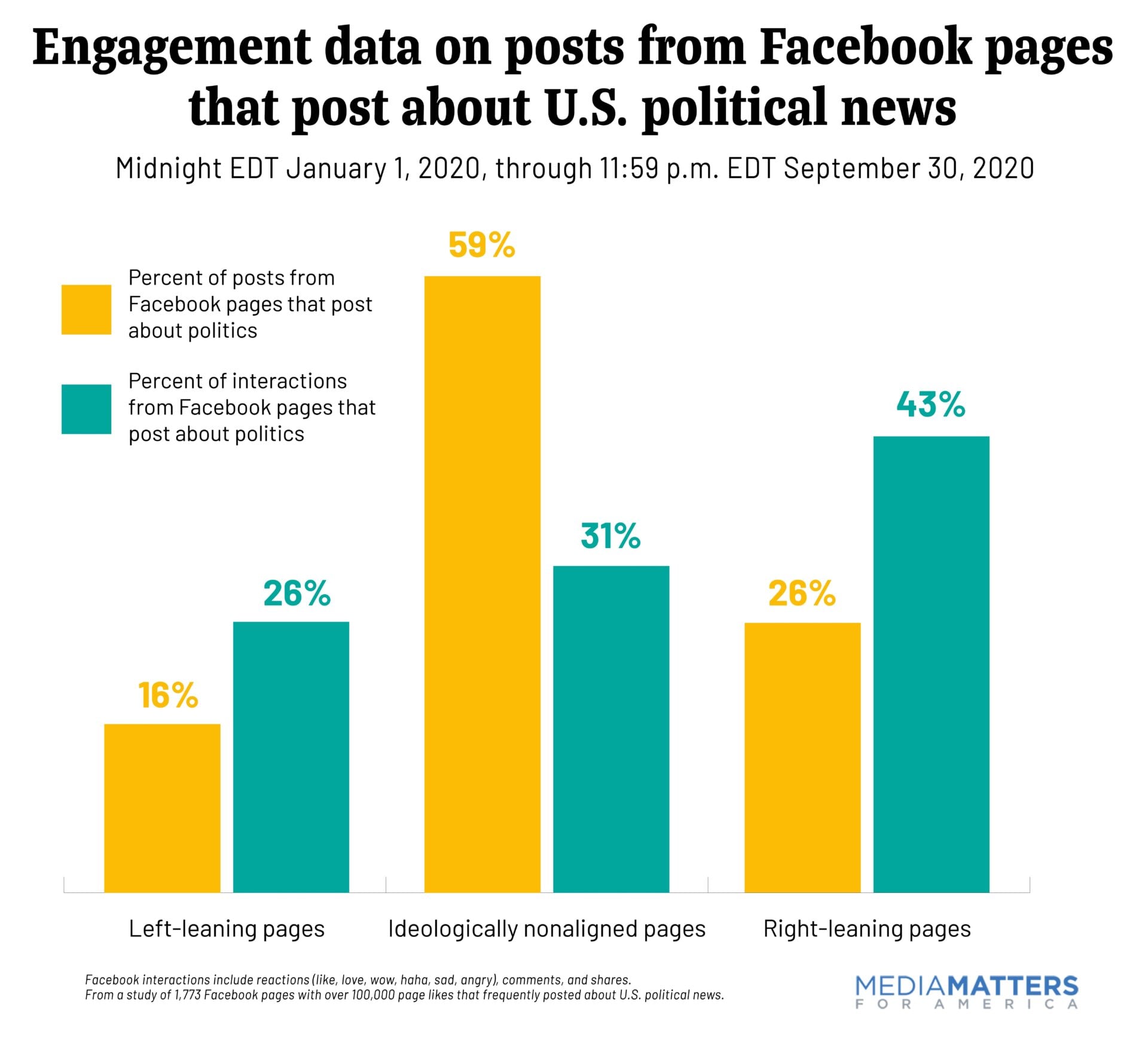

Liberal media watchdog Media Matters released the results of a nine-month examination of Facebook traffic this week, concluding that right-leaning pages consistently outperformed left-leaning ones. Conservative pages accounted for only 26 percent of posts in the sample but gained 43 percent of total interactions. Left-leaning pages were responsible for less than 16 percent of posts but got 26 percent of all interactions, performing at around the same per-post rate as right-leaning pages. Both performed considerably better than non-aligned pages.

FCC issues its legal rationale for becoming Trump’s speech police

The Federal Communications Commission, which has said it will consider a separate and equally inane proposal from the White House to reinterpret Section 230 in a manner that would allow it to punish websites for banning conservatives, laid out its legal rationale for doing so last week.

Critics, including a Republican FCC commissioner, have said the FCC does not have that type of rule-making authority over 230. The FCC’s response is that Section 201 of the Communications Act gives it jurisdiction over social media sites, even though those sites are not common carriers. This is the same section it cited when repealing net neutrality, arguing that it did not authorize the agency to regulate service providers as common carriers.

If this reeks of bullshit, that’s because it is. But seeing as this plan would require a second Trump term to enact, legitimacy might not be at the top of the FCC’s mind.

Have you considered rebranding as the Qu Qlux Qlan?

QAnon, the far-right conspiracy theory asserting Democrats and celebrities are Satanic antifa pedophiles, spread like wildfire on Facebook—with groups devoted to it collectively having audiences in the millions before the site announced a sweeping crackdown earlier this month.

So how’s QAnon doing now that it’s been banned from Facebook? In many ways, it has emerged unscathed: many adherents are flocking to alternatives like encrypted messaging app Telegram and faux free speech site Parler, or have simply branded their posts #SaveTheChildren, a phrase stolen from the name of a legitimate anti-sex-trafficking organization.

They’re also funding themselves by selling merch on Amazon, which currently lists over 1,000 items in a search for “QAnon.” (Last week, crowdfunding site Patreon terminated QAnon accounts.) Relatedly, a report by the Institute for Strategic Dialogue and the Global Disinformation Index found that upwards of 70 U.S. hate groups have managed to retain access to 54 online fundraising mechanisms, including PayPal, Facebook fundraisers, payment processor Stripe, and Amazon.

If your apps got a little suckier…

Some social sites have introduced speed bumps in the hope that they will slow the spread of chaos in the lead-up to and aftermath of November 3. Twitter has modified the retweet function to be less convenient, while Instagram has yanked the ability to sort hashtags by most recent.

Google also bans political ads for a week after the election

Google said this week that it will ban all ads related to the 2020 elections for a week after November 3, following the lead of Facebook, which announced a similar policy earlier this month. The move is designed to limit confusion about the results of the election, which could take weeks to be decided.

Hello neighbor, never talk to me again

Community app Nextdoor, which allows neighbors to trade news, gossip, casual racism, and exaggerated accounts of crime, has seen usage surge during the pandemic. According to a report by Recode this week, it is now, unsurprisingly, flooded with conspiracy theories and misinformation about the 2020 election, such as anecdotal reports of fraud by election workers, QAnon organizing, and backlash to measures by health authorities to control the coronavirus.

Good luck fellas

A group of QAnon supporters is suing YouTube in federal court, claiming it violated their First Amendment rights and caused “irreparable harm” to them and the public by banning them before the 2020 elections. Presumably they are holding their wallets out to their lawyers and shouting “bill me by the hour on my doomed lawsuit, daddy!”

maga2020!

A Dutch security researcher claimed last week that he was able to log into Donald Trump’s Twitter account with the obvious password “maga2020!” without encountering two-factor authentication or any other security measures. The White House has denied the report and Twitter says it has no evidence to support it.

Glenn Greenwald out at The Intercept

Journalist Glenn Greenwald—the reporter behind numerous leaks of U.S. government secrets, including the Edward Snowden leaks showing mass digital surveillance of U.S. citizens, but who more recently has become known for starting bitter feuds with colleagues and random Twitter leftists—has resigned from the Intercept, the publication he co-founded.

Greenwald claimed that editors at the Intercept “censored” one of his articles backing the New York Post’s (dubious) reporting on Hunter Biden’s supposed emails. The site in turn issued a very blunt statement accusing Greenwald of presenting a narrative around his departure filled with “distortions and inaccuracies—all of them designed to make him appear as a victim, rather than a grown person throwing a tantrum.”

Absentee landlords

Slate has a comprehensive story on how Facebook’s business model abroad—barreling into new countries without hiring any local staff or anything—hasn’t worked out so hot in Nigeria or other African countries. It’s not clear how well Facebook understands the complexities of the region and it’s relied heavily on automated tools that aren’t always accurate. For example, it mislabeled imagery associated with mass protests against Nigeria’s brutal Federal Special Anti-Robbery Squad (which was disbanded this month) as misinformation.

Facebook hasn’t clarified much, according to Slate:

There are so many things we do not know about Facebook’s content moderation practices, especially in African countries. For example, how many content moderators are there dedicated to the African region broadly and Nigeria specifically? How do these moderators work together with local fact-checkers and what informs their actions? When are human judgments brought into automated decision-making systems? Also, beyond Nigeria, how inclusive and representative are moderators with respect to language, subregions etc.?

[wearily] Yagshemash!

TechDirt’s Mike Masnick argues that Borat actor Sacha Baron Cohen, who has strongly advocated that tech firms do more to fight conspiracy theories, shouldn’t have gotten mad when Facebook’s AI blocked one of his articles with an image saying “COVID-19 IS A HOAX.”

Crackdowns in China and Vietnam

Chinese state censors have reportedly doubled down on their efforts to police Sina Weibo, a social network with hundreds of millions of users. According to a report by Rest of World, research shows thousands of accounts have been deleted in recent years, and the pace of such deletions is speeding up. Meanwhile, brigading by nationalist users, who urge thousands of others to report posts criticizing the government, has reached a fever pitch. In Vietnam, Facebook is complying with government demands to blacklist political dissidents.