The Curiosity Rover has been a remarkably resilient piece of machinery, but even the toughest robot occasionally needs a tune-up. So how does that happen when we’re down here and Curiosity is all by its lonesome up on Mars?

It only makes sense for Curiosity to be as autonomous as possible; it’s way up there and we’re way down here. Many things that a pair of human hands and eyes could dispatch in just a few minutes require elaborate technical workarounds, and one of those things is figuring out just what a rock is made of. With no geologist standing by, Curiosity instead attacks said rock with a laser, using the resulting flash of light to figure out exactly what component parts are in the rock.

https://gizmodo.com/watch-the-curiosity-rover-shoot-a-martian-rock-with-a-l-1606765527

It’s called the ChemCam, and it’s a pretty cool workaround, but in the past several months that workaround has gotten a lot more difficult due to a broken autofocus. With no means of either replacing the focuser or getting a technician to pop the hood, researchers instead settled for a painfully slow system of taking multiple pictures and sending them back to Earth until they finally got one that was in focus.

Unsurprisingly, this got old quick. But with a hardware fix out of the question, there was only one more possibility: a software fix. NASA put together some code for Curiosity to automate the whole process for itself, by taking multiple photos and then calculating on its own which one was most in focus.
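For the curious, here is a rough sketch of what "calculating which photo is most in focus" can look like. This is not NASA's code, and the article doesn't say which method the rover actually uses. It assumes a common sharpness metric (the variance of a Laplacian filter, which gets bigger when an image has crisp edges), and the names `sharpness` and `pick_sharpest` are made up for illustration.

```python
from typing import List, Sequence

# A grayscale image as rows of pixel brightness values (hypothetical format).
Image = Sequence[Sequence[float]]


def sharpness(image: Image) -> float:
    """Score focus as the variance of a 4-neighbour Laplacian.

    Assumption: this is a stand-in metric, not the rover's real one.
    Crisp edges give large positive and negative responses (high variance);
    blurry or flat images give responses near zero (low variance).
    """
    rows, cols = len(image), len(image[0])
    responses: List[float] = []
    # Skip the border pixels, which lack a full set of neighbours.
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            responses.append(
                image[r - 1][c] + image[r + 1][c]
                + image[r][c - 1] + image[r][c + 1]
                - 4 * image[r][c]
            )
    if not responses:
        return 0.0
    mean = sum(responses) / len(responses)
    return sum((v - mean) ** 2 for v in responses) / len(responses)


def pick_sharpest(stack: Sequence[Image]) -> int:
    """Return the index of the highest-scoring image in a focus stack."""
    if not stack:
        raise ValueError("need at least one image")
    scores = [sharpness(img) for img in stack]
    return max(range(len(scores)), key=scores.__getitem__)


# Toy focus stack: a soft ramp, a hard edge, and a featureless frame.
blurry = [[0, 2, 4, 6, 8, 10] for _ in range(5)]
crisp = [[0, 0, 0, 10, 10, 10] for _ in range(5)]
flat = [[5, 5, 5, 5, 5, 5] for _ in range(5)]

best = pick_sharpest([blurry, crisp, flat])
print("sharpest frame index:", best)
```

The appeal of doing this onboard is that only the winning frame has to make the trip back to Earth, instead of the whole stack.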

Of course, before they sent it up to Mars, they had to be sure it would work. So first they tested it out on some of Curiosity’s earthbound twins, living in a lab out in New Mexico. Once they were comfortable with its performance on Earth, they uploaded it to the rover on Mars.

It worked. Plus, according to Roger Wiens, the scientist in charge of the ChemCam, there’s one unforeseen benefit:

“We think we will actually have better quality images and analyses with this new software than the original.”

Not bad.

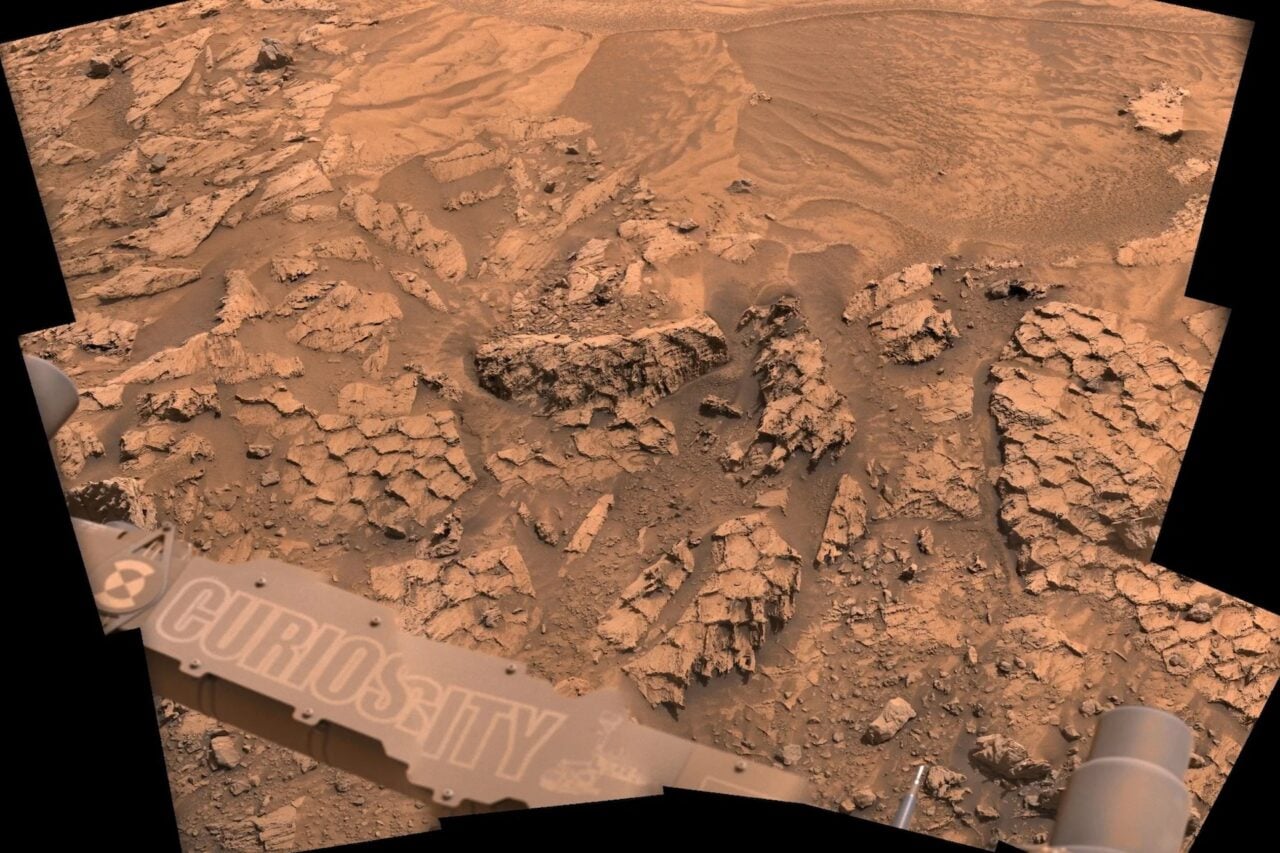

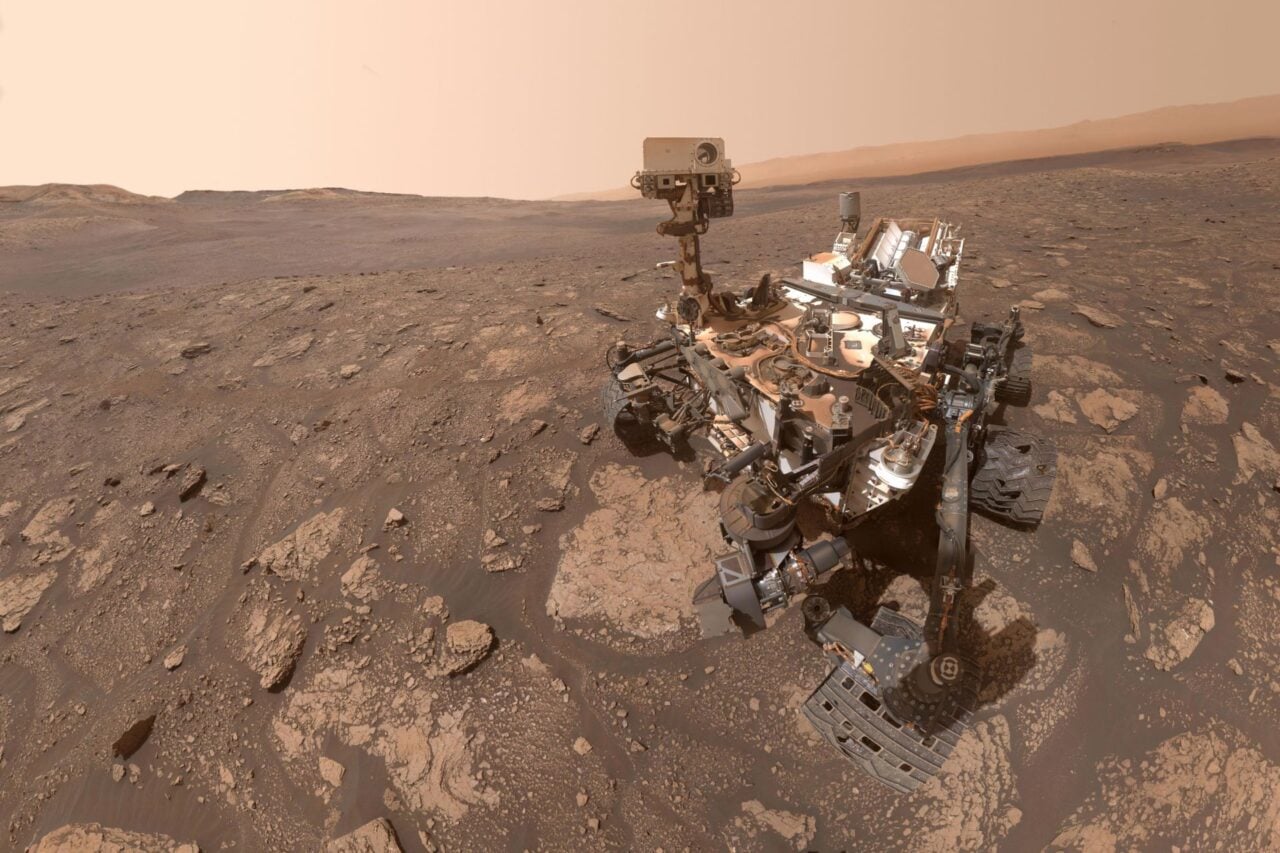

Image: Curiosity self-portrait on Mars / NASA.