It’s nothing to get too excited or alarmed about, but a robot has passed a modified version of the classic King’s Wise Men Test. It’s another classic case of simulation rather than emulation, but the experiment shows how artificial self-awareness can be programmed into our technology.

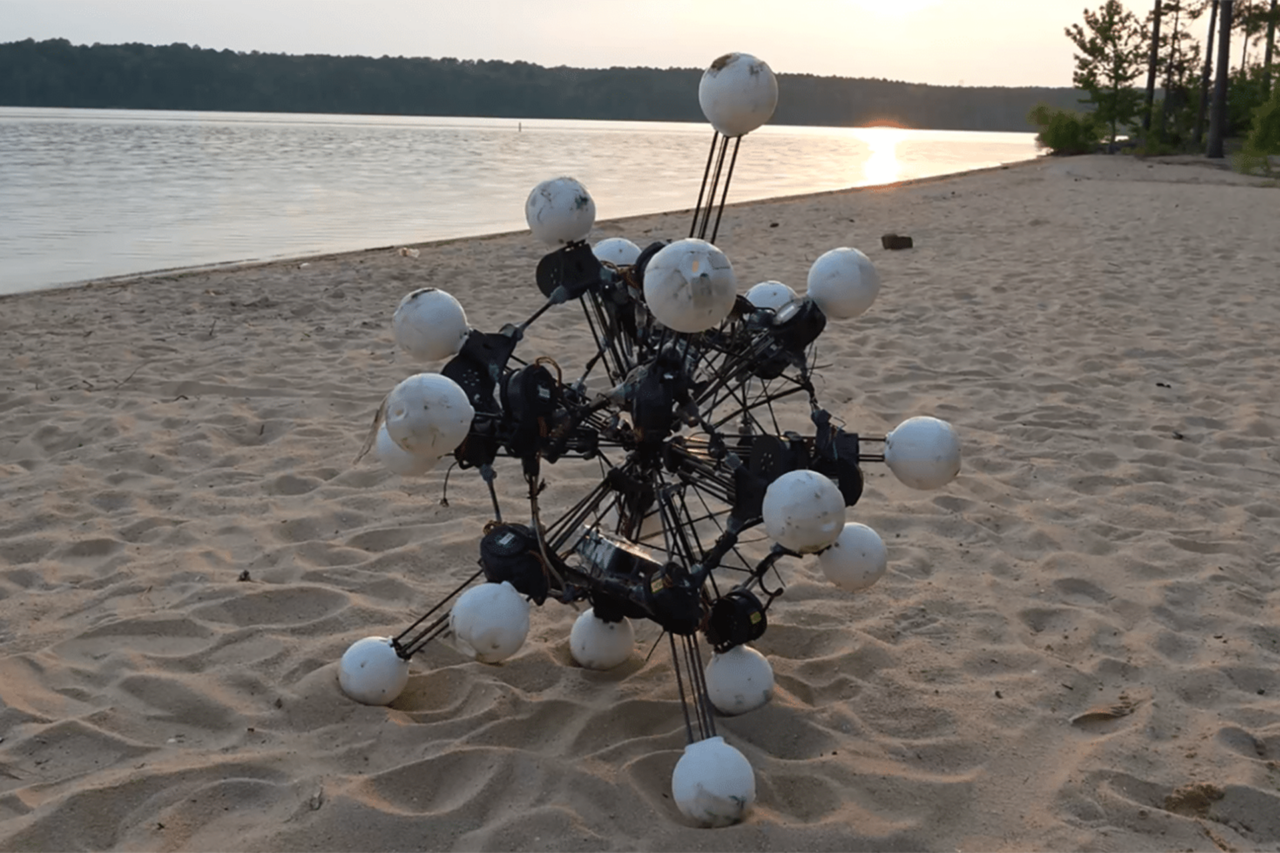

For the experiment, roboticists at the Rensselaer Polytechnic Institute’s Artificial Intelligence and Reasoning Lab adapted three older-model Nao robots to see if they could pass a simple reasoning test indicative of self-awareness.

Inspired by the King’s Wise Men Test (in which three wise men must deduce the color of their own hats), the researchers gave two of the three robots “dumbing pills” that supposedly rendered them unable to speak; in reality, their volume was simply switched off. The third robot received a “placebo” in the form of a pat on the head (a gesture more for the benefit of viewers than of the robots). None of the robots knew which of them had received the pills and which the placebo. The challenge was for the robots to work out which one had been given the placebo.

As the video shows, when the robots were asked the question, the one given the placebo spoke up and said “I don’t know,” at which point it “realized” that it must have received the placebo. After hearing its own voice, it stated: “Sorry, I now know!” That’s a neat result: a demonstration of self-awareness, albeit in a very basic form.
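For readers who want the logic spelled out, here is a toy Python sketch of the inference the placebo robot makes. It is not DCEC and not the RPI team’s code. The `Robot` class, its `muted` flag and its `answer` method are hypothetical stand-ins that assume a muted robot makes no sound and that each robot can only tell whether it heard its own voice.

```python
from dataclasses import dataclass


@dataclass
class Robot:
    """Hypothetical model of one robot in the test (not the RPI code)."""
    name: str
    muted: bool  # True if given a "dumbing pill" (volume switched off)

    def attempt_to_speak(self) -> bool:
        # The robot tries to say "I don't know" and reports whether
        # it heard its own voice; a muted robot hears nothing.
        return not self.muted

    def answer(self) -> str:
        # Before speaking, no robot can tell which treatment it received.
        if self.attempt_to_speak():
            # Premise: a dumbing pill prevents speech.
            # Observation: I heard myself speak.
            # Conclusion: I was not given a dumbing pill.
            return "Sorry, I now know! I was not given a dumbing pill."
        # A muted robot gains no new evidence, so it concludes nothing.
        return "(silence)"


robots = [Robot("A", muted=True), Robot("B", muted=False), Robot("C", muted=True)]
for robot in robots:
    print(robot.name, robot.answer())
```

The point of the sketch is that the robot’s own output becomes new evidence: only after it acts and perceives the result can it rule out the dumbing pill.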

It needs to be said that, by virtue of their human-like gestures and cuteness, there’s a tendency for us to ascribe more intelligence to these robots than they deserve.

It’s also important to point out that there’s no true self-awareness or self-consciousness going on. These bots merely passed a test of self-awareness, which is not an actual measure of self-awareness (similar to how passing the Turing Test is not indicative of self-awareness or human-like intelligence in an AI or chatbot).

These robots were programmed with a proprietary algorithm developed at RPI called DCEC, or Deontic Cognitive Event Calculus. It uses a well-defined syntax and a proof calculus based on natural deduction. Simply put, it’s a codified version of common-sense reasoning.

As noted by team leader Selmer Bringsjord, tests like these should enable developers to build robots with a suite of abilities that make them increasingly useful to humans. Cognitive scientists should likewise take note; analogous autonomic processes may be happening in the human brain.

This work will be presented at the RO-MAN conference in Japan, scheduled for August 31 to September 4, 2015.

H/t The Independent