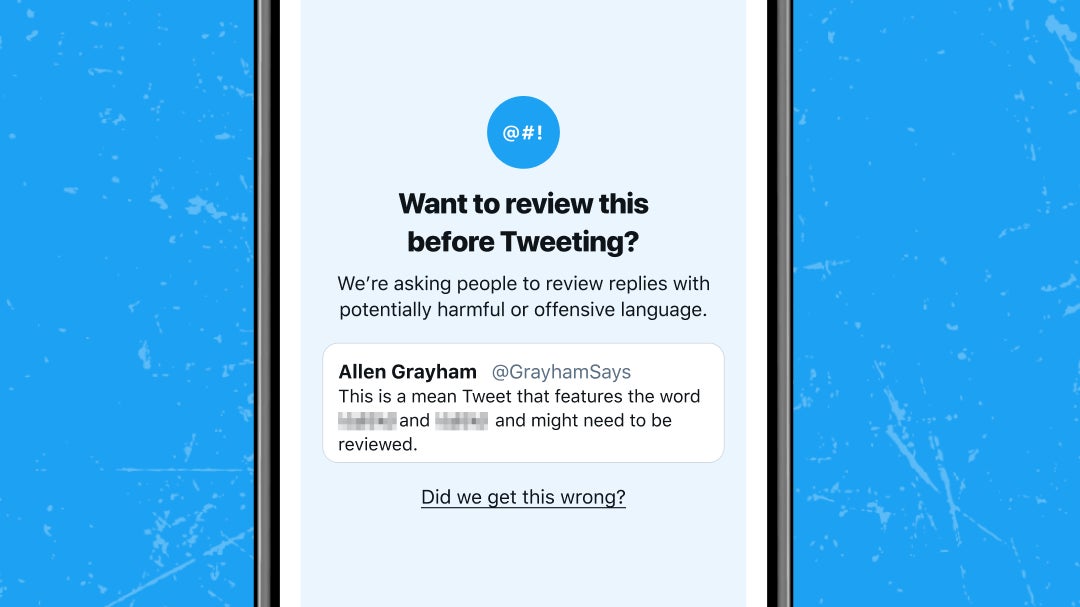

Twitter is (again) asking you to think a bit before you tweet. On Wednesday, the company announced that it is slowly rolling out prompts for English-speaking iOS and Android users that encourage them to “pause and reconsider” a particularly hateful tweet before they hit send.

According to the announcement, Twitter began testing these prompts last year with a limited group of iOS users. The idea was that an algorithm would detect whether a reply contained “insults, strong language, hateful remarks,” or any other hallmarks of a traditionally “offensive” reply. If it did, the app would prompt users to reconsider what they were writing before spewing it onto the platform. Once prompted, these users could edit their tweet, delete it outright, or just… put it out there.

These early tests would sometimes flag replies unnecessarily because algos, being algos, struggled to capture the nuance in everyday conversation, the company said. These systems “often didn’t differentiate between potentially offensive language, sarcasm, and friendly banter,” Twitter wrote.

The version rolling out in 2021 takes those early flubs into account, along with public feedback about the experiment as a whole. One of the fixes mentioned in the company’s blog post is a check on how often the tweeter and the tweetee generally talk back and forth on the platform. When two accounts follow each other and chat publicly on a regular basis, there’s a better chance they understand each other than, say, two accounts where one is some rando from the other’s timeline.

In another win for Nuanced Tweets, the company also announced that photos on the platform will stop being distractingly cropped, which may be a bigger deal for users who care more about nice pictures than about talking shit.