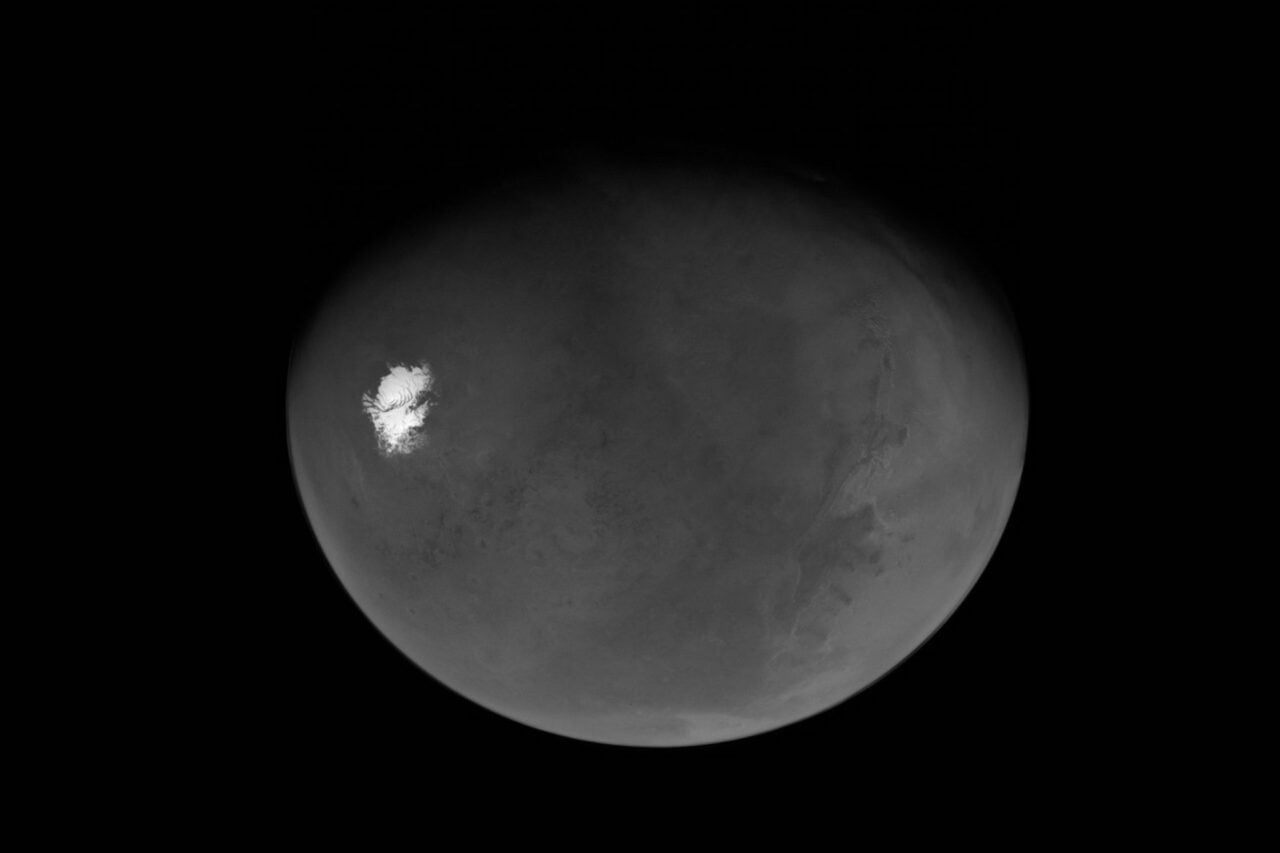

Human wetware is astonishingly good at pattern recognition and interpreting complex, noisy data, but it’s also painfully buggy. Mars is the red planet, except it really isn’t.

When we send robotic explorers to Mars, we equip them with colour calibration targets of known optical properties. Yet, even with those calibrated instruments, the view of the same rock in “true” colour can change dramatically depending on how bright the light is at that time of day. As this panorama from the Spirit rover demonstrates, that change can be visible over the time it takes the robot to slowly swivel its camera across the full field of view.

The problem gets even weirder when you try to compensate for the lighting we’re familiar with instead of the lighting as it actually is on the planet. Here’s Mount Sharp under Mars lighting, then rebalanced to blue skies to look like it would under more familiar Earth-normal lighting:

This leads to an almost philosophical problem: even raw images won’t tell us what we’d perceive in different lighting conditions. The same dusty red rocks can look disconcertingly different under very slight changes in environment.
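To make the rebalancing idea concrete, here is a minimal sketch of one simple approach: scale each colour channel so that a reference patch (such as a calibration target) that looked tinted under one light reads as neutral under another. This is only an illustration, not the processing NASA actually applies to rover images. The function name `rebalance` and every number below are made up for the example.

```python
def rebalance(pixel, source_white, target_white):
    """Scale each RGB channel so that source_white maps onto target_white.

    All values are assumed to be linear RGB in the range 0.0 to 1.0.
    """
    return tuple(
        min(1.0, max(0.0, value * target / source))
        for value, source, target in zip(pixel, source_white, target_white)
    )


# Hypothetical readings, invented purely for illustration.
mars_white = (0.80, 0.60, 0.40)   # a neutral target as it might read under dusty Mars light
earth_white = (0.70, 0.70, 0.70)  # the same target under neutral, Earth-like light
rock = (0.40, 0.24, 0.12)         # a rock as recorded under Mars light

rebalanced_rock = rebalance(rock, mars_white, earth_white)
print(rebalanced_rock)
```

With these invented numbers, the rock shifts from a strong orange toward a more muted brown, because the red channel is scaled down while the blue channel is scaled up. Neither version is more “real” than the other; they answer different questions about the same rock.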

So what do we do with the photographs our robots capture for us? Should we consider the rocks with the warm tints of their home red skies and blue sunsets, or relight them to represent how we’d see them brought to our own blue skies and red sunsets? Should we try to maintain some sort of consistency, or allow the same rock to fluctuate from pale to dark with the time of day the photograph was taken?

[The Color of Mars | NASA]

Top image: Mars looking gloriously red in this true color image of Wdowiak Ridge captured by the Opportunity Rover. All images credit: NASA/JPL

Contact the author at [email protected] or follow her at @MikaMcKinnon.