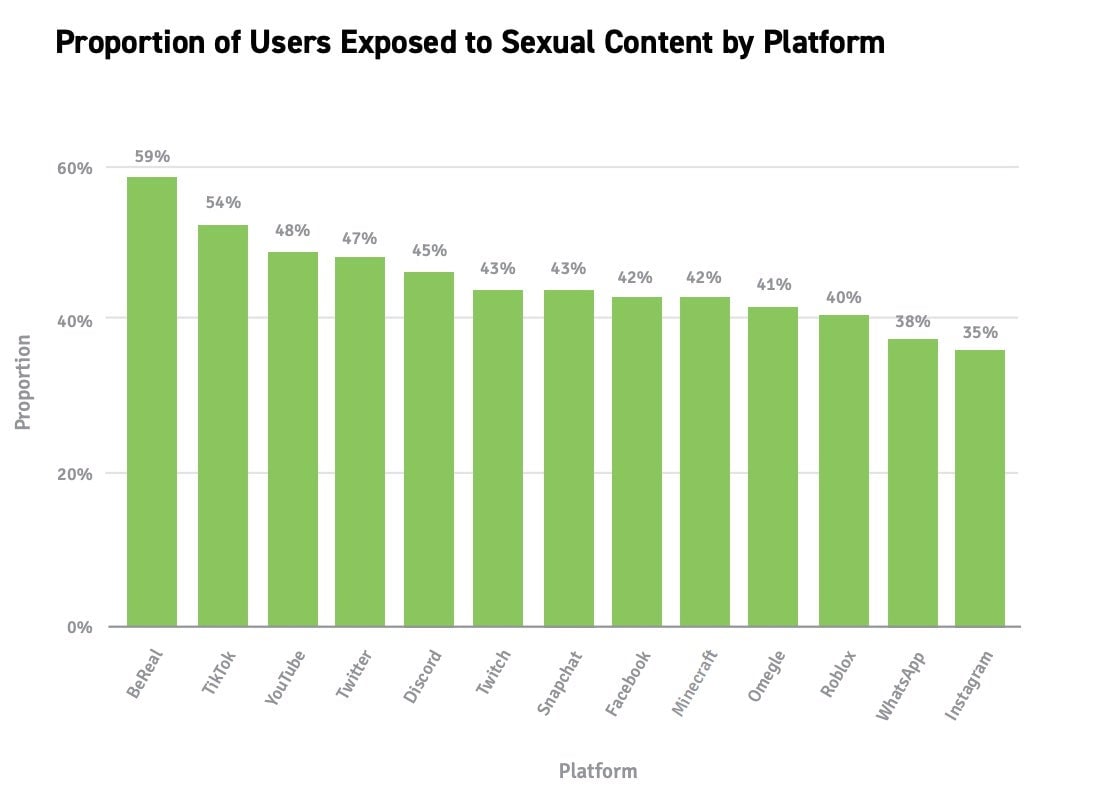

BeReal, a popular new spontaneous image-sharing app designed to show life, “without filters,” had the highest proportion of child users exposed to sexual content of any major social media app, according to a new survey shared with Gizmodo. Larger apps like YouTube and TikTok had more overall incidents of exposure to sexual content, but users on BeReal were the most likely to actually interact with the content, the survey found. Similarly, the survey of parents showed BeReal had the highest proportion of child users who have shared sexually explicit images of themselves on the app.

Those findings are part of a large survey of US parents conducted by ParentsTogether Action, a nonprofit organization that advocates for tougher online protections for teens and kids. The survey of 1,000 parents found instances of child sexual abuse and exploitation on every major social network. More than a third (34%) of parents surveyed said they believed their children had been exposed to sexually explicit content online. More than 40% of the kids exposed to sexual content were under 12 years old at the time. One in five were nine years old or younger. ParentsTogether Action previously sent a letter to Meta and TikTok demanding meetings over the problem of child suicide after documents showed Mark Zuckerberg was informed of harms suffered by young users.

“My kids, who are both in high school, have definitely been exposed to inappropriate content (sexual and otherwise) on Instagram and Snapchat,” said Holly Cook, a mother who responded to the survey. “It’s nearly impossible to stay on top of what they are seeing and talk about it with them so they don’t normalize the words and images.”

BeReal and TikTok, the two top social networks named in the survey, did not immediately respond to Gizmodo’s request for comment. YouTube, which came third on that list, told Gizmodo it removes sexually explicit content and other content targeting children or families that contains “mature sexual themes.” Content that endangers minors’ emotional and physical well-being, the spokesperson added, is prohibited under its child safety policy. YouTube additionally may age-restrict certain content that does not technically violate its policies but is deemed inappropriate for users under 18.

“Additionally, we age-restrict content that may not be appropriate for viewers under 18 and have established quality principles for kids and family content to guide our recommendation system,” the spokesperson said in an emailed statement. “Accounts belonging to people under 13 must be on YouTube Kids or supervised experiences, and for these accounts, we provide parents with a range of options and tools to control what their families do and see online.”

Parents surveyed say social media use is basically ubiquitous among kids. Of the parents surveyed, all of whom had children under the age of 18, 97% said their kids use social media. A majority, 60%, said their children use social media multiple times per day. Responding parents also said 1 in 3 children were exposed to social media before the age of five, despite most platforms setting minimum sign-up age requirements at 13.

The disturbing findings not only highlight the pervasiveness of sexual material on social networks with young users but also potentially expose glaring gaps in tech moderation practices. With dozens of new child online safety bills making their way through state legislatures and Congress, the survey could serve as a catalyst for advocates to pressure tech companies and lawmakers.

Children who used social media the most frequently were also more likely to come across sexually explicit content, the group found. Kids with disabilities and others identifying as LGBTQ were also significantly more likely to receive sexually explicit requests, according to the survey.

The survey shows young users on tech platforms are still regularly coming into contact with adult strangers despite years of pushback on the issue from parent groups. 30% of the parents surveyed said their child had been contacted by a stranger online while another 24% said they simply weren’t sure. Nearly half (47%) of the children contacted by a stranger were 12 at the time. Around 10% of the parents said they believed a stranger had made a sexually explicit request of their child. One parent described a particularly disturbing interaction on Roblox.

“On Roblox, there were grown men trying to talk to my daughter. Ask when she was alone,” Delila Gonzalez, a mother who participated in the survey, said. “I was reading the messages between the two and knew it was a grown man because he would call my daughter ‘my little dove’. So now none of my daughters are allowed on that game and we do regular monthly phone checks.”

Kids’ online safety bills are coming

Parents responding to the survey were unified in their support for change. The vast majority (93%) said they did not think social media companies were doing enough to keep kids safe from child exploitation or abuse online while another 95% said they support stronger laws to regulate social media. Though parents called for a variety of new safeguards, their most common request was for social media platforms to provide parents with stronger and more accessible parental controls.

Dozens of child online safety bills are currently making their way through state legislatures, some more credible than others. Last fall, California Governor Gavin Newsom signed into law a first-of-its-kind measure forcing tech companies to put in place sweeping new safeguards for users under the age of 18. The law, called the California Age-Appropriate Design Code Act, would pressure companies to default child users to privacy settings that take into account their mental health. The rules also prohibit tech firms from collecting, selling, or retaining a young person’s geolocation, and would prevent firms from encouraging children to provide more personal information. Though the law is set to take effect in 2024, critics, Big Tech among them, worry the broad law could lead to costly new age verification systems. Privacy advocates like the Electronic Frontier Foundation fear the law could actually lead to more data collection by tech platforms.

Then just last month, Utah rushed through its own pair of child online protection laws requiring social media companies to verify users’ ages, obtain parental consent, and most notably set a curfew banning users under the age of 18 from using social media apps between 10:30 PM and 6:30 AM. Legal experts warn some of the Utah law’s provisions, which sound eerily similar to authoritarian measures in China, are guaranteed to face a tsunami of legal challenges.

Regardless of how those two cases play out, online child privacy protections are no longer a question of if, but rather, when and how. Politico estimates at least 27 different kids’ privacy and safety bills have been proposed by 16 states since February.

That broad support for doing something to strengthen kids’ online protections could serve as a palatable middle ground for a deeply divided Congress. Senate Majority Leader Chuck Schumer, according to Axios, intends to bring a handful of proposed child online safety bills to the Senate floor for a vote in the coming months and has even expressed interest in a kids’ online safety and privacy-themed week.

Update, 4/13/2024, 2:12 p.m. EST: Added statement from YouTube.