In a big step towards making humans more bionic, scientists have trained monkeys to control not just one, but two virtual arms by thoughts alone. The work could someday be a boon to double amputees or quadriplegics.

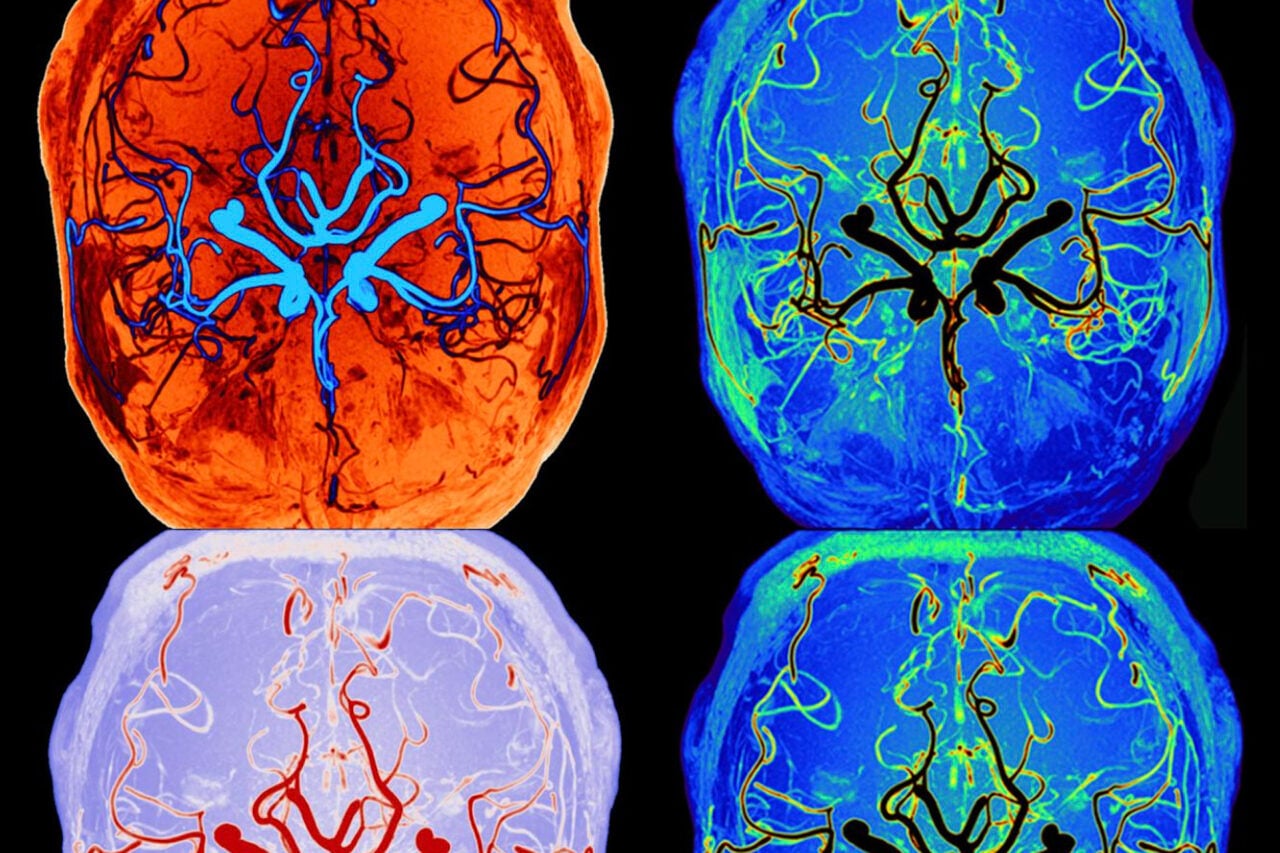

In 2011, researchers led by Duke University neuroscientist Miguel Nicolelis demonstrated a unique two-way interface between mind and machine. The team began by recording activity from up to 200 neurons in the brains of monkeys. Once computer algorithms understood how different neuronal patterns led to specific arm motions, the researchers transposed those movement commands into a virtual world, allowing the monkeys to control a virtual arm. Next, by adding in a technique called intracortical microstimulation, which electrically stimulates sensory neurons in the brain, the researchers gave the monkeys the ability to “feel” the virtual objects they manipulated.

https://gizmodo.com/biotech-breakthrough-monkeys-can-feel-virtual-objects-5846275

It was a cool, groundbreaking experiment with implications for building a sense of touch into future prosthetics. But like the brain-machine interface (BMI) studies that came before and after it, the work only gave monkeys control of a single limb. That limitation stems from how the brain produces movement.

https://gizmodo.com/a-major-breakthrough-in-bringing-the-sense-of-touch-to-1445039422

Research has shown that the brain doesn’t encode the movement of two arms at once as a simple sum of the movement of two independent arms. Instead, the specific patterns of activity in the brain are completely different. This means that the neuronal patterns that researchers have recorded for single-arm movements will not work — at all — for moving two virtual or prosthetic limbs.

So Nicolelis, who was also behind that trippy mind-meld rat experiment from earlier this year, set out to create a BMI that allows monkeys to control two virtual arms with their thoughts alone. “Bimanual movements in our daily activities, from typing on a keyboard to opening a can, are critically important,” he said in a statement. “Future brain-machine interfaces aimed at restoring mobility in humans will have to incorporate multiple limbs to greatly benefit severely paralyzed patients.”

https://gizmodo.com/brain-to-brain-interfaces-have-arrived-and-they-are-ab-5987567

Connecting the Mind to the Machine

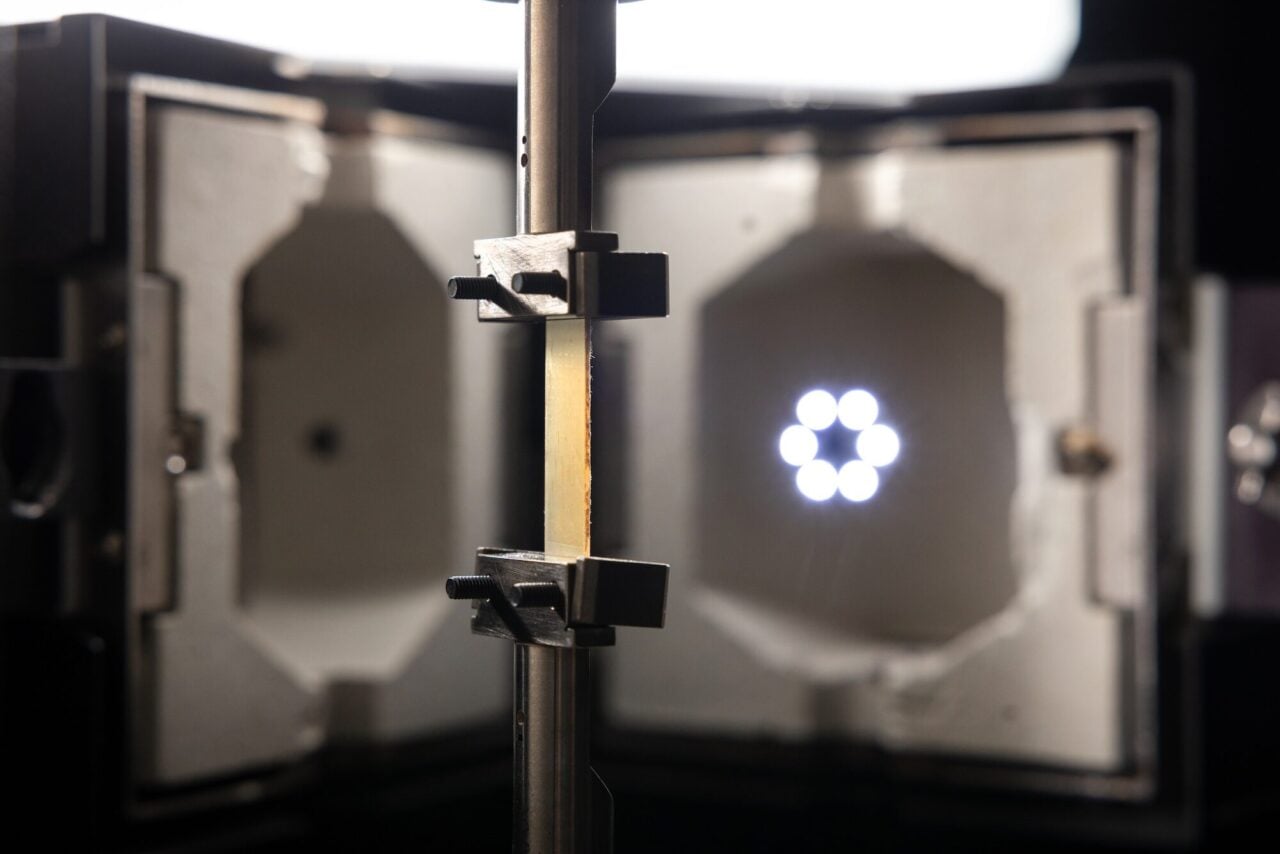

Nicolelis and his colleagues began by implanting electrodes in the brains of two monkeys to record the electrical activity of nearly 500 neurons at once. This is the highest number of neurons simultaneously recorded in nonhuman primates to date, the researchers note in their study, published recently in the journal Science Translational Medicine. The neurons were situated across both brain hemispheres, in the monkeys’ supplementary motor area, primary motor cortex and posterior parietal cortex, areas that work together to plan and execute movement.

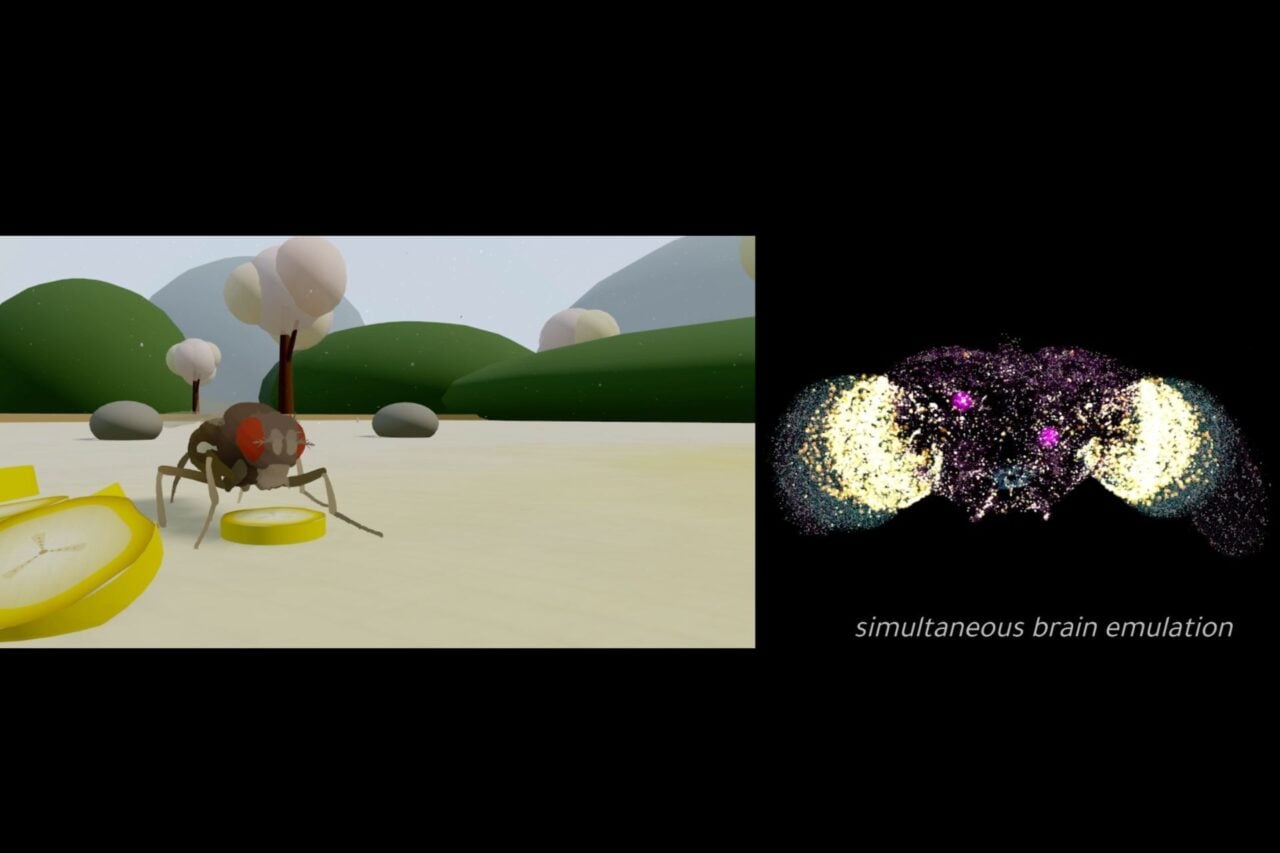

The researchers trained one of the monkeys to control the movement of two avatar monkey arms on a computer screen using two joysticks. To get a juice reward, it had to simultaneously place the virtual arms over two virtual objects for one-tenth of a second. While the primate worked at its task, a computer algorithm analyzed the monkey’s neuronal activity to determine how different patterns related to specific arm movements.
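To make the reward rule concrete, here is a minimal sketch of a check for "both arms over both targets for one-tenth of a second." Everything beyond that rule is an assumption made for illustration: the function names, the 2D coordinates, the target radius and the 100 Hz sampling rate are hypothetical and do not come from the study.

```python
import math

def _inside(point, target, radius):
    """True if a 2D point lies within `radius` of the target centre."""
    return math.hypot(point[0] - target[0], point[1] - target[1]) <= radius

def held_both_targets(left_path, right_path, left_target, right_target,
                      radius=1.0, sample_hz=100, hold_s=0.1):
    """Return True once BOTH arms stay inside their targets for hold_s seconds in a row.

    left_path / right_path are equal-rate sequences of (x, y) samples.
    radius and sample_hz are assumed values, not figures from the study.
    """
    needed = max(1, round(hold_s * sample_hz))  # consecutive samples required
    streak = 0
    for left, right in zip(left_path, right_path):
        if _inside(left, left_target, radius) and _inside(right, right_target, radius):
            streak += 1
            if streak >= needed:
                return True
        else:
            streak = 0  # either arm drifting off resets the hold timer
    return False

# Usage: the left arm parks on its target, the right arm arrives late.
left = [(0.0, 0.0)] * 20
right = [(9.0, 0.0)] * 8 + [(5.0, 0.0)] * 12
print(held_both_targets(left, right, (0.0, 0.0), (5.0, 0.0)))
```

The point of requiring both conditions in the same sample is that parking one arm and then the other would not count; the hold only accumulates while the two arms are on target together.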

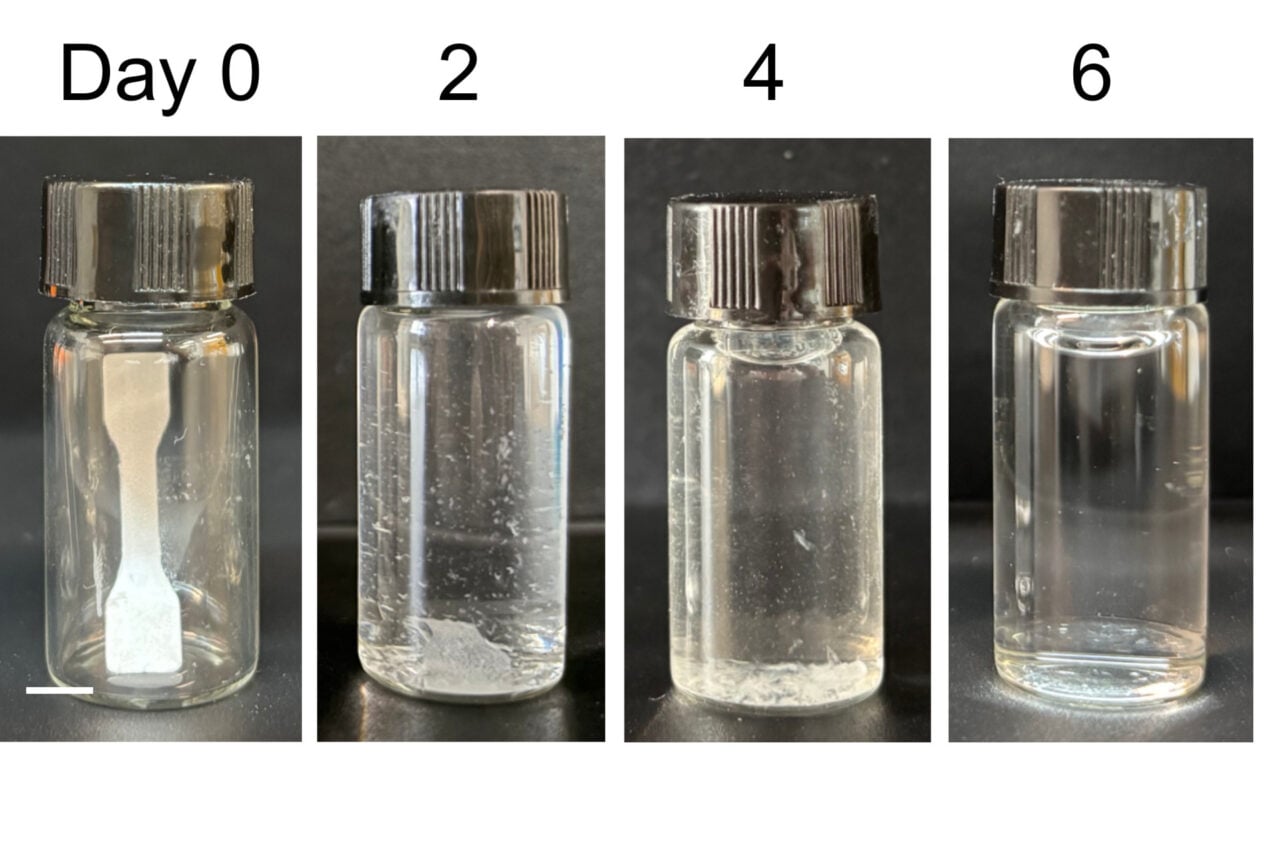

Once the algorithm could accurately predict the monkey’s movements from its brain patterns, the researchers moved on to phase two. The monkey repeated its tasks from the first part of the experiment, but this time the joysticks were disconnected: the primate was actually moving the two avatar arms with just its thoughts, as decoded by the computer algorithm. The researchers then removed the joysticks altogether and strapped the monkey into a chair so it couldn’t move its arms. The monkey quickly learned to move the virtual arms by thought alone.

Importantly, this joystick-based training cannot be used for paralyzed patients or amputees, who are unable to train the algorithm by moving joysticks. So the researchers had the second monkey go through a different training program. Here, the team seated the monkey in a chair and secured its hands on a table in front of it. The monkey simply watched the avatar arms move around on screen, while the algorithm correlated the monkey’s brain patterns with the movement of the avatar arms. It took longer, but the monkey was eventually able to control the virtual arms with thoughts, too.
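Both training routes boil down to the same step: pair recorded neural activity with arm movement (measured from the joysticks, or simply watched on screen) and fit a mapping from one to the other. The article does not say which algorithm the team used, so the sketch below swaps in a plain least-squares linear fit on synthetic data. All names, sizes and noise levels are hypothetical, and the fake "neurons" are far simpler than real ones.

```python
import random

def make_synthetic_session(n_samples=200, n_neurons=12, noise=0.05, seed=0):
    """Fake data: each 'neuron' fires as a linear mix of 4 arm coordinates plus noise."""
    rng = random.Random(seed)
    tuning = [[rng.gauss(0, 1) for _ in range(n_neurons)] for _ in range(4)]
    rates, arms = [], []
    for _ in range(n_samples):
        arm = [rng.uniform(-1, 1) for _ in range(4)]  # left x, left y, right x, right y
        rate = [5.0 + sum(arm[d] * tuning[d][n] for d in range(4)) + rng.gauss(0, noise)
                for n in range(n_neurons)]
        rates.append(rate)
        arms.append(arm)
    return rates, arms

def fit_linear_decoder(rates, arms, ridge=1e-6):
    """Least-squares fit of arms ~ [rates, 1] * W, solved via the normal equations."""
    n_feat = len(rates[0]) + 1                      # +1 for a bias term
    n_out = len(arms[0])
    X = [list(r) + [1.0] for r in rates]
    # A = X^T X (+ a tiny ridge for numerical stability), B = X^T Y
    A = [[sum(x[i] * x[j] for x in X) + (ridge if i == j else 0.0)
          for j in range(n_feat)] for i in range(n_feat)]
    B = [[sum(x[i] * y[k] for x, y in zip(X, arms))
          for k in range(n_out)] for i in range(n_feat)]
    # Gauss-Jordan elimination with partial pivoting on the augmented matrix [A | B]
    M = [a + b for a, b in zip(A, B)]
    for col in range(n_feat):
        pivot = max(range(col, n_feat), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        p = M[col][col]
        M[col] = [v / p for v in M[col]]
        for r in range(n_feat):
            if r != col:
                f = M[r][col]
                M[r] = [v - f * c for v, c in zip(M[r], M[col])]
    return [row[n_feat:] for row in M]              # W has shape n_feat x n_out

def decode(W, rate_vector):
    """Predict the 4 arm coordinates from one vector of firing rates."""
    x = list(rate_vector) + [1.0]
    return [sum(x[i] * W[i][k] for i in range(len(x))) for k in range(len(W[0]))]

def mean_abs_error(W, rates, arms):
    errs = [abs(p - a) for r, arm in zip(rates, arms) for p, a in zip(decode(W, r), arm)]
    return sum(errs) / len(errs)

# Usage: train on the first 150 samples, then "go joystick-free" on the rest.
rates, arms = make_synthetic_session()
W = fit_linear_decoder(rates[:150], arms[:150])
print(f"held-out mean abs error: {mean_abs_error(W, rates[150:], arms[150:]):.3f}")
```

Note that the decoder is fitted on all four coordinates of both arms at once, from data recorded while both arms are moving. That choice echoes the article's point that two-arm activity is not just two one-arm patterns added together, though a linear toy like this cannot demonstrate that claim.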

The researchers think that in the future, the process of controlling two avatar arms with the mind could be translated to controlling two prosthetic arms. However, this goal may not be reached any time soon, as the movements the monkeys achieved were quite simple. “It still remains to be tested how well BMIs would control motor activities requiring precise interlimb coordination,” they write in their paper.

Check out the study over in the journal Science Translational Medicine.

Media via Duke Center for Neuroengineering.