Content and moderation decisions by tech companies are increasingly the focus of unending kicking and screaming—to the point where it’s now essentially a campaign plank for at least one of the U.S.’s major political parties. The likes of Facebook, Twitter, and Google are also trying to convince the public how well they’re dealing with the flood of disinformation and hateful content they’ve helped unleash by redirecting attention to whichever niche group they banned in the past few days, or citing dubious internal statistics as evidence.

So we’re trying out new formats to round up and summarize some of these developments with ongoing context on a semi-recurring basis in the interest of everyone’s sanity (and perhaps without giving undue weight to every swing around the bullshit merry-go-round). Let us know your thoughts and feedback in the comments below.

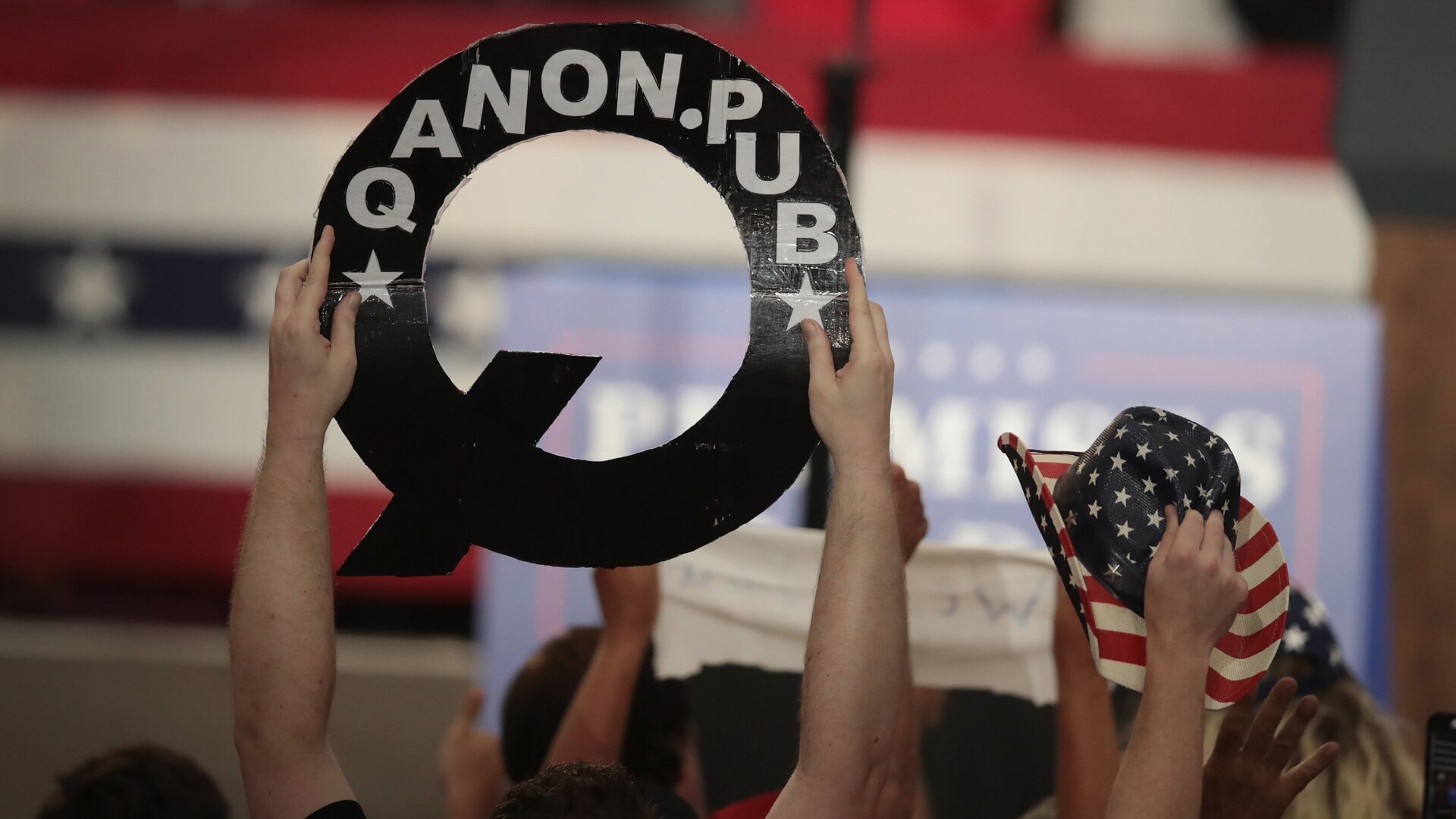

QAnon vs Facebook

Earlier this month, Facebook announced it had banned one of the largest QAnon groups on the site, a decision that conveniently coincided with growing awareness of the sheer scale of QAnon activity there. A few days later, reports by NBC News and the Guardian relayed that hundreds of groups and pages on Facebook and Instagram, which collectively had millions of followers, were left untouched even as Facebook touted the ban as evidence it was doing something. According to NBC, internal Facebook research also showed the company sold 185 pro-QAnon ads that made four million impressions in a previous month.

This week, Facebook expanded its policy on extremism to prohibit activities “tied to offline anarchist groups that support violent acts amidst protests, U.S.-based militia organizations and QAnon.” (Not all QAnon-related content is banned, just content that calls for or speculates about violence.) The company said thousands of groups and pages were kicked off Facebook and Instagram under the expanded rules, though it softened the blow: many anti-fascist groups, a perennial boogeyman for right-wingers who insist against the evidence that they instigate violence at protests, were also given the boot as part of some type of balancing act.

So Facebook wants credit for fighting QAnon, despite its explosion in popularity being driven by the site’s own growth-boosting algorithms. The cat’s already out of the bag; Donald Trump endorsed the QAnon movement this week, and a Facebook spokesperson conceded the company doesn’t think it’s “flipping a switch and this won’t be a discussion in a week.”

Covid-19 Misinformation

Facebook, YouTube, Twitter, and even LinkedIn managed to get their shit together and blunt the spread of a sequel to Plandemic, a hoax documentary about the novel coronavirus that they disastrously failed to prevent from gathering millions of views in May. (The sequel is unfortunately titled Plandemic: Indoctornation.) Facebook prevented users from linking to the video at all, while Twitter hid it behind a warning screen. The video failed to gain anywhere near the momentum of its predecessor.

The main reason for the quick action, though, appears to be that its producers heavily promoted its release, thus giving the sites a heads up. Meanwhile, a report from human rights group Avaaz estimated Facebook has served up at least 3.8 billion views on coronavirus lies, misinformation, and hoaxes during the pandemic.

That’s The Least You Could Do

Short-form video app TikTok, which the Trump admin is currently threatening to ban, said this week it had set up a website and Twitter account, which no one will read, to flag misinformation. Facebook said it is considering installing a “virality circuit breaker” that would limit the spread of posts that are suddenly skyrocketing, providing time for review by its moderators. (Facebook has been quietly pressuring those mods to treat right-wing content preferentially, and its track record on flagging misinformation early is abysmal.)

Laura Loomer Persists

A right-wing think tank demanded that the Federal Election Commission investigate why “Facebook, Twitter, Instagram, Uber, Lyft, PayPal, Venmo, and Medium” have not rescinded bans on Islamophobic conspiracy theorist Laura Loomer now that she’s a Republican nominee for Congress in Florida. Running for Congress is at least the third stunt Loomer has pulled to get back her Twitter account, counting a failed lawsuit and the time she handcuffed herself to the company’s NYC offices.

Burger King’s Mean Streams

Ad agency Ogilvy infuriated hosts on livestreaming platform Twitch by tricking streamers into airing text-to-speech Burger King menu readouts for chump change; Twitch wouldn’t tell Gizmodo’s sister site Kotaku whether that violated ad policies.

Duh

Reddit says it observed a significant (18 percent) drop in hateful content after it banned almost 7,000 subreddits it said engaged in hate speech and harassment.

Kill Switches and Other Facebook Attempts to Appease Angry Masses

Facebook is still trying to come up with a plan to respond to Donald Trump’s inevitable attempt to discredit the results of the 2020 election while somehow not angering conservatives too much. Per the New York Times, the ideas floated so far are a label, placed under posts falsely alleging electoral fraud, that politely reminds users election results aren’t finalized yet, and a “kill switch” that would turn off election ads after the election. Brain trust at work, folks:

… executives have discussed the “kill switch” for political advertising, according to two employees, which would turn off political ads after Nov. 3 if the election’s outcome was not immediately clear or if Mr. Trump disputed the results.

The discussions remain fluid, and it is unclear if Facebook will follow through with the plan, three people close to the talks said.

The Fight For the Presidential Ban

Trump, for what it’s worth, won’t take the hint and is petitioning the Supreme Court to overrule a federal appellate court’s decision that he can’t block critics on Twitter.