In the grand scheme of things, ten years is nothing, an insignificant slice of our planet’s long timeline. But when it comes to technological innovation, a lot can change in a decade, and some of the gadgets, software, and silicon you relied on back in 2010 now seem almost ancient and ready for the antique market.

So let’s reflect on just how far we’ve come, technologically speaking.

Digital photography

Ten years ago, just as they do now, digital cameras ruled photography. Film was a little easier to find back then, but consumers on the hunt for a new camera were sticking with high-quality DSLRs like the Nikon D90 and the Canon EOS 5D Mark II. But 2010 also saw the arrival of the iPhone 4 and other smartphones that started to seriously embrace photography and slowly nibble away at the market share of compact point-and-shoot cameras.

It’s said that the best camera is the one you have with you to actually capture a moment or a memory, and it didn’t take long for smartphones to improve enough for the average consumer to forget why they ever carried a separate camera. An expensive, professional-grade digital camera can still outperform a smartphone on a technical level, but at Apple’s last big product unveil of the decade, the most lauded feature of the new iPhone 11 Pro was its improved photography capabilities, and that praise is more than just hype.

But dedicated cameras haven’t gone the way of analog film just yet. They’ve evolved and still provide a shooting experience that smartphones can’t match, at least for now. Sensors have improved to the point where they’re sensitive enough to produce stunning images in near darkness, and autofocus systems can now lock onto a subject faster than the human eye can. Most importantly, the reign of chunky DSLR cameras is coming to an end. They’re being supplanted by compact mirrorless alternatives, many of which can shoot with fully electronic shutters, that are changing the way photographers, and even videographers, work and shoot.

Display technology

In 2010, Apple revealed the iPhone 4 with a screen, branded the Retina Display, that packed in so many pixels it was almost impossible for the human eye to see them individually. Other smartphones soon followed suit, but the Retina Display and other pixel-packed screens weren’t the only treat for the eyes the past decade delivered.
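As a rough illustration of what “so many pixels” means, pixel density is commonly expressed in pixels per inch (ppi): the screen’s diagonal resolution in pixels divided by its diagonal size in inches. This Python sketch assumes the iPhone 4’s 960 x 640 resolution and a nominal 3.5-inch screen, which works out to roughly 330 ppi, close to the 326 ppi Apple advertised (the small gap presumably comes from the rounded screen size). The function name is an illustrative choice, not anything from Apple.

```python
import math

def pixels_per_inch(width_px, height_px, diagonal_in):
    """Pixel density: diagonal resolution in pixels divided by the
    diagonal screen size in inches (illustrative helper)."""
    return math.hypot(width_px, height_px) / diagonal_in

# iPhone 4: 960 x 640 pixels on a nominal 3.5-inch screen.
print(round(pixels_per_inch(960, 640, 3.5)))  # prints 330
```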

Making the brightest parts of a screen shine like the sun while the darkest parts disappear like shadows was the ultimate goal of companies churning out LCD displays, but the beginning of the decade marked the start of mass production of a new technology that promised to outperform the LCD hardware that had been around for decades. OLED (organic light-emitting diode) screens produce bright, colorful pixels that emit their own light, without the need for a backlight like an LED or a fluorescent tube. OLED displays are thinner, can be less power-hungry, and, because individual pixels can switch off completely, produce images with staggering levels of contrast. The first OLED TVs were small and obscenely expensive, but over the past ten years the technology slowly found its way into affordable consumer tech, starting with MP3 players and fitness trackers, and eventually smartphones.

There’s one other unique feature of OLED displays that promises to revolutionize the shape and form of gadgets over the next 10 years: they can flex and fold. The tail end of this decade saw the reveal of several consumer-ready folding phones from companies like Samsung, Huawei, and Motorola, and while the technology has hit a few speed bumps, it promises a future where dropping your smartphone on the ground doesn’t leave you with a pile of shattered glass to cry over.

Biometric security

Biometric security features on our mobile devices are so ubiquitous, seamless, and reliable today that few of us even remember they’re there while mindlessly tapping a fingerprint reader to complete a purchase, or glancing down at our smartphones to authenticate our identities so that we can check who liked our last Instagram upload. That wasn’t the case a decade ago.

In 2010, biometrics were far from a new concept. Organizations like the FBI and the Department of Homeland Security were already compiling databases of suspects featuring digital scans of fingerprints, irises, palms, and even faces that could be quickly searched and matched. But the specialized hardware they relied on far outperformed what was available to consumers at the time. At the start of the decade, fingerprint readers were widely available in laptops, and the technology had shrunk enough that even Victorinox was able to squeeze a reader onto a secure USB flash drive. It was finicky, though, and more often than not you’d have to carefully swipe your finger across a sensor a couple of times to authenticate your identity.

It was a technology that worked, but it wasn’t seamless, so most consumers were happy to stick with passwords. That changed in 2013, when Apple introduced a feature called Touch ID on the iPhone 5S. By taking advantage of custom security hardware and the iPhone’s beefy processor, Touch ID made fingerprint authentication an instantaneous process, and one that was performed automatically whenever someone reached for their iPhone and pressed the Home button, an action most iPhone users had already been repeating hundreds of times a day for years.

Fingerprints were faster than typing in a PIN code or password, and there was nothing to remember aside from which finger you had registered with your device. But unlike a password, a fingerprint gets left behind on almost everything we touch, and it didn’t take long for even Apple’s Touch ID to be circumvented using just a digital photo of a fingerprint, a printout, and some wood glue.

Four years later, Apple took a technology that many assumed was only available at the highest levels of the CIA, facial recognition, and made it available on the iPhone X. With Face ID, users no longer had to reach for a button to authenticate themselves; they simply had to look at their mobile devices to gain access to their digital lives. It was a feature that made users feel they’d been handed James Bond and MI6’s most covert technology, but unfortunately, not all users have the same experience. While Apple said it thoroughly trained the neural networks that power Face ID on a highly representative group of subjects to ensure there was no performance bias based on gender, age, or ethnicity, facial recognition systems in general have typically favored users with lighter skin. It might not be a problem with Apple’s products, but as facial recognition technology spreads, larger problems could arise if, for example, an ATM refuses to release funds because it has only been optimized for white people, or a threat-detection system throws false positives because it is biased against people with darker skin.

Messaging

There was a time when cellphone carriers nickel-and-dimed subscribers by charging for every text message sent between devices. But smartphones, with their ability to stream video and access the full internet, demanded a lot of bandwidth, which pushed carriers not only to upgrade their wireless networks to remain competitive but also to introduce affordable monthly data plans that let smartphone users upload and download gigabytes of data.

At the start of the decade, apps like WhatsApp and Apple’s iMessage took advantage of this often unlimited mobile data pipeline to give smartphone users an alternate way to message each other and completely avoid the ridiculous charges carriers levied on SMS. For a while, these apps were among the biggest drivers of smartphone sales, particularly for users who wanted to stay in touch with friends and family around the world, since charges for SMS messages inexplicably skyrocketed when they were sent to people in other countries.

They also made instant messaging more ubiquitous, because these apps could be used on more than just devices with a SIM card. iMessage soon became available on the iPad and on Apple’s computers, so you could continue a conversation no matter which device you happened to be using. Microsoft has since released the Your Phone app for Windows, which gives Android users a similar ability, and apps like Telegram, WhatsApp, and Signal all let you message across a wide variety of phones and other devices. In just a decade, texting a friend over SMS has come to feel almost as antiquated as sending them a telegram or tapping out a message in Morse code.

Voice recognition

Software like Dragon NaturallySpeaking promised a work routine free of endless hours spent at a desk pounding away on a keyboard. You could simply dictate emails, messages, and memos, and all your words and commands would be automatically processed, understood, and executed. By 2010, voice recognition had come a long way, but it was limited to specialty apps and seemed to be advancing only thanks to hard work being done by researchers at universities.

In 2011, voice recognition finally made its most successful consumer debut when Apple introduced its Siri voice assistant on the iPhone 4S. Siri wasn’t flawless, and Apple cautiously rolled out the feature over the following year, but for those who’d occasionally dabbled in voice recognition software before Siri arrived, it felt like a long-promised technology was finally ready for primetime. Siri clearly wasn’t, and her mistakes in understanding what iPhone users asked her to do became a running joke for years, at least until Amazon debuted its Alexa voice assistant in late 2014 and Google introduced its own voice-controlled assistant in mid-2016.

Powered by massive cloud servers and, in Google’s case, a near-comprehensive index of the sprawling internet, the next generation of smart assistants outperformed Siri and demonstrated the remarkable improvements made to voice recognition over the decade. They also quickly spread to multiple devices, including smart speakers, mobile devices, and appliances, and eventually became a strong driver of the smart home, making hundreds of IoT devices controllable through simple voice requests. As the decade comes to an end, we’ve even gotten a sneak peek at what the coming decade holds for voice recognition with Google’s Recorder mobile app, which can transcribe natural conversations in real time while rarely making a mistake.

It’s also a technology that’s developed some unfortunate tradeoffs along the way. Whereas software like Dragon NaturallySpeaking existed before home internet was common and worked entirely on the local computer, the voice assistants we’ve come to rely on send what we say to servers around the world. We’re already past the point of privacy concerns: despite what companies like Amazon and Google claim, millions of households across the country have essentially welcomed eavesdropping devices into their homes with open arms.

Artificial intelligence

To most consumers, artificial intelligence was still a vague concept at the start of the decade. It had been one of science fiction’s most ambitious ideas for decades, and while recreating the capabilities of the human brain with silicon and processors would be an important step toward a promised future filled with helpful robots and autonomous cars, it was also a mysterious technology confined to government research labs, think tanks, and universities. Self-driving cars existed, but they were mostly limited to obscure DARPA-funded challenges and tested a safe distance from the general public.

By the close of the decade, however, artificial intelligence is no longer such a vague concept. Self-driving test cars have been roaming big cities across the country for several years now, and while full autonomy isn’t quite a feature you can opt for at the dealership just yet, it is available in a limited fashion: many vehicles can now navigate crowded highways by themselves, find their way around a parking garage, and even masterfully parallel park into a space that would take most drivers 15 attempts. The advancements we’ve seen in only ten years are promising, but we’ve also seen the unfortunate downsides and huge challenges of developing a technology like this, which serve as a cautionary tale that some innovations shouldn’t be rushed out of the R&D lab.

The past decade has seen several other promising applications for artificial intelligence, including the voice-activated smart assistants we rely on in our homes and on our mobile devices, and the ability to make powerful applications like Photoshop even more capable by automating and streamlining tasks that would previously take artists hours or even days to complete. But we’ve also gotten a glimpse of how the technology could be abused in the coming decade. Terms like deep learning and neural network meant nothing to most of us ten years ago, but now they pop up all the time as the internet fills with deepfake videos featuring swapped faces and synthesized voices that will make it even harder to know for sure what’s real and what’s a lie.

Electric cars

The EV1, General Motors’ first mass-produced electric car, was more of an experiment than a new brand for the carmaker. It was only available through leases, and after four years the company reclaimed and destroyed most of them, much to the chagrin of loyal drivers who had fallen in love with the vehicles. GM claimed the EV1 wasn’t financially viable, but years later, in late 2010, the company had apparently figured out how to make electric cars profitable, when the Chevy Volt officially went on sale after three years of excessive hype.

Batteries are still the most problematic component in any device that promises to cut cords or end a reliance on fossil fuels, but the Volt managed to squeeze roughly 35 miles of all-electric range from its sizable battery, which was enough to get many drivers to and from work, or let them complete a handful of chores like grocery shopping, before the car needed a charge. To ease range anxiety, the Volt included a backup gas-powered generator that could kick on to power the vehicle’s electric powertrain, but that meant the Volt wasn’t a true electric car like Nissan’s compact Leaf, or the vehicles from a Silicon Valley-based startup known as Tesla.

Despite endless controversy, Tesla’s electric cars, which broke into the mainstream in 2012 with the Model S sedan (following the low-volume Roadster), boasted ranges of more than 200 miles on a single charge and offered touchscreen displays and other modern amenities that other automakers seemed uninterested in. They were expensive, but Tesla’s vehicles did more to advance the technology and popularity of electric cars than anything had over the previous century, thanks in part to the company working hard to set up a network of charging stations across the country so that stopping to charge up would be nearly as easy as stopping for gas. As the decade comes to a close, nearly every major carmaker now offers electric options, including luxury brands like Porsche. The era of the prestigious gas-guzzling SUV might finally be coming to an end.

Consumer health tech

It’s hard to imagine life without smartphones and the myriad apps providing much-needed distractions around the clock. But while mobile devices have arguably made us more addicted to technology than ever, they’ve also become a genuinely useful tool for getting fit and keeping tabs on our health. In fact, at the start of the decade, the wearable revolution was kicked off not by smartwatches but by an array of fitness trackers like the Nike FuelBand, which tracked steps and movement and provided endless motivation to get up and get outside for a little exercise.

Over the decade, the accuracy of these devices improved dramatically, eventually letting wearable activity trackers automatically detect whether you were swimming, jogging, or even riding a bike, so they could make more accurate estimates of your fitness level and the calories you were burning. For more dedicated athletes, companies like Polar offered chest straps that could monitor heart rate and share that information with a mobile app, but in 2015 heart-rate tracking finally reached mainstream consumers through the re-emergence of the smartwatch and wrist-worn devices like the Apple Watch and the Polar A360.

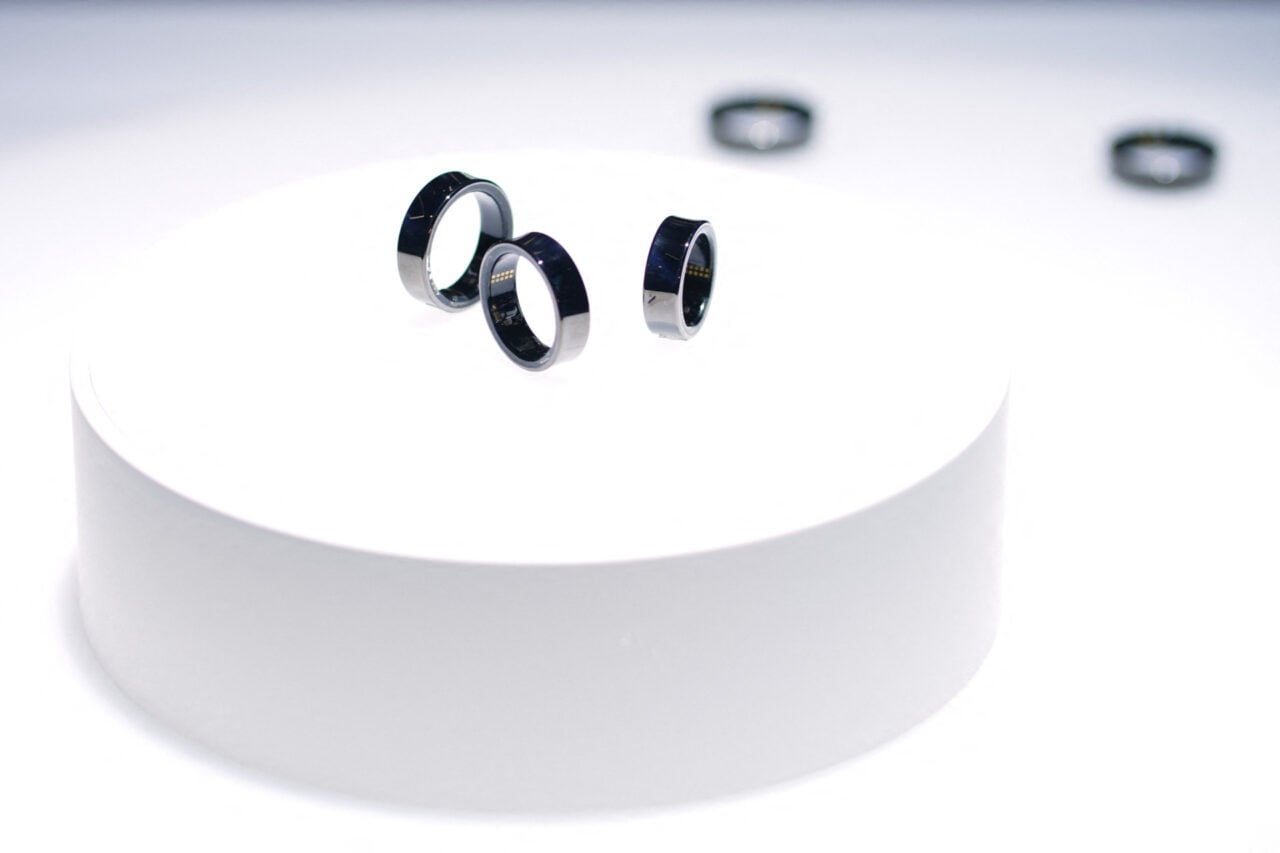

These wearable devices featured optical heart rate sensors that relied on a technique known as photoplethysmography, in which a bright light is shone onto the wearer’s skin and optical sensors detect the rhythmic changes in blood flow beneath it, allowing software to calculate and display heart rate in real time. For tracking physical activity, heart rate provided a far more accurate measure of the body’s performance than movement measured by an accelerometer alone. A few years later, the Apple Watch was upgraded with the ability to take actual electrocardiograms, which record the electrical signals that keep your ticker pumping. That allowed for more accurate readings of the heart’s rhythm, and it meant the Apple Watch Series 4 could detect signs of potentially life-threatening conditions such as erratic rhythms and atrial fibrillation. Going from “get off the couch, you lazy bum” to “you need to see a doctor immediately, it could save your life” is quite a leap for a technology in just 10 years.
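To make the photoplethysmography idea a little more concrete, here is a minimal Python sketch, assuming a clean, synthetic pulse signal rather than real sensor data. It finds the peaks in the signal and converts the average spacing between them into beats per minute. The function name, the 50 Hz sample rate, and the signal itself are hypothetical; real wearables have to filter out motion and ambient-light noise with far more sophisticated processing, and this is not how any particular device actually works.

```python
import math

def estimate_bpm(samples, sample_rate_hz):
    """Estimate heart rate from a PPG-like signal by finding pulse peaks.

    A peak is a sample above the signal mean that is higher than the
    previous sample and at least as high as the next one. The average
    spacing between consecutive peaks gives the time per beat.
    """
    mean = sum(samples) / len(samples)
    peaks = [
        i for i in range(1, len(samples) - 1)
        if samples[i] > mean
        and samples[i] > samples[i - 1]
        and samples[i] >= samples[i + 1]
    ]
    if len(peaks) < 2:
        return None  # not enough beats to measure an interval
    intervals = [b - a for a, b in zip(peaks, peaks[1:])]
    seconds_per_beat = (sum(intervals) / len(intervals)) / sample_rate_hz
    return 60.0 / seconds_per_beat

# Hypothetical synthetic signal: a clean 1.2 Hz pulse (72 beats per minute)
# sampled at 50 Hz for 10 seconds. Real sensor data is far noisier.
RATE = 50
signal = [math.sin(2 * math.pi * 1.2 * n / RATE) for n in range(10 * RATE)]
print(round(estimate_bpm(signal, RATE)))  # prints 72
```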

Wireless connectivity

By 2010 it was rare to find a home that hadn’t long since upgraded to a wifi router to conveniently broadcast wireless internet to almost every device, but the past decade saw a huge increase in the number of gadgets in a home that demanded access to the web. Smart home and IoT (internet of things) devices quickly took advantage of wireless chips small enough to hide inside a light bulb, and being able to remotely operate almost every appliance in your home from an app created a growing demand for wireless bandwidth.

Not only did wifi speeds improve over the decade, so did the approach routers took to broadcasting internet throughout a home. Antennas got larger and more numerous, but eventually multiple units were needed to meet the demand for wireless connectivity in the average home, so companies like Eero popularized mesh networking, which spreads the bandwidth load across multiple access points to ease congestion and support an ever-growing number of wireless devices in a home.

The past decade also made another wireless technology almost impossible to live without: Bluetooth. Around 2010 the Bluetooth standard, which had already been used for years to connect devices like wireless headsets and computer mice, received some crucial updates to data transfer speeds and power consumption. This paved the way for Bluetooth to become the easiest way to stream audio from a device to a speaker, with devices like Jawbone’s tiny Jambox almost immediately replacing the stack of components that previously made up a decent home stereo. Eventually, Bluetooth spread to headphones, eliminating that awkward cable (and allowing smartphone makers to get rid of the headphone port), and as the hardware and software further improved, wireless headphones were miniaturized to the point where it’s easy to lose one down the sink if you’re not careful.